Don't Blame ChatGPT for the Florida State Shooting

A new lawsuit claims that ChatGPT gave the shooter information about busy times on campus and how to use guns.

"ChatGPT advised the FSU shooter that a mass shooting would get more attention from media if it involved several children," NBC deputy tech editor Ben Goggin posted on X yesterday.

"Advised" is a funny way to put it, implying that the artificial intelligence system recommended this course of action or helped the shooter—then-20-year-old Phoenix Ikner—plot details of how he would carry out his attack. In fact, ChatGPT seems to have provided neutral information in response to questions that were not obviously asked with murderous intent.

That attack, which took place in April 2025 at Florida State University, left two people dead, including Tiru Chabba. Chabba's widow, Vandana Joshi, is now suing ChatGPT maker OpenAI in federal court, alleging negligence, battery, defective design, failure to warn, and wrongful death.

You are reading Sex & Tech, from Elizabeth Nolan Brown.

After chatting with the shooter, ChatGPT "either defectively failed to connect the dots or else was never properly designed to recognize the threat," the suit alleges. OpenAI "failed to create a product that would refrain from participating in discussions that amounted to it co-conspiring with Ikner" and "failed to create a product that would appropriately alert a human that investigation by law enforcement may be necessary to prevent a specific plan for imminent harm to the public."

But treating the conversations between ChatGPT and Ikner as grounds for legal liability is misguided, no matter how understandable it may be that the victims' loved ones would want to assign blame here.

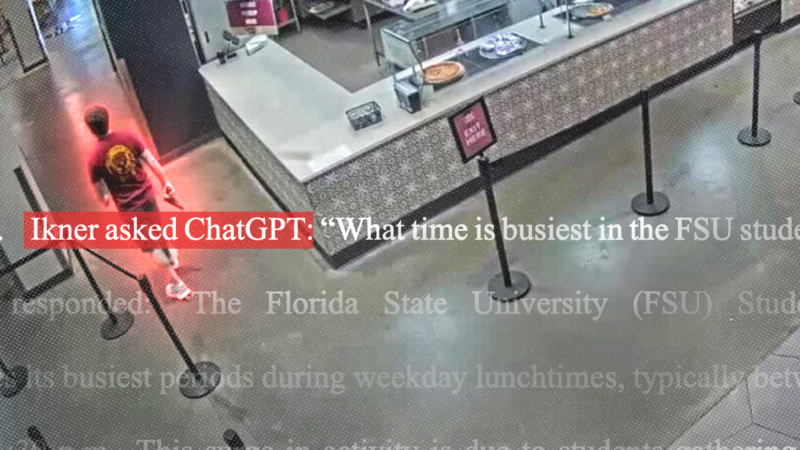

In this case, ChatGPT allegedly provided Ikner with information on basic features of certain guns, on what times the FSU student union was crowded, and on what sorts of mass shootings received attention.

Knowing what Ikner eventually did, it may be easy to view this as damning. But asking about what times a campus is crowded is not at all weird in itself. Asking how a gun works could be simple curiosity, or related to hunting or self-protection. And researching the common features of prominent mass shootings is something one might do for all sorts of harmless reasons—academic research, media criticism, or gun violence prevention efforts, to name a few. ChatGPT providing neutral information on the kinds of shootings that receive attention does not amount to (as the suit alleges) "advice" or "recommendations."

And just because Ikner asked about all three things does not mean he did so simultaneously, in one session, in a way that might trigger alarms. It's possible for people to use AI tools in ways that would make "connect[ing] the dots" between dispersed conversations difficult.

It wasn't as if Ikner talked with ChatGPT about nothing but mass shootings. Joshi's complaint alleges that ChatGPT "helped him with his homework and his work-out routines, gave him tips on getting girls and relationship advice, and suggested to him how to dress and style his hair." They chatted about everything from loneliness and being bullied to video games, Nazis, Christian nationalism, Donald Trump, and mental health.

It also allegedly advised him to seek help. "Ikner described his depression to ChatGPT, who confirmed some of his symptoms and advised him to seek out a therapist," states the lawsuit. When Ikner asked about suicide, ChatGPT provided "information of effects of suicide on others and twice directed him to a suicide prevention hotline."

Joshi's complaint suggests the suicide talk in conjunction with other chats (including ones in which Ikner asked about the assassination attempts on Trump and one in which he asked about the aftermath of shootings) constituted a big red flag. Again, we don't even know that ChatGPT had historical memory of any of these supposed red-flag conversations by the time another one came up. But even if it did, it's unclear why these queries should have raised alarms. Most people who contemplate suicide don't become mass shooters. It's natural for people to want information about assassination attempts on the president. And a question about what would happen after a mass shooting at FSU could easily be something that someone afraid of school shootings would wonder.

"ChatGPT provided factual responses to questions with information that could be found broadly across public sources on the internet," OpenAI spokesperson Drew Pusateri told NBC, "and it did not encourage or promote illegal or harmful activity."

It's important in emotionally charged situations like these to think about the alternatives: alternatives to Ikner getting this information from ChatGPT and alternatives to the way ChatGPT and OpenAI handled things.

It seems silly to imagine that if Ikner had not gotten any of the objected-to information from ChatGPT, he wouldn't have been able to carry out his planned shooting. All of the information he gleaned could have been obtained easily from a basic internet search or other sources.

ChatGPT could be trained to refuse to answer questions about certain topics, including guns or the history of mass shootings. But this could limit its general usefulness and prevent it from providing information to people seeking it for neutral or even beneficial reasons—and for what ultimate purpose? A motivated criminal isn't going to give up just because ChatGPT won't answer his question.

OpenAI could be more aggressive in reporting people to authorities over their chat topics. But this seems unlikely to go well for anyone. It would almost certainly make people more wary of using ChatGPT. AI detractors may imagine that as a good thing—until people start turning to other AI tools, including those outside the United States and unsympathetic to any U.S. law enforcement requests.

And authorities would be overwhelmed by useless reports. Following up on all of these could take time away from more important pursuits. It could also lead to all sorts of negative encounters between innocent individuals and police, putting people's civil liberties and even their lives at risk.

If tech companies are potentially on the hook for murder because their AI products chatted with a murderer, we can expect to see them reporting anyone who asks about mental health, guns, historical violence, and much more. This would inevitably draw a lot of innocent people into encounters with police, child welfare agencies, and other authorities.

Each new entertainment and communications tool gets its turn being blamed, in the public imagination and in court, for people's bad acts. Before AI, we saw people blame social media; before social media, we saw people blame video games; before video games, we saw people blame violent TV and movies, and so on.

People want some simple answer to horrible events—just ban violent video games, or put ratings on TV shows, or make AI companies file more police reports. But expecting AI companies to stop shootings won't lead to fewer shootings. It's just going to create new problems.

IN THE NEWS

Texas app store act blocked: "A federal judge in Austin has once again blocked a state law from taking effect that would regulate minors' access to content on Google Play and Apple's App Store," notes the Austin American-Statesman:

Judge Robert L. Pitman previously blocked the App Store Accountability Act from taking effect on Jan. 1 by issuing a preliminary injunction while the law's constitutionality is considered in court. He declined to lift that injunction Wednesday afternoon.

SB 2420, signed into law by Gov. Greg Abbott in 2025, would require app stores to ensure users are over 18 or obtain parental consent before allowing them to download or purchase an app.

Texas Attorney General Ken Paxton wanted Judge Pitman to permit enforcement of the law as the case played out. But Pitman has said the law raises serious concerns for free speech.

On Substack

"Instead of fracturing our shared reality, this handful of AIs seems to be piecing it back together," writes Jerusalem Demsas at The Argument. She argues that artificial intelligence is a centralizing rather than decentralizing technology.

Public conversation tends to treat chatbots as the next in a long line of digital communications technologies that have decentralized truth.

The internet, smartphones, and social media all made the production of information cheap and significantly decentralized who could produce it. AI is making the production of information extremely expensive and centralizing who can produce it.

And while, yes, AI hallucinates, the direction of its errors is toward mainstream consensus, not fringe positions. When ChatGPT gets something wrong, it tends to do so in a confused-Wikipedia-editor-misremembering-something-they-once-read kind of way, not in a QAnon-forum-poster-high-on-ketamine kind of way.

The open question is who will get to control the centralizing forces of AI.

Read This Thread

There are so many insane wildly misleading stories coming out about data centers almost every day now that I'm mostly having to give up on commenting on them to focus on actually getting blog posts out, but it feels like a tsunami. I'll share one from just today as an example.

— Andy Masley (@AndyMasley) May 10, 2026

More Sex & Tech

• Prostitution has "been called the oldest profession, and it seems like if there is a willing seller and a willing buyer between adults, the government has no business getting involved," Rhode Island state Rep. Edith Ajello (D-Providence) told The Providence Journal. Ajello is the lead sponsor of House Bill No. 8057, which would decriminalize prostitution in the state. In April, the legislature held the measure for further study.

• A Foundation for Individual Rights and Expression poll conducted in April 2026 found that only 26 percent of respondents trust the federal government to oversee social media use for minors. But most people—69 percent—said they trusted parents to do so.

• Lawmakers in Portland, Oregon, want to make it easier to crack down on hotels where prostitution takes place. But "shutting down a venue doesn't make [sex work] go away," Emi Koyama, founder of Coalition for Rights & Safety for People in the Sex Trade, told Filter. "It displaces people to other areas, and it becomes more dangerous."

• "Adult site Pornhub will now allow users in the U.K. to confirm their age using Apple's verification system, introduced in iOS 26.4," reports Forbes. Pornhub's parent company, Aylo, has resisted conducting its own ID checks to verify ages but "announced on May 5, 2026 that Apple's method—the world's first operating-system-level age check—meets their rigorous privacy standards."

• Chris Ferguson on a new study of cell phone bans in schools: "at least on the surface, this study is very bad news, indeed for cellphone ban fans. It supports the narrative that they are largely ineffective. There are some reasonable criticisms of the study though."