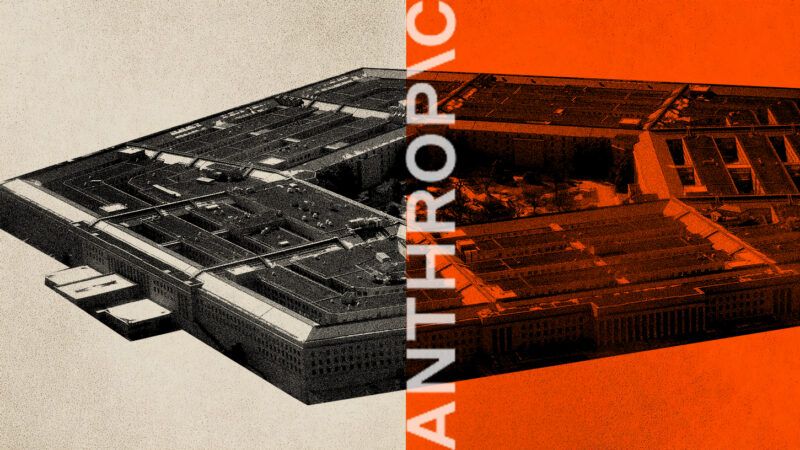

The Federal Government's Crusade Against Anthropic Raises First Amendment Concerns

Trump administration officials openly seek to punish the AI company for its corporate philosophy.

Vendors have the right to do business with whomever they please, and to put conditions on the purchase of their goods and services. Buyers have a matching right to choose among vendors, and to enter only deals that serve their purposes. But when government officials go further and use their power to punish private businesses that won't sell them what they want, they may run afoul of constitutionally protected rights. That's the case in the battle between AI firm Anthropic and the Trump administration.

You are reading The Rattler from J.D. Tuccille and Reason.

A Company With Ethical Boundaries

Anthropic is a leading tech company whose AI model, Claude, reportedly played a role in the capture of former Venezuelan dictator Nicolás Maduro. But, like many companies, Anthropic limits the use of its technology for reasons of internal beliefs, public relations, or both. The company's philosophy is based on the idea that AI is potentially dangerous and should be built around "good personal values, being honest, and avoiding actions that are inappropriately dangerous or harmful."

In a February 26 press release, Anthropic CEO Dario Amodei pointed out that his company "chose to forgo several hundred million dollars in revenue to cut off the use of Claude by firms linked to the Chinese Communist Party" because it didn't want to enable an authoritarian regime. Likewise, "in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values" when used by any government, including authorities in the United States.

"Some uses are also simply outside the bounds of what today's technology can safely and reliably do," Amodei added. "Two such use cases have never been included in our contracts with the Department of War, and we believe they should not be included now: Mass domestic surveillance" and "fully autonomous weapons."

As limitations go, refusing to help build a totalitarian police state or produce killer robots seems reasonable. But reasonableness doesn't matter, because Anthropic has the right to draw whatever lines it wishes. The U.S. government can then respect those limits or take its shopping needs elsewhere.

But that's not what the federal government did. Instead, the president and his allies threw public temper tantrums over Anthropic telling them "no."

Feds Target a Corporate Philosophy for Punishment

"THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS!" President Donald Trump huffed on Truth Social. "Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic's technology."

"@AnthropicAI and its CEO @DarioAmodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of 'effective altruism,' they have attempted to strong-arm the United States military into submission – a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives," sniffed Pete Hegseth, secretary of what the administration now calls the Department of War. "In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic."

In their comments, administration officials made it clear they were punishing Anthropic for its corporate beliefs, not for any actual danger the company poses to the country.

"Designating Anthropic as a supply chain risk would be an unprecedented action—one historically reserved for US adversaries, never before publicly applied to an American company," Anthropic responded prior to filing a lawsuit against the federal government. "We believe this designation would both be legally unsound and set a dangerous precedent for any American company that negotiates with the government."

Legally unsound and dangerous are good descriptions for a government policy that is openly intended as retaliation against a company for its corporate philosophy.

The First Amendment Is at Stake When Government Punishes Private Ethics

"The claim implicit in the Pentagon's reported demand is…that when national security is invoked, the state's judgment supersedes the moral constraints of the supplier. The company may sell — but only on terms that dissolve its own ethical boundaries," notes Walter Donway for the American Institute for Economic Research's The Daily Economy.

Importantly, the Anthropic–Pentagon dispute raises the issue of whether the government can punish a company for disagreeing with government officials as to what constitutes ethical behavior.

"Though the media is busy framing this as a national security showdown, it actually poses a constitutional concern," warns John Coleman for the Foundation for Individual Rights and Expression (FIRE). "It is a test of whether the federal government can weaponize its contracting power to force a private company to bend the knee."

That doesn't mean the federal government must do business with Anthropic. But it can't forbid federal contractors to use the company's products as a punishment.

"The government's actions, which are designed to harm Anthropic's business, raise serious constitutional concerns, including threats of compelled speech and retaliation against a company for taking positions disfavored by government officials," Coleman added.

FIRE filed an amicus brief with the U.S. District Court for the Northern District of California supporting Anthropic's First Amendment case against the federal government.

Anthropic is not the first company to put conditions on the sale of its products to governments. For years, Barrett Firearms has refused to sell its products to agencies in jurisdictions that don't allow civilians to own large-bore guns. Home Depot had a longtime policy against doing business with the federal government because it didn't want the bureaucratic headaches that came with being classified as a federal contractor; it reversed that policy amid public blowback during the Iraq War.

Nobody, the Defense Department included, should be forced to do business with Anthropic. But neither should the government be allowed to penalize private parties that refuse to do things they consider wrong.