Study of Virtual Images Suggests Jurors May Not Know Child Porn When They See It

Not to worry: Prosecutors can use a backup law that also makes it a crime to look at cartoons.

A new study finds that people have "considerable difficulty" distinguishing between photographs and computer-generated images of human faces, a fact the authors suggest will complicate prosecution of child pornography cases. "As computer-generated images quickly become more realistic, it becomes increasingly difficult for untrained human observers to make this distinction between the virtual and the real," says lead researcher Hany Farid, a professor of computer science at Dartmouth. "This can be problematic when a photograph is introduced into a court of law and the jury has to assess its authenticity."

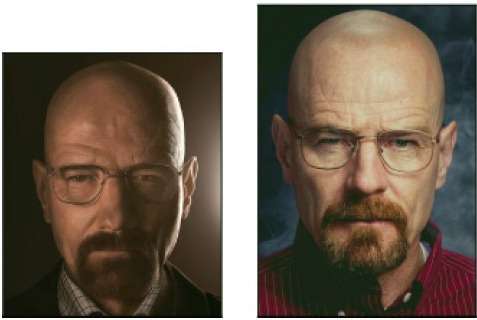

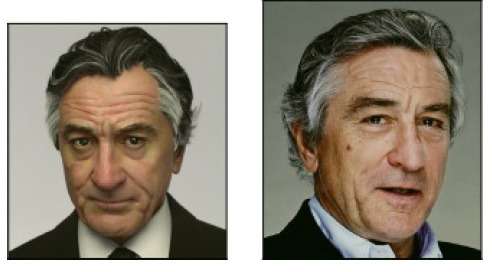

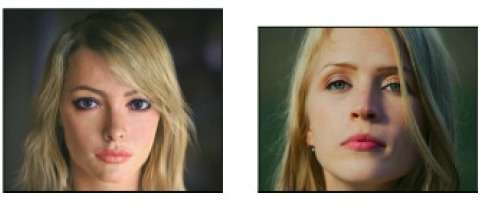

In the study, which was published by the journal ACM Transactions on Applied Perception, Farid and his colleagues showed 250 subjects 60 pictures of men's and women's faces, half of which were photographs and half of which were computer-generated images. "Observers correctly classified photographic images 92 percent of the time," Farid et al. report, "but correctly classified computer-generated images only 60 percent of the time."

In a second experiment, subjects were first shown 10 pairs of labeled real and virtual images, then shown the same 60 pictures used in the first experiment. They were better than the first group of subjects at identifying the computer-generated pictures, classifying them correctly 76 percent of the time. But they were somewhat worse than the original subjects at identifying photographs, classifying them correctly 85 percent of the time, perhaps because the training made them second-guess accurate perceptions.
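To see the net effect of that trade-off, the reported rates can be combined into a single overall accuracy figure. The sketch below is a back-of-the-envelope calculation, not a figure from the study: it assumes the image set was split evenly between photographs and computer-generated images (as described above) and that each reported percentage is an average over its half of the set. The function name `overall_accuracy` and its parameters are illustrative.

```python
def overall_accuracy(photo_rate, cg_rate, photo_share=0.5):
    """Weighted average of per-class accuracy.

    photo_share is the fraction of images that are photographs;
    0.5 reflects the half-and-half split described in the article.
    """
    return photo_share * photo_rate + (1 - photo_share) * cg_rate


# Rates quoted in the article (assumed to be averages over each half).
untrained = overall_accuracy(photo_rate=0.92, cg_rate=0.60)
trained = overall_accuracy(photo_rate=0.85, cg_rate=0.76)

print(f"untrained overall accuracy: {untrained:.1%}")
print(f"trained overall accuracy:   {trained:.1%}")
```

Under those assumptions, untrained observers come out at about 76 percent overall and trained observers at about 80.5 percent, so the brief training appears to help on balance even though it costs some accuracy on real photographs.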

Observers, whether trained or not, did significantly worse than the subjects in a similar study that Farid and his colleagues conducted five years ago—an indication that the quality of virtual images has improved. "We expect that as computer-graphics technology continues to advance, observers will find it increasingly difficult to distinguish computer-generated from photographic images," Farid et al. write. "Although this can be considered a success for the computer-graphics community, it will no doubt lead to complications for the legal and forensic communities. We expect that human observers will be able to continue to perform this task for a few years to come, but eventually we will have to refine existing techniques and develop new computational methods that can detect fine-grained image details that may not be identifiable by the human visual system."

Congress anticipated this development back in 1996, when it passed the Child Pornography Prevention Act. That law's definition of child pornography included "any visual depiction" that "appears to be" an image of "a minor engaging in sexually explicit conduct." Six years later, the Supreme Court overturned that aspect of the law, concluding that it was overbroad, covering constitutionally protected speech such as movie versions of Lolita or Romeo and Juliet. In 2003 Congress tried again with the PROTECT Act, which banned "obscene visual representations of the sexual abuse of children." Although the main rationale for that prohibition was preventing people caught with actual child pornography from winning acquittal by demanding that the government prove it was not virtual, the proscribed material explicitly includes drawings, cartoons, sculptures, and paintings that no one would mistake for the real thing.

On the face of it, the problem highlighted by Farid et al. should not pose much of a challenge for federal prosecutors. If it becomes increasingly difficult to prove that purported child pornography shows actual children, prosecutors can instead charge defendants with violating the PROTECT Act, which carries the same penalties as the provisions dealing with child pornography, including up to 10 years in prison for possession and a five-year mandatory minimum for "receiving" a prohibited picture, which amounts to the same thing when the image is viewed online. But PROTECT Act prosecutions are more problematic for a couple of reasons. First, prosecutors have to prove the image is obscene, meaning it "appeals to the prurient interest" and "lacks serious literary, artistic, political, or scientific value." Second, the Supreme Court has said the First Amendment forbids punishing people for mere possession of obscene material, unless it is child pornography. Hence any prosecution for merely possessing material banned by the PROTECT Act is constitutionally questionable.

Then again, when someone is caught with pictures that have been transmitted via the Internet (as is typically the case), he can always be charged with receiving them, which triggers the five-year mandatory minimum. The U.S. Court of Appeals for the 4th Circuit upheld such a conviction in a 2008 case involving Dwight Whorley, a Virginia man who was caught looking at "Japanese anime-style cartoons of children engaged in explicit sexual conduct with adults." The 4th Circuit rejected the argument that "receiving" obscene material via the Internet is essentially the same as possessing it, an act the Supreme Court has said is constitutionally protected. Prosecutors also can obtain convictions for possession under the PROTECT Act through guilty pleas coerced by the threat of a receiving charge. That's what happened in a 2011 case involving cartoon pornography featuring characters from The Simpsons and a 2013 case involving "incest comics."

Farid et al. say "juries are reluctant to send a defendant to jail on an obscenity charge for merely possessing computer-generated imagery when no real child was harmed in its creation." That may be true, but jurors generally do not know the penalties a defendant faces, and lawyers are not allowed to tell them. Dwight Whorley, who had previous convictions involving cartoons as well as "digital photographs depicting minors engaging in sexually explicit conduct," received a 20-year sentence. Even if he had no record, he would have been subject to the five-year mandatory minimum just for looking at cartoons. It is unlikely that the jurors realized that—or that they would have believed it had they been told.