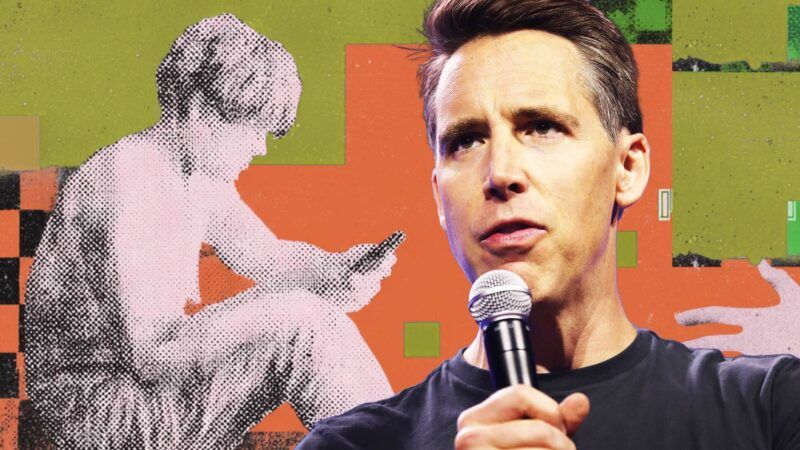

How a Bill Banning AI Companions for Kids Could Usher in Widespread ID Checks Online

Plus: Supreme Court pauses ban on mail-order abortion pills, TikTok's artistic merit, a defense of pickup artists, and more...

Sen. Josh Hawley's Guidelines for User Age-verification and Responsible Dialogue (GUARD) Act advanced out of the Senate Judiciary Committee last week. "A Trojan horse for universal online ID checks," is how Jibran Ludwig of Fight for the Future described it.

The bill would require anyone using an AI chatbot to provide proof of identity, and it would ban minors from interacting with many sorts of AI chatbots entirely.

Unlike some social media age verification bills, it would give parents no right to opt out of the rules the federal government sets on their kids' technology use.

You are reading Sex & Tech, from Elizabeth Nolan Brown.

The GUARD Act is co-sponsored by Sen. Richard Blumenthal (D–Conn.), who—like Hawley—has long been a champ at moral panic around technology. (Cue: Bipartisan is just another word for really bad idea…)

And while some on the Senate Judiciary Committee expressed concerns about privacy or how this could actually backfire and harm minors, those senators still voted to advance the bill. It "easily passed in committee," notes The Hill, despite some senators' reservations:

Sen. Alex Padilla (D-Calif.), who voted yes, said there are concerns about "potential privacy and security risks" with the age-verification component, suggesting it may need to be "fine-tuned."

Sen. Ted Cruz (R-Texas), who supported various kids online safety bills, said he would vote yes but noted the bill needs "some revisions."

Cruz was concerned the bill would completely ban all AI chatbots for minors, noting their potential benefits. Hawley clarified the bill does not ban all AI chatbots for minors, but rather it "prevents AI chatbots that engage with minors from pushing sexually explicit material to the minor," or encouraging self-harm or suicide.

That seems like some incredibly disingenuous framing from Hawley. While the bill does ban what he says it does, it would also do a whole lot more. Such as:

1) Ban Kids From Using Friendly AI

The GUARD Act defines AI companion as any AI system that "provides adaptive, human-like responses to user inputs; and is designed to encourage or facilitate the simulation of interpersonal or emotional interaction, friendship, companionship, or therapeutic communication." AI companies would be required to prohibit anyone under age 18 "from accessing or using any AI companion," the GUARD Act says.

That obviously goes way beyond stopping teens from chatting with robots about sex or violence. It takes away their right to talk to AI companions about any topic. And it defines AI companion so broadly that it would encompass any AI chatbot that affects a friendly or familiar tone.

Even at its least broad, such a ban would be a bad idea. Some teens might benefit from AI therapy tools. And there are all sorts of not-bad reasons why a teenager might want to engage with an AI chatbot capable of providing supportive or friendly communication.

In the broad form in which it's written, the bill could stop young people from engaging chatbots in all sorts of neutral or even positive interactions, including using "online tutors, practicing a foreign language, or developing an array of skills," as Jennifer Huddleston and Juan Londoño point out at the Cato Institute's blog:

AI tools have also become ubiquitous in many products, doing everything from providing tech support to helping customize a burrito (and perhaps being able to write code in the process). A February 2026 survey by the Pew Research Center found that over half of US teens use chatbots for help with schoolwork. The GUARD Act would prevent those under 18 from accessing any of these products.

2) Take Away Parental Rights

Parents would have no ability to opt their kids out of this ban. The GUARD Act takes the choice about when and how to introduce young people to certain AI technologies out of families' hands and puts it into the hands of the state.

"Restricting parental choice in this manner is indicative of a failure to consider both the unique values of every family and the potential for AI chatbots to improve the lives of many young people, including those with disabilities like autism," note Huddleston and Londoño. "Different families may have different views on when a child should or shouldn't access any technology. This decision appropriately belongs with parents, not policymakers."

3) Invade Everyone's Privacy

Besides its negative implications for minors, the GUARD Act would be a big blow to privacy, since implementing it would require some sort of identity verification from all AI chatbot users.

The GUARD Act says that any provider of an AI chatbot must require all users to create an account, and that creating an account requires age verification.

"By mandating government ID or equivalent age verification for any American who wishes to interact with an AI chatbot, the bill burdens the speech and associational rights of every adult, not just minors," Ashkhen Kazaryan of The Future for Free Speech told The Hill.

Because AI tools and chatbots are becoming ubiquitous across all types of digital platforms, the GUARD Act's age verification scheme could wind up much broader than it might initially appear.

It could wrap up "every social media platform and the website of any company operating AI customer service chatbots," the digital rights group Fight for the Future points out:

But it doesn't end there: any person who "makes available an artificial intelligence chatbot" is covered by the law. This would require everyone from internet service providers to anyone who runs a blog with a comment section to administer online ID checks. While apparently narrow, this bill is in fact an online ID check mandate unmatched in scope and highly invasive in methods.

For better and for worse, AI chatbots are threatening to overtake search engines as the primary way people find information online. This means that the millions of people who use these tools for everyday tasks will now be providing sensitive and private information to a sketchy, insecure age verification service, which have already resulted in thousands of people's private information being leaked. Government censorship is not confined to outright prohibition of speech: burdens like this are a legally dubious limit on free expression.

4) Chill (and Compel) Speech

The way this measure is written, it could seriously restrict what AI chatbots are allowed to say while simultaneously compelling them to speak the government's messages.

At the start of every conversation and at 30-minute intervals thereafter, AI chatbots would have to tell users that they are not human. At the start of every conversation, they would also have to "clearly and conspicuously disclose to the user that the chatbot does not provide medical, legal, financial, or psychological services; and users of the chatbot should consult a licensed professional for such advice."

Meanwhile, chatbots would be banned from "represent[ing], directly or indirectly, that the chatbot is a licensed professional, including a therapist, physician, lawyer, financial advisor, or other professional."

The "indirectly" bit there raises alarms. Authorities might argue that simply providing authoritative advice counts as an indirect representation of professional authority.

Then there's the ban on AI chatbots engaging minors in discussions about sexuality. It's written broadly—this isn't just about stopping minors from viewing pornography. The GUARD Act would make it "unlawful to design, develop, or make available an artificial intelligence chatbot, knowing or with reckless disregard for the fact that the artificial intelligence chatbot poses a risk of soliciting, encouraging, or inducing minors to engage in, describe, or simulate sexually explicit conduct."

This could ban AI chatbots—even those that are strictly non-companion-like—from talking with minors about safe sex practices, contraception, sexual orientation, and more, since doing so might "pose a risk" of getting the minors to talk about intercourse, oral sex, masturbation, or anything related.

It could also chill AI and user speech around topics related to sex and sexuality generally, not just when minors are concerned.

Notice that an AI chatbot provider need not intentionally or affirmatively engage a minor in sex talk; it need only act in "disregard" of the "risk" that it could do so. The penalty is $100,000 per offense. For tech companies looking to avoid liability, that makes a strong case for limiting such discussions more generally, training their bots to shut down any conversations related to sex.

"Like age-verification proposals for online services, this bill is unlikely to survive constitutional scrutiny," write Huddleston and Londoño. "But beyond its likely unconstitutionality, Sen. Hawley's approach endangers users' privacy, limits parental rights, and locks minors out of beneficial uses of AI."

IN THE NEWS

Supreme Court pauses ban on mail-order abortion pills. On Friday, the 5th U.S. Circuit Court of Appeals said it's illegal to mail abortion pills. Today, the Supreme Court put a one-week pause on that decision.

In a unanimous ruling last week, 5th Circuit judges said the abortion-inducing drug mifepristone can be handed out only in person, contrary to the U.S. Food and Drug Administration's current prescribing rules. "Friday's ruling…affects all states, even those without abortion restrictions," noted PBS. "There is little precedent for a federal court overruling the scientific regulations of the FDA, and it remains to be seen how the decision could impact how the drug is dispensed long-term."

On Saturday, two mifepristone manufacturers asked the Supreme Court to stay the 5th Circuit's ruling. From SCOTUSblog:

The companies, Danco Laboratories and GenBioPro, both told the justices that the 5th Circuit's order was "unprecedented." Danco argued that the order "injects immediate confusion and upheaval into highly time-sensitive medical decisions," while GenBioPro said that the order "has unleashed regulatory chaos."…

Today, the Supreme Court did indeed pause the 5th Circuit's ruling. So mail-order mifepristone is still legal, for now.

"The order signed by Justice Samuel Alito temporarily allows women seeking abortions to obtain the pill at pharmacies or through the mail, without an in-person visit to a doctor," reports the Associated Press. "Alito's order will remain in effect for another week while both sides respond and the court more fully considers the issue."

ON SUBSTACK

Gen Z marriage myths: Halina Bennet at Slow Boring pushes back against the idea that marriage is dead among the college-educated ranks of Gen Z:

The data tells us that college-educated women are still marrying—they are just marrying later. It's actually the numbers among non-college-educated women that are falling. Many of the people who will eventually marry simply haven't reached the average age of marriage among college-educated women.

Bennet conducted her own survey of people ages 18 to 35, and "the portrait that emerged was of a generation that has decided that marriage is worth having, and that value makes it worth waiting for."

But Bennet does see a tendency to place too much stock in doubt. "This is a generation trained to wait for complete information—for the best option, the right moment, the optimal conditions," she writes. "Marriage resists this habit because the data is never complete and the conditions never fully stabilize."

READ THIS THREAD

Point:

Frankly, they're not just replacing reading with vertical video slop. They're replacing EVERYTHING with vertical video. Streaming, gaming, IRL interactions, all of it is being swallowed by vertical shortform video. https://t.co/synEcWAw4q pic.twitter.com/XrUQXQNcDh

— Jeremiah Johnson 🌐 (@JeremiahDJohns) April 27, 2026

And counterpoint?

"There are about 70% more bookstores now than there were six years ago in the United States. After 20 years of declining numbers, they're coming roaring back."

"Since 2020,… American Booksellers Association membership has grown from 1,900 to 3,200." https://t.co/XTUijwVQQn

— 𝙲𝚑𝚊𝚛𝚕𝚎𝚜 𝙲. 𝙼𝚊𝚗𝚗 (@CharlesCMann) April 30, 2026

Relatedly: "Is TikTok art?" asks The Argument.

"Kids don't just spend time on social media because they are screen junkies who can't read. That would be too easy," Maibritt Henkel writes.

They spend time on social media, in large part, because social media has become brilliantly, absurdly, unprecedentedly, entertaining.

Even if you wish it weren't, vertical 30-second video is the creative medium of our time. Taking seriously the merits of any new formal paradigm is in the spirit of how we have met every technological rupture in art history.

More Sex & Tech

• Magdalene J. Taylor defends the pickup artist. "While some might argue that pickup artistry faded due to its misogynistic attitudes, the reality is that the manosphere it grew from is more misogynistic than ever," she writes. "Trying to get laid has been traded for a culture of hustle and grift that views women as a waste of time. At the very least, the pickup artist didn't think so."

• "The Trump administration's intrusive social media rules are a gift to tyrants," writes Julian Sanchez. Pointing to the U.S. Embassy in Thailand posting that all visa applicants must set their social media profiles to public, he notes that "demanding prospective visitors and immigrants set their social media profiles public isn't just an intrusive policy in service of a constitutionally dubious scheme to exclude people with disfavored political opinions: It is likely to put applicants, their friends, and their families in very real, physical danger" in countries like Thailand, where it's still criminal to insult the royal family.

• Oklahoma lawmakers passed a bill to criminalize providing a woman with abortion pills. "Supporters argued it was necessary to save the unborn and reduce abortions forced on women by sex traffickers," reports the Oklahoma Voice. (That last bit is a stellar example of how supporters of abortion bans try to negate women's agency in the abortion debate so that their bans can be framed as for women's own good.)