Congress Weighs a Moratorium on Facial Recognition and Biometric Surveillance Technologies

Today, a group of congressional Democrats re-introduced the Facial Recognition and Biometric Technology Moratorium Act of 2021. And it's not a moment too soon.

Earlier this month, a coalition of more than 40 privacy advocacy organizations, including the Electronic Frontier Foundation, the Electronic Privacy Information Center, Fight for the Future, and Restore the Fourth, issued a declaration calling for a ban or moratorium on law enforcement use of facial recognition technology. In its statement, the coalition observed that police use of facial recognition technologies is already becoming pervasive.

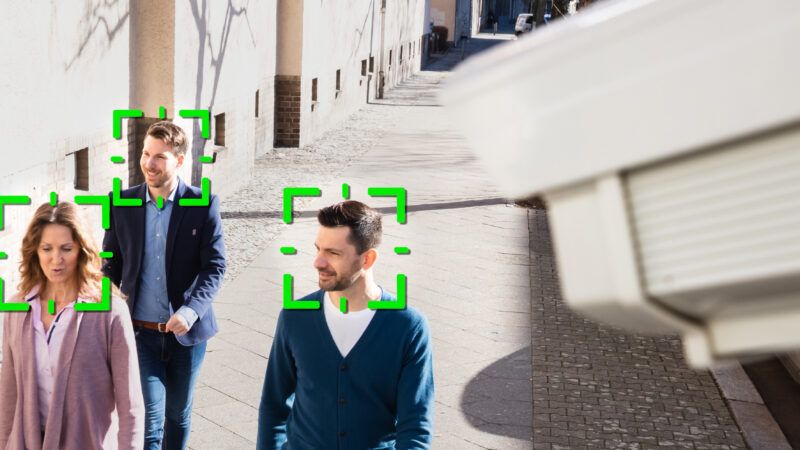

"Law enforcement agencies routinely use the technology to compare an image from bystanders' smartphones, CCTV cameras, or other sources with face image databases maintained by local, state, and federal agencies," noted the coalition. "Potentially more than 133 million Americans are included in these databases, with at least thirty-one states giving police access to driver's license images to run or request searches, and twenty-one states giving the FBI access to the same. Law enforcement use of these databases for investigations places millions of Americans in what has been called a 'perpetual line-up.'"

In addition, more than 1,000 federal, state, and local law enforcement agencies have used the facial recognition tool developed by the surveillance startup Clearview AI. That company created its massive faceprint database by illicitly scraping more than 3 billion photos from across the Internet, including employment sites, news sites, YouTube, and social media networks such as Facebook, LinkedIn, and Instagram.

Using the service, police can upload a photo of an unidentified person to Clearview AI's database and retrieve publicly posted photos of that person along with the locations at which the photos were posted. Back in January 2020, the New York Post reported, "Rogue NYPD officers are using sketchy [Clearview AI] facial recognition software on their personal phones that the department's own facial recognition unit doesn't want to touch because of concerns about security and potential for abuse."

The privacy group coalition argued that ubiquitous police facial recognition surveillance would chill the exercise of First Amendment freedoms to protest, attend political events, or gather for religious ceremonies. "American history is fraught with efforts to monitor individuals based on dissent or religious beliefs, and face recognition could supercharge that surveillance," the coalition pointed out.

In addition, police often hide the fact that they used facial recognition software in investigating cases, thus preventing defendants from exercising their Sixth Amendment rights to challenge the accuracy of identifications made by police procedures and software algorithms. While noting that current versions of facial recognition technologies are much worse at accurately identifying black and brown people, the coalition stressed that "face recognition expands the scope and power of law enforcement, an institution that has a long and documented history of racial discrimination and racial violence that continues to this day."

"Facial recognition is the perfect tool for oppression," wrote Woodrow Hartzog, a professor of law and computer science at Northeastern University, and Evan Selinger, a philosopher at the Rochester Institute of Technology. It is, they persuasively argued, "the most uniquely dangerous surveillance mechanism ever invented."

"Facial recognition is like nuclear or biological weapons. It poses such a threat to the future of human society that any potential benefits are outweighed by the inevitable harms," asserted Caitlin Seeley George, director of campaigns and operations for the privacy group Fight for the Future, in a statement supporting the bill. "This inherently oppressive technology cannot be reformed or regulated. It should be abolished."