San Francisco Facial Recognition Ban Proposed

It's a good idea that libertarians should applaud.

Aaron Peskin, a member of San Francisco's Board of Supervisors, has proposed a new ordinance that could severely limit city agencies' use of enhanced surveillance technology, including a ban on facial recognition. "I have yet to be persuaded that there is any beneficial use of this technology that outweighs the potential for government actors to use it for coercive and oppressive ends," Peskin said.

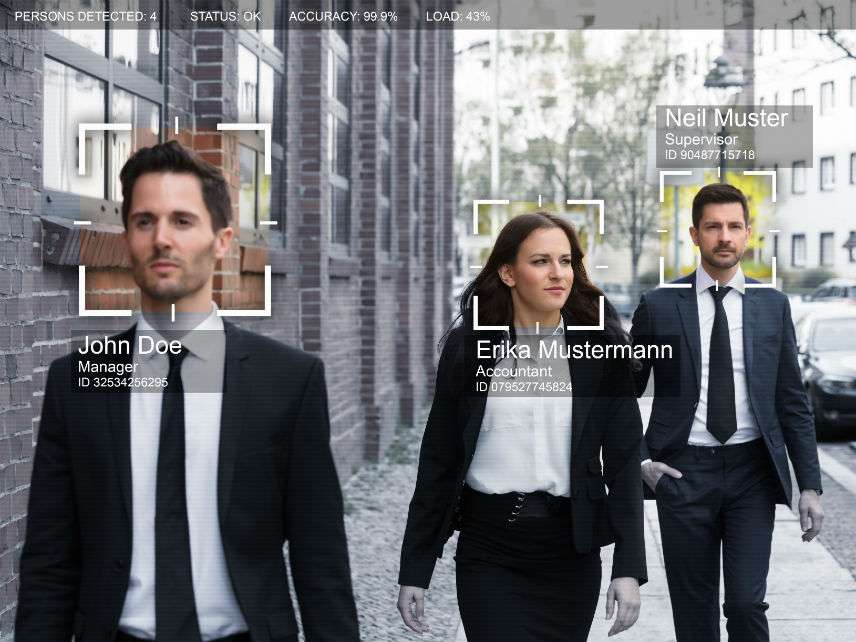

Peskin is not alone in worrying about how government agents can abuse the technology. Woodrow Hartzog, a professor of law and computer science at Northeastern University, and his colleague, Rochester Institute of Technology philosopher Evan Selinger, make a strongly persuasive case that "facial recognition is the perfect tool for oppression." As a consequence of the technology being "such a grave threat to privacy and civil liberties," they argue that "measured regulation should be abandoned in favor of an outright ban."

Their list of the harms to privacy and civil liberties entailed by the widespread use of this technology by government agencies is chilling. They point out that people who think they are being watched behave differently. In addition, they cite a host of other abuses and corrosive activities that the technology enables:

- Disproportionate impact on people of color and other minority and vulnerable populations.
- Due process harms, which might include shifting the ideal from "presumed innocent" to "people who have not been found guilty of a crime, yet."
- Facilitation of harassment and violence.
- Denial of fundamental rights and opportunities, such as protection against "arbitrary government tracking of one's movements, habits, relationships, interests, and thoughts."
- The suffocating restraint of the relentless, perfect enforcement of law.
- The normalized elimination of practical obscurity.
- The amplification of surveillance capitalism.

Why ban this surveillance technology, rather than regulate it, but not others such as geolocation data and social media searches? Hartzog and Selinger argue that systems using face prints differ in important ways that justify banning them. Given the spread of CCTVs and police body cameras, faces are hard to hide and can be inexpensively and secretly captured and stored. All-seeing cameras and never-forgetful digital databases will put an end to the obscurity zones that secure the possibility of privacy for us all.

In addition, existing databases, such as those for driver's licenses, make facial recognition tech easy to plug in and play. In fact, it's already been done by the FBI. In their 2016 report, The Perpetual Line-Up, Georgetown University researchers found that 16 states had let the FBI use facial recognition technology to compare the faces of suspected criminals to the states' driver's license and ID photos, creating a virtual line-up of each state's residents. "If you build it, they will surveil," assert Hartzog and Selinger.

China's social credit system is already taking advantage of facial recognition technology. According to Fortune, the Chinese government is deploying facial recognition tied to millions of public cameras to not only "identify any of its 1.4 billion citizens within a matter of seconds but also having the ability to record an individual's behavior to predict who might become a threat—a real-world version of the 'precrime' in Philip K. Dick's Minority Report."

"The future of human flourishing depends upon facial recognition technology being banned before the systems become too entrenched in our lives," conclude Hartzog and Selinger. "Otherwise, people won't know what it's like to be in public without being automatically identified, profiled, and potentially exploited. In such a world, critics of facial recognition technology will be disempowered, silenced, or cease to exist."

Perhaps that's too ominous. Widespread use of facial recognition technology can certainly make our lives easier to navigate. But it doesn't take a cynic to worry that governments will demand the right to plunder private face print databases. It's not too soon to consider whether the tradeoffs between convenience and not-implausible government abuses of the technology are worth it.

The proposed ordinance in San Francisco requires agencies to gain the Board of Supervisors' approval before buying new surveillance technology and puts the burden on city agencies to publicly explain why they want the tools as well as to evaluate their potential harms. That's at least a step in the right direction.

For more on how we are already constructing a pervasive surveillance state, see my January feature article, "Can Algorithms Run Things Better Than Humans?" The answer depends on what you mean by "better."