Why Polls Don't Work

After decades of gradual improvement, the science of predicting election outcomes has hit an accuracy crisis.

On October 7, 2015, the most famous brand in public opinion polling announced it was getting out of the horse-race survey business. Henceforth, Gallup would no longer poll Americans on whom they would vote for if the next election were held today.

"We believe to put our time and money and brain-power into understanding the issues and priorities is where we can most have an impact," Gallup Editor in Chief Frank Newport told Politico. Let other operations focus on predicting voter behavior, the implication went, we're going to dig deeper into what the public thinks about current events.

Still, Gallup's move, which followed an embarrassingly inaccurate performance by the company in the 2012 elections, reinforces the perception that something has gone badly wrong in polling and that even the most experienced players are at a loss about how to fix it. Heading into the 2016 primary season, news consumers are facing an onslaught of polls paired with a nagging suspicion that their findings can't be trusted. Over the last four years, pollsters' ability to make good predictions about Election Day has seemingly deteriorated before our eyes.

The day before the 2014 midterms, all the major forecasts declared Republicans likely to take back the Senate. The Princeton Election Consortium put the odds at 64 percent; The Washington Post, most bullish of all, put them at 98 percent. But the Cook Political Report considered all nine "competitive" seats to be tossups—too close to call. And very few thought it likely that Republicans would win in a landslide.

Conventional wisdom had it that the party would end up with 53 seats at most, and some commentators floated the possibility that even those numbers were biased in favor of the GOP. The week before the election, for example, HuffPollster noted that "polling in the 2006 and 2010 midterm elections and the 2012 presidential election all understated Democratic candidates. A similar systematic misfire in 2014 could reverse Republican leads in a small handful of states."

We soon learned that the polls were actually overstating Democratic support. The GOP ended up with 54 Senate seats. States that were expected to be extremely close calls, such as Kansas and Iowa, turned into runaways for the GOP. A couple of states that many were sure would stay blue—North Carolina, Louisiana—flipped to red. The pre-election surveys consistently underestimated how Republicans in competitive races would perform.

The following March, something similar happened in Israel. Both pre-election and exit polls called for a tight race, with the Likud Party, headed by Prime Minister Benjamin Netanyahu, and the Zionist Union Party, led by Isaac Herzog, in a virtual tie. Instead, Likud easily captured a plurality of the vote and picked up 12 seats in the Knesset.

The pattern repeated itself in May, this time in the United Kingdom, where the 2015 parliamentary election was roundly expected to produce a stalemate. A few polls gave the Conservative Party a slight lead, but not nearly enough of one to guarantee it would be part of the eventual governing coalition. You can imagine the surprise, then, when the Tories managed to grab 330 of the 650 seats: not just a plurality but an outright majority. The Labour and Liberal Democrat parties meanwhile lost constituencies the polls had predicted they would hold on to or take over.

And then there was Kentucky. This past November, the Republican gubernatorial candidate was Matt Bevin, a venture capitalist whom Mitch McConnell had trounced a year earlier in a Senate primary contest. As of mid-October, Bevin trailed his Democratic opponent, Jack Conway, by 7 points. By Halloween he'd narrowed the gap somewhat but was still expected to lose. At no point was Bevin ahead in The Huffington Post's polling average, and the site said the probability that Conway would beat him was 88 percent. Yet Bevin not only won, he won by a shocking 9-point margin. Pollsters once again had flubbed the call.

Why does this suddenly keep happening? The morning after the U.K. miss, the president of the British online polling outfit YouGov was asked just that. "What seems to have gone wrong," he answered less than satisfactorily, "is that people have said one thing and they did something else in the ballot box."

'To Lose in a Gallup and Win in a Walk'

Until recently, the story of polling seemed to be a tale of continual improvement over time. As technology advanced and our grasp of probability theory matured, the ability to predict the outcome of an election seemed destined to become ever more reliable. In 2012, the poll analyst Nate Silver correctly called the eventual presidential winner in all 50 states and the District of Columbia, besting his performance from four years earlier, when he got 49 states and D.C. right but mispredicted Indiana.

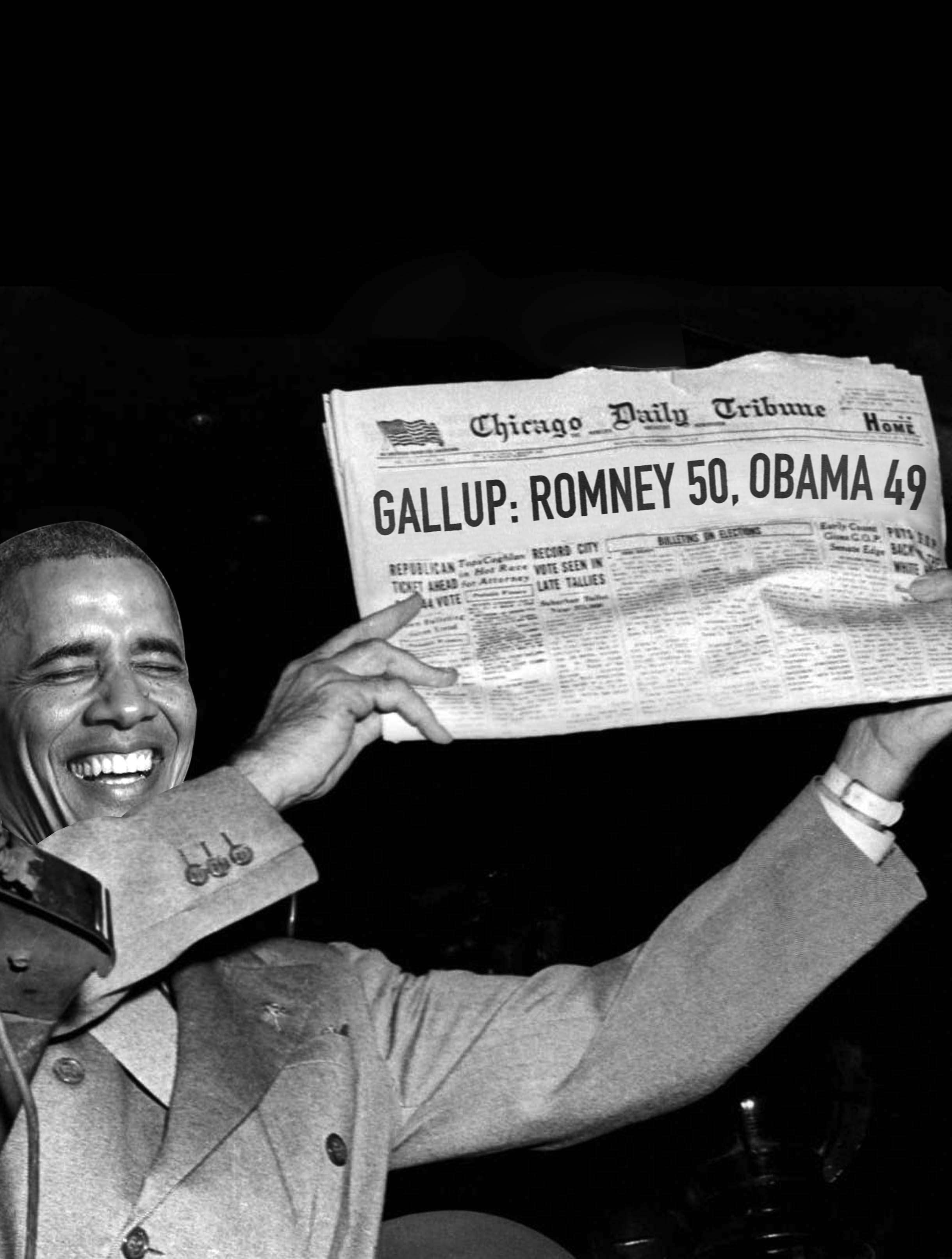

There have been major polling blunders, including the one that led to the ignominious "DEWEY DEFEATS TRUMAN" headline in 1948. But whenever the survey research community has gotten an election wrong, it has responded with redoubled efforts to figure out why and how to do better in the future. Historically, those efforts have been successful.

Until Gallup burst onto the scene, The Literary Digest was America's surveyor of record. The weekly newsmagazine had managed to correctly predict the outcome of the previous four presidential races using the wholly unscientific method of mailing out postcards asking people whom they planned to vote for. The exercise did double duty as both a subscription drive and an opinion poll.

In 1936, some 10 million such postcards were distributed. More than 2 million were completed and returned, an astounding number by the standards of modern survey research, which routinely draws conclusions from fewer than 1,000 interviews. From those responses, the editors estimated that Alfred Landon, Kansas' Republican governor, would receive 57 percent of the popular vote and beat the sitting president, Franklin Delano Roosevelt.

In fact, Roosevelt won the election handily—an outcome predicted, to everyone's great surprise, by a young journalism professor named George Gallup. Recognizing that the magazine's survey methodology was vulnerable to self-selection bias, Gallup set out to correct for it. Among The Literary Digest's respondents in California, for example, 92 percent claimed to be supporting Landon. On Election Day, just 32 percent of ballots cast in the state actually went for the Republican. By employing a quota system to ensure his sample looked demographically similar to the voting population, Gallup got a better read despite hearing from far fewer people.

Though far more scientific than what had come before, the early years of quota-based surveys retained a lot of room for error. It took another embarrassing polling miss for the nascent industry to get behind random sampling, which is the basis for the public opinion research we know today. That failure came in 1948, as another Democratic incumbent battled to hold on to the White House.

In the run-up to the election that year, all the major polls found New York Gov. Thomas Dewey ahead of President Harry Truman. Gallup himself had Dewey at 49.5 percent to Truman's 44.5 percent two weeks out. On the strength of that prediction, though the results were still too close to call, the Chicago Tribune went to press on election night with a headline announcing the Republican challenger's victory. When the dust had cleared, it was Truman who took 49.5 percent of the vote to Dewey's 45.1 percent, becoming, in the immortal words of the radio personality Fred Allen, the first president ever "to lose in a Gallup and win in a walk."

That embarrassment pushed the polling world to adopt more rigorous methodological standards, including a move to "probability sampling," in which every voter—in theory, anyway—has exactly the same chance of being randomly selected to take a survey. The result: more accurate research, at least at the presidential level.

Another boon to the polling industry around this time was the spread of the telephone. As call volumes shot up during and immediately following World War II, thousands of new lines had to be installed to keep up with the rising demand. Although Gallup continued to survey people the old-fashioned way—in person, by sending interviewers door-to-door—new players entered the marketplace and began to take advantage of telephonic communications to reach people more cheaply.

A series of analyses from the National Council on Public Polls (NCPP) provides quantitative evidence that polling got better during this period. Analysts looked at the final survey from each major public-facing outfit before a given presidential election and determined its "candidate error" (loosely, the percentage-point difference between the poll's estimate and the actual vote percentage garnered by a candidate). From 1936 to 1952, the overall average candidate error per cycle ranged from 2.5 to 6 percentage points. Between 1956 and 2008, it ranged from 0.9 to 3.1 percentage points, a significant improvement.
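To make the "candidate error" idea concrete, here is a minimal sketch using the loose definition given above, applied to the 1948 figures quoted earlier in this article. It is an illustration under stated assumptions, not the NCPP's exact procedure: the function names are invented for this example, and the Gallup numbers used are the ones reported two weeks before the election rather than a confirmed final survey.

```python
# Loose "candidate error": the absolute gap, in percentage points, between a
# poll's estimate for a candidate and that candidate's actual vote share.
# Function names are hypothetical; this is not the NCPP's own code or method.

def candidate_errors(poll, actual):
    """Return each candidate's absolute error in percentage points."""
    return {name: abs(poll[name] - actual[name]) for name in poll}


def average_candidate_error(poll, actual):
    """Average the per-candidate errors for a single poll."""
    errors = candidate_errors(poll, actual)
    return sum(errors.values()) / len(errors)


# 1948 figures as quoted in this article. The Gallup numbers date from two
# weeks before Election Day, so treat the result as illustrative only.
gallup_1948 = {"Dewey": 49.5, "Truman": 44.5}
result_1948 = {"Dewey": 45.1, "Truman": 49.5}

for name, err in candidate_errors(gallup_1948, result_1948).items():
    print(f"{name}: off by {err:.1f} points")

avg = average_candidate_error(gallup_1948, result_1948)
print(f"Average candidate error: {avg:.1f} points")
```

On these numbers the poll misses Dewey by 4.4 points and Truman by 5.0, for an average of 4.7 points, which sits inside the 2.5-to-6-point range reported for the 1936 to 1952 cycles.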

Polls were never perfect. Gallup's final survey before the 1976 election had Gerald Ford ahead of the eventual winner, Jimmy Carter. Still, in the post-Dewey/Truman era, pollsters amassed a respectable record that paved the way for Nate Silver to make a name for himself aggregating their data into an extraordinarily powerful predictive model.

Silver's success is achieved not just by averaging poll numbers—something RealClearPolitics was already doing when Silver launched his blog, FiveThirtyEight, in early 2008—but by modifying the raw data in various ways. Results are weighted based on the pollster's track record and how recent the survey is, then fed into a statistical model that takes into account other factors, such as demographic information and trend-line changes in similar states. The payoff is a set of constantly updating, highly accurate state-by-state predictions that took the political world by storm during the 2008 and 2012 elections.
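The weighting step can be sketched in a few lines. What follows is not FiveThirtyEight's model, whose details are not given here; it is a simplified illustration in which every number, the 0-to-1 pollster rating, and the two-week half-life are assumptions chosen for the example.

```python
# Hypothetical polls: (candidate share in %, days before the election,
# pollster rating from 0 to 1). None of these come from real surveys.
polls = [
    (48.0, 2, 0.9),
    (51.0, 10, 0.6),
    (46.0, 30, 0.8),
]

HALF_LIFE_DAYS = 14  # assumption: a poll's influence halves every two weeks


def poll_weight(days_old, rating, half_life=HALF_LIFE_DAYS):
    """Combine recency and track record into a single weight."""
    recency = 0.5 ** (days_old / half_life)  # 1.0 today, 0.5 after one half-life
    return recency * rating


def weighted_average(polls):
    """Weighted mean of the poll shares."""
    weights = [poll_weight(days, rating) for _, days, rating in polls]
    total = sum(w * share for w, (share, _, _) in zip(weights, polls))
    return total / sum(weights)


simple = sum(share for share, _, _ in polls) / len(polls)
print(f"Simple average:   {simple:.2f}")
print(f"Weighted average: {weighted_average(polls):.2f}")
```

The weighted figure leans toward the recent, highly rated poll, while the simple average treats a month-old survey the same as one taken two days out. A full forecasting model would go further and fold in the demographic and trend-line adjustments described above.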

The Seeds of Disaster Ahead

Despite Silver's high-profile triumph in 2012—the morning of the election, his model gave Barack Obama a 90.9 percent chance of winning even as the Mitt Romney campaign was insisting it had the race locked up—that year brought with it some early signs of trouble for the polling industry.

The NCPP's average candidate error was still just 1.5 percentage points for that cycle, meaning most pollsters called the race about right. But Gallup's final pre-election survey showed the former Massachusetts governor squeaking out a win by one percentage point. Polls commissioned by two prominent media entities, NPR and the Associated Press, also put Romney on top (though in all three cases his margin of victory was within the poll's margin of error). In reality, Obama won by four percentage points nationally, capturing 332 electoral votes to his opponent's 206. Like Truman before him, the president had lost "in a Gallup" while easily holding on to the Oval Office in real life. The world's most famous polling company, having performed dead last among public pollsters according to a post-election analysis by FiveThirtyEight, responded by scrapping plans to survey voters ahead of the 2014 midterms and embarking on an extensive postmortem of what had gone wrong.
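For readers wondering why a one-point lead counts as "within the margin of error," a rough calculation helps. The sketch below uses the standard simple-random-sampling formulas at a 95 percent confidence level; the sample size of 1,000 and the 49-48 split are hypothetical stand-ins, not the actual figures from any of the polls mentioned.

```python
import math

Z_95 = 1.96  # critical value for a 95 percent confidence level


def moe_share(p, n, z=Z_95):
    """Margin of error for one candidate's share under simple random sampling."""
    return z * math.sqrt(p * (1 - p) / n)


def moe_lead(p1, p2, n, z=Z_95):
    """Margin of error for the lead p1 - p2 when both shares come from one sample."""
    variance = (p1 + p2 - (p1 - p2) ** 2) / n
    return z * math.sqrt(variance)


# Hypothetical poll: 1,000 respondents and a 49-48 split (a one-point lead).
n = 1000
leader, trailer = 0.49, 0.48

print(f"Margin of error on one share: +/-{100 * moe_share(leader, n):.1f} points")
print(f"Margin of error on the lead:  +/-{100 * moe_lead(leader, trailer, n):.1f} points")
```

Under these assumptions each candidate's share is uncertain by about 3.1 points and the lead by about 6.1, so a one-point edge says very little about who is actually ahead. These formulas also assume a true random sample, which is exactly the assumption that falling response rates call into question.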

Things have only gotten worse for pollsters since 2012. With the misses in the 2014 U.S. midterms, in Israel, in the U.K., and in the 2015 Kentucky governor's race, it's getting hard to escape the conclusion that traditional ways of measuring public opinion can no longer predict outcomes as accurately as they once did.

One reason for this is that prospective voters are much harder to reach than they used to be. For decades, the principal way to poll people has been by calling them at home. Even Gallup, long a holdout, switched to phone interviews in 1988. But landlines, as you may have noticed, have become far less prevalent. In the United States, fixed telephone subscriptions peaked in 2000 at 68 per 100 people, according to the World Bank. Today, we're down to 40 per 100 people, as more and more Americans—especially young ones—choose to live in cell-only households. Meanwhile, Federal Communications Commission regulations make it far more expensive to survey people via mobile phones. On top of that, more people are screening their calls, refusing to answer attempts from unknown numbers.

In addition to being more picky about whom they'll speak to, cellphone users are less likely to qualify for a survey being conducted. In August, I got a call from an interviewer looking for opinions from voters in Florida. That's understandable, since I grew up in Tampa and still have an 813 area code. Since I no longer live in the state, I'm not eligible to vote there, yet some poor polling company had to waste time and money dialing me—probably repeatedly, until I finally picked up—before it found that out.

All these developments have caused response rates to plummet. When I got my first job at a polling firm after grad school, I heard tales of a halcyon era when researchers could expect to complete interviews with 50 percent or more of the people they set out to reach. By 1997, that number had fallen to 36 percent, according to Pew Research Center. Today it's in the single digits.

This overturns the philosophy behind probability polling, which holds that a relatively small number of people can stand in for the full population as long as they are chosen randomly from the larger group. If, on the other hand, the people who actually take the survey are systematically different from those who don't—if they're older, or have a different education level, or for some other reason are more likely to vote a certain way—the methodology breaks down.
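A small simulation illustrates both halves of that argument. Everything in it is invented for the purpose: a hypothetical electorate in which 52 percent back candidate A, and made-up response rates of 12 percent for A's supporters and 8 percent for everyone else.

```python
import random

random.seed(2016)  # fixed seed so the sketch is repeatable

TRUE_SUPPORT = 0.52        # hypothetical share backing candidate A
POPULATION_SIZE = 200_000  # hypothetical electorate

# Build the electorate: True means "supports candidate A".
n_supporters = round(POPULATION_SIZE * TRUE_SUPPORT)
population = [True] * n_supporters + [False] * (POPULATION_SIZE - n_supporters)


def share(sample):
    """Fraction of a sample that supports candidate A."""
    return sum(sample) / len(sample)


# 1) Probability sample: every voter has the same chance of being selected.
random_sample = random.sample(population, 1000)

# 2) Self-selected sample: A's supporters are assumed to respond at a
#    12 percent rate and everyone else at 8 percent.
respondents = [
    voter for voter in population
    if random.random() < (0.12 if voter else 0.08)
]

print(f"True support:          {TRUE_SUPPORT:.1%}")
print(f"Random sample (1,000): {share(random_sample):.1%}")
print(f"Self-selected ({len(respondents):,}): {share(respondents):.1%}")
```

The 1,000-person random sample should land within a few points of the truth, while the far larger self-selected group overstates candidate A's support by roughly 10 points. More responses do not fix a sample that differs systematically from the population, which is the same lesson The Literary Digest learned in 1936.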

A series of Pew Research studies suggests, counterintuitively, that falling response rates may not actually matter. "Telephone surveys that include landlines and cell phones and are weighted to match the demographic composition of the population continue to provide accurate data on most political, social and economic measures," concludes the most recent study, conducted in the first quarter of 2012. "This comports with the consistent record of accuracy achieved by major polls when it comes to estimating election outcomes, among other things." But that was before the streak of missed calls in the four years since. A savvy observer would be right to wonder if people's growing unwillingness to participate in surveys has finally rendered the form inadequate to the task of predicting election outcomes.

Methodological Obstacles Mount

Because it's no longer possible to get a truly random (and therefore representative) sample, pollsters compensate by making complicated statistical adjustments to their data. If you know your poll reached too few young people, you might "weight up" the millennial results you did get. But this involves making some crucial assumptions about how many voters on Election Day will hail from different demographic groups.

We can't know ahead of time what the true electorate will look like, so pollsters have to guess. In 2012, some right-of-center polling outfits posited that young voters and African Americans would be less motivated to turn out than they had been four years earlier. That assumption turned out to be wrong, and their predictions about who would win were wrong along with it. But in the elections since then, many pollsters have made the opposite mistake—underestimating the number of conservatives who would vote and thus concluding that races were closer than they really were.
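Here is a minimal sketch of what "weighting up" looks like in practice, and of how much the answer depends on the turnout guess. The age groups, sample shares, support levels, and both turnout scenarios are hypothetical numbers chosen for illustration, not figures from any real poll.

```python
# Hypothetical poll results by age group: each group's share of the
# respondents and candidate A's support within that group.
sample = {
    "18-29": {"sample_share": 0.10, "support_a": 0.60},
    "30-64": {"sample_share": 0.55, "support_a": 0.48},
    "65+":   {"sample_share": 0.35, "support_a": 0.42},
}


def weighted_support(sample, electorate):
    """Reweight each group to its assumed share of the electorate."""
    estimate = 0.0
    for group, data in sample.items():
        # A weight above 1 "weights up" an underrepresented group.
        weight = electorate[group] / data["sample_share"]
        estimate += weight * data["sample_share"] * data["support_a"]
    return estimate


unweighted = sum(d["sample_share"] * d["support_a"] for d in sample.values())

# Two guesses about who shows up on Election Day (both hypothetical).
high_youth_turnout = {"18-29": 0.19, "30-64": 0.56, "65+": 0.25}
low_youth_turnout = {"18-29": 0.12, "30-64": 0.56, "65+": 0.32}

print(f"Unweighted:          {unweighted:.1%}")
print(f"High youth turnout:  {weighted_support(sample, high_youth_turnout):.1%}")
print(f"Low youth turnout:   {weighted_support(sample, low_youth_turnout):.1%}")
```

The same interviews produce estimates more than a point apart depending on which electorate the pollster assumes. In a close race, that guess can decide which candidate the poll shows in the lead.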

One way pollsters try to figure out who will show up on Election Day is by asking respondents how likely they are to vote. But this ends up being harder than it seems. People, it turns out, are highly unreliable at reporting their future voting behavior. On a 1–9 scale, with 9 being "definitely will," almost everyone will tell you they're an 8 or 9. Many of them are wrong. Maybe they're just overly optimistic about the level of motivation they'll feel on Election Day; maybe they're genuinely planning to vote, but something will come up to prevent them; or maybe they're succumbing to a phenomenon called "social desirability bias," in which people are embarrassed to admit aloud that they won't be doing their civic duty.

Some polling outfits—Gallup foremost among them—have tried to find more sophisticated methods of weeding out nonvoters. For example, they might begin a survey with a battery of questions, like whether the respondent knows where his or her local polling place is. But these efforts have met with mixed results. At the end of the day, correctly predicting an electoral outcome is largely dependent on getting the makeup of the electorate right. That task is much easier stated than achieved.
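A likely-voter screen of this general kind can be sketched as a simple scoring rule. The questions, the cutoff, and the six respondents below are all hypothetical; this is not Gallup's or any other pollster's actual battery.

```python
# Hypothetical respondents. "stated" is the self-reported 1-9 likelihood of
# voting; the other fields are illustrative screening questions only.
respondents = [
    {"choice": "A", "stated": 9, "knows_polling_place": True,  "voted_last_time": True},
    {"choice": "B", "stated": 9, "knows_polling_place": False, "voted_last_time": False},
    {"choice": "A", "stated": 8, "knows_polling_place": True,  "voted_last_time": True},
    {"choice": "B", "stated": 9, "knows_polling_place": True,  "voted_last_time": True},
    {"choice": "B", "stated": 8, "knows_polling_place": False, "voted_last_time": False},
    {"choice": "A", "stated": 9, "knows_polling_place": True,  "voted_last_time": False},
]

CUTOFF = 2  # assumed minimum score to count as a likely voter


def screen_score(r):
    """One point for each sign that the respondent will actually vote."""
    return (
        int(r["stated"] >= 8)
        + int(r["knows_polling_place"])
        + int(r["voted_last_time"])
    )


def support_for(choice, group):
    """Share of a group backing the given candidate."""
    return sum(r["choice"] == choice for r in group) / len(group)


likely_voters = [r for r in respondents if screen_score(r) >= CUTOFF]

print(f"Candidate A among all respondents: {support_for('A', respondents):.0%}")
print(f"Candidate A among likely voters:   {support_for('A', likely_voters):.0%}")
```

Because every respondent here rates themselves an 8 or 9, the self-report alone screens out no one; the extra questions do all the work. Where the cutoff is set is a judgment call, and it moves the topline.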

There's another open question regarding the 2016 race: How will Silver and other election modelers fare without the Gallup numbers to incorporate into their forecasts? There's no shortage of raw data to work with, but not all of it comes from pollsters that abide by gold-star standards and practices. "There's been this explosion of data, because everyone wants to feed the beast and it's very clickable," says Kristen Soltis Anderson, a pollster at Echelon Insights (and my former boss at The Winston Group). "But quantity has come at the expense of quality. And as good research has become more expensive, that's happening at the same time media organizations have less money to spend on polls and want to get more polls out of the limited polling budget that they have."

In service of that bottom line, some outfits experiment with nontraditional methodologies. Public Policy Polling on the left and Rasmussen Reports on the right both use Interactive Voice Response technology to do robo-polling—questionnaires are pre-recorded and respondents' answers are either typed in on their keypads ("Press 99 for 'I don't know'") or registered via speech recognition software. Other companies, such as YouGov, conduct surveys entirely online using non-probabilistic opt-in panels.

Meanwhile, "Gallup uses rigorous polling methodologies. It employs live interviewers; it calls a lot of cell phones; it calls back people who are harder to reach," FiveThirtyEight blogger Harry Enten wrote after the announcement. "Polling consumers are far better off in a world of [Gallups] than in a world of Zogby Internet polls and fly-by-night surveys from pollsters we've never heard of."

As methodological obstacles to good, sound research mount, Enten and Silver have also found evidence of a phenomenon they call "herding." Poll results in recent cycles have tended to converge on a consensus point toward the end, which suggests that some pollsters are tweaking their numbers to look more like everyone else's. When a race includes a "gold standard" survey like Gallup's, the other polls in that race have lower errors. Without Gallup in the mix, less rigorous polling outfits won't have its data to cheat off of.
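One simple way to look for herding is to compare how much a set of polls actually disagree with how much they should disagree from sampling error alone. The sketch below is an assumption-laden illustration, not necessarily the method Enten and Silver used; the poll numbers and the sample size of 800 are invented.

```python
import math
import statistics


def expected_sampling_sd(share, sample_size):
    """Standard deviation of a poll share from sampling error alone, in points."""
    p = share / 100
    return 100 * math.sqrt(p * (1 - p) / sample_size)


def spread_ratio(poll_shares, sample_size):
    """Observed spread across polls divided by the spread chance alone predicts."""
    observed = statistics.stdev(poll_shares)
    expected = expected_sampling_sd(statistics.mean(poll_shares), sample_size)
    return observed / expected


# Hypothetical final-week polls of one race, each with 800 respondents.
independent_looking = [44.0, 47.5, 46.0, 49.0, 45.0, 48.5]
tightly_clustered = [46.5, 47.0, 46.8, 47.2, 46.9, 47.1]

print(f"Independent-looking polls: ratio {spread_ratio(independent_looking, 800):.2f}")
print(f"Tightly clustered polls:   ratio {spread_ratio(tightly_clustered, 800):.2f}")
```

A ratio near 1 is what honest, independent sampling tends to produce. A ratio far below 1 means the polls agree with one another more closely than random chance would allow, which is a warning sign that numbers may have been nudged toward the consensus.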

All of which spells trouble for the ability of anyone to predict what's going to happen next November 8. The industry's track record since 2012 should make us think twice about putting too much stock in what the horse-race numbers are saying. At best, all a poll can know is what would happen if the election were held today. And there's no guarantee anymore that the polls are even getting that right.

The Future of Polling Is All of the Above

As good polls became harder and costlier to conduct, academics and politicos alike began to wonder whether there might be a better approach, one that eschews the interview altogether. Following the 2008 election, a flurry of papers suggested that the opinions people voluntarily put onto social media platforms, Twitter in particular, could be used to predict real-world outcomes.

"Can we analyze publicly available data to infer population attitudes in the same manner that public opinion pollsters query a population?" asked four researchers from Carnegie Mellon University in 2010. Their answer, in many cases, was yes. A "relatively simple sentiment detector based on Twitter data replicates consumer confidence and presidential job approval polls," their paper, from the 2010 Proceedings of the International Association for the Advancement of Artificial Intelligence Conference on Weblogs and Social Media, concluded. That same year Andranik Tumasjan and his colleagues from the Technische Universität München made the even bolder claim that "the mere number of [Twitter] messages mentioning a party reflects the election result."

A belief that traditional survey research was no longer going to be able to stand alone prompted Anderson to begin searching for ways to bring new sources of data into the picture. In 2014, she partnered with the Republican digital strategist Patrick Ruffini to launch Echelon Insights, a "political intelligence" firm.

"We realized that, for regulatory and legal reasons, for cultural and technological reasons, the normal, traditional way we've done research for the last few decades is becoming obsolete," Anderson explains. "The good news is, at the same time people are less likely to pick up the phone and tell you what they think, we are more able to capture the opinions and behaviors that people give off passively. Whether through analyzing the things people tweet, analyzing the things we tell the Google search bar—the modern-day confessional—all this information is out there…and can be analyzed to give us additional clues about public opinion."

But as quickly as some people began hypothesizing that social media could replace polling, others began throwing cold water on the idea. In a paper presented at the 2011 Institute of Electrical and Electronics Engineers Conference on Social Computing, some scholars compared social media analytics with the outcomes of several recent House and Senate races. They found that "electoral predictions using the published research methods on Twitter data are not better than chance."

One of that study's co-authors, Daniel Gayo-Avello of the University of Oviedo in Spain, has gone on to publish extensive critiques of the social-media soothsayers, including a 2012 article bluntly titled "No, You Cannot Predict Elections with Twitter." Among other issues, he noted that studies that fail to find the desired result often never see the light of day—the infamous "file-drawer effect." This, he said, creates an erroneous bias toward positive findings in scientific publications.

The biggest problem with trying to use social media analysis in place of survey research is that the people who are active on those platforms aren't necessarily representative of the ones who aren't. Moreover, there's no limit on the amount one user can participate in the conversation, meaning a small number of highly vocal individuals can (and do) dominate the flow. A study in the December 2015 Social Science Computer Review found that the people who discuss politics on Twitter overwhelmingly are male, live in urban areas, and have extreme ideological preferences. In other words, social media analyses suffer from the same self-selection bias that derailed The Literary Digest's polling project almost 80 years ago: Anyone can engage in online discourse, but not everyone chooses to.
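The volume problem is easy to demonstrate. In the sketch below, the tweets, user names, and party labels are all invented; it compares a raw count of mentions with a count that lets each user speak only once per party.

```python
from collections import Counter

# Hypothetical tweets as (user, party mentioned). All data is invented.
tweets = [
    ("user_1", "Party X"), ("user_1", "Party X"), ("user_1", "Party X"),
    ("user_1", "Party X"), ("user_1", "Party X"), ("user_1", "Party X"),
    ("user_2", "Party Y"), ("user_3", "Party Y"), ("user_4", "Party Y"),
    ("user_5", "Party X"),
]


def mention_share(pairs):
    """Each party's share of the (user, party) pairs provided."""
    counts = Counter(party for _, party in pairs)
    total = sum(counts.values())
    return {party: count / total for party, count in counts.items()}


raw = mention_share(tweets)            # every tweet counts
per_user = mention_share(set(tweets))  # each user counts once per party

print(f"Party X, raw mention share: {raw['Party X']:.0%}")
print(f"Party X, one voice per user: {per_user['Party X']:.0%}")
```

One prolific account turns a 40 percent share among users into a 70 percent share of the conversation. Deduplicating helps with volume, but it does nothing about the deeper issue that the five users may not resemble the electorate at all.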

Another concern is that most social media analysis relies on sentiment analysis, meaning it uses language processing software to figure out which tweets are relevant, and then—this is key—to accurately code them as positive or negative. Yet in 2014, when PRWeek magazine tried to test five companies that claimed they could measure social media sentiment, the results were disappointing. "Three of the five agencies approached by PRWeek that initially agreed to take part later backed down," the article reads. "They pointed to difficulties such as weeding out sarcasm and dealing with ambiguity. One agency said that while it was easy to search for simple descriptions like 'great' or 'poor', a phrase like 'it was not great' would count as a positive if there was no advanced search facility to scan words around key adjectives. Such a system is understood to be in the development stage, the agency said."
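The "it was not great" problem described by that agency can be reproduced in a few lines. This is a toy sketch with tiny, made-up word lists and an assumed two-word look-back window; it is not how any commercial sentiment product works.

```python
# Tiny illustrative word lists; real systems use far larger lexicons or
# trained models.
POSITIVE = {"great", "good"}
NEGATIVE = {"poor", "bad"}
NEGATORS = {"not", "never", "no"}


def tokenize(text):
    """Lowercase the text and strip basic punctuation from each word."""
    return [w.strip(".,!?'\"").lower() for w in text.split()]


def naive_sentiment(text):
    """Count positive words minus negative words, ignoring context."""
    words = tokenize(text)
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def negation_aware_sentiment(text, window=2):
    """Flip a word's polarity if a negator appears within `window` words before it."""
    words = tokenize(text)
    score = 0
    for i, word in enumerate(words):
        polarity = (word in POSITIVE) - (word in NEGATIVE)
        if polarity and any(w in NEGATORS for w in words[max(0, i - window):i]):
            polarity = -polarity
        score += polarity
    return score


phrase = "It was not great"
print(f"Naive score:          {naive_sentiment(phrase)}")
print(f"Negation-aware score: {negation_aware_sentiment(phrase)}")
```

The keyword counter scores the phrase as positive (+1), while scanning the words around the adjective correctly flips it to negative (-1). Sarcasm and ambiguity, the other difficulties the agencies cited, would still defeat both versions.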

Even if we're able to crack the code of sentiment analysis, it may not be enough to render the fire hose of social media content valuable for election-predicting purposes. After more than 12 months of testing Crimson Hexagon's trainable coding software, which "classifies online content by identifying statistical patterns in words," Pew Research Center concluded that it achieves 85 percent accuracy at differentiating between positive, negative, neutral, and irrelevant sentiments. Yet Pew also found that the reaction on Twitter to current events often differs greatly from public opinion as measured by statistically rigorous surveys.

"In some instances, the Twitter reaction was more pro-Democratic or liberal than the balance of public opinion," Pew reported. "For instance, when a federal court ruled last February that a California law banning same-sex marriage was unconstitutional…Twitter conversations about the ruling were much more positive than negative (46% vs. 8%). But public opinion, as measured in a national poll, ran the other direction." Yet in other cases, the sentiment on Twitter skewed to the political right, making it difficult if not impossible to correct for the error with weighting or other statistical tricks.

At Echelon Insights, Ruffini and Anderson have come to roughly the same conclusion. When I asked if it's possible to figure out who the Republican nominee will be solely from the "ambient noise" of social media, they both said no. But that doesn't mean you can't look at what's happening online, and at what volume, to get a sense of where the public is on various political issues.

"It's easier for us to say 'Lots more people are talking about trade' than it is for us to make a more subjective judgment about whether people are talking more positively about trade," Anderson tells me. "But because of the way a lot of these political debates break down, by knowing who's talking about a topic, we can infer how that debate is playing out."

So what is the future of public opinion research? For the Echelon team, it's a mixture of traditional and nontraditional methods—an "all-of-the-above" approach. Twitter data isn't the Holy Grail of election predictions, but it can still be extraordinarily useful for painting a richer and more textured picture of where the public stands.

"It's just another data point," says Ruffini. "You have the polls and you have the social data, and you have to learn to triangulate between the two."

This article originally appeared in print under the headline "Why Polls Don't Work."