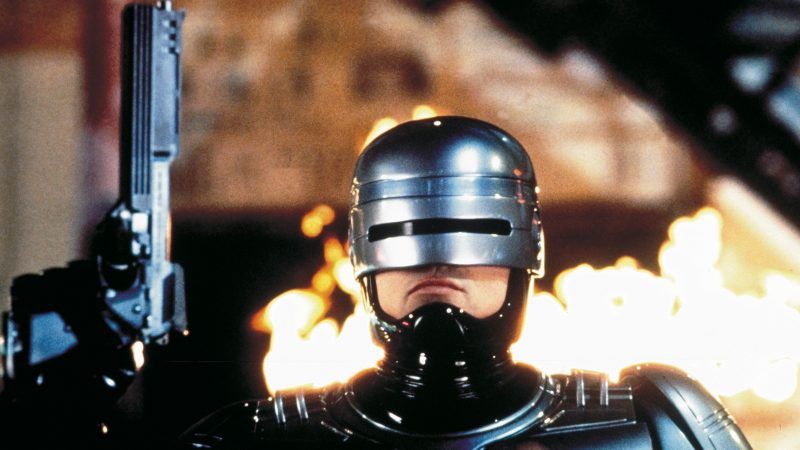

Facial Recognition Tech Straight Out of 'Robocop' Could Be a Real Threat to Civil Liberties

As governments and law enforcement agencies rush to incorporate facial recognition tech, California lawmakers have a chance to slam on the brakes.

Nearly a decade ago, the spokesman for a company that produces Tasers and body cameras for police departments envisioned the day when "every cop is Robocop." He was referring both to then-nascent facial-recognition software, which lets police nab suspects based on an image grabbed from a camera, and to a science-fiction movie about crime-fighting cyborg cops.

Technology advances so rapidly that this day has already arrived in authoritarian China. Facial recognition is also gaining a foothold within American law-enforcement agencies that use body cameras. Such cameras have become ubiquitous for good reason. By recording police interactions with the public, they provide a record of what took place. They are a tool for improving accountability and building community trust.

But police departments—spurred by tech companies that might make a fortune selling high-technology products to government agencies—are turning this public-spirited tool into a means of constant surveillance. Evolving software applications will let police record every encounter and match up a citizen's face with a database, thus enabling an officer to make an arrest or call in a SWAT team based solely on an algorithm.

There are myriad problems here. But it's best to start with that "Robocop" analogy. The 1987 blockbuster pointed to a terrifying future where a nefarious corporation takes over policing in gang-infested Detroit. Its droid gruesomely kills an innocent person. The company then relies on the half-man, half-machine Robocop. The movie takes swipes at corruption, privatization and authoritarianism, but ultimately is about the triumph of humanity over machinery.

Civil-liberties concerns have driven California lawmakers to consider Assembly Bill 1215, which would ban police agencies from using facial and biometric tracking devices as part of their body cameras.

"Having every patrol officer constantly scanning faces of everyone that walks into their field of view to identify people, run their records, and record their location and activities is positively Orwellian," said ACLU attorney Peter Bibring.

This technology is creepy, especially when one considers the next step that's under active development: Tying facial-recognition software into security cameras that are practically everywhere. The bill's author, Assemblyman Phil Ting (D–San Francisco), points to an incident in China where the authorities used recognition software to grab someone from a crowd of 20,000 people during a concert.

Opponents of the ban naively insist that there's no difference between using such software and looking at a mugshot—and that police are still required to follow the Constitution's Fourth Amendment restraints on unreasonable searches. That's nonsensical. Police admit that they want to use these cameras as part of wholesale dragnets, by scanning everyone at public events and not only those that they suspect of having committed a crime.

In its official opposition to the bill, the Riverside Sheriffs' Association argues that, "Huge events…and scores of popular tourist attractions should have access to the best available security—including the use of body cameras and facial-recognition technology." There you have it. The goal of police is to scan our faces at every event.

This is far more intrusive than those checkpoints in totalitarian countries where people must constantly show their papers. In this emerging Robocop world, every American will always be identifiable to the authorities simply by walking around in public. If your face alarms the software, the police will get you.

There are many practical concerns, as well. Let's say I get pulled over for driving 75 MPH on the freeway and the officer approaches my vehicle. His body camera scans my face and compares it to tens of thousands of photos of older, bald, overweight white guys. It triggers a match and instantly the officer draws his gun or calls for backup. In other words, this software can turn a simple stop into a potentially dangerous situation.

That's of particular concern given how inaccurate facial-recognition software can be. "Facial recognition technology has misidentified members of the public as potential criminals in 96 per cent of scans so far in London, new figures reveal," according to a May report in the Independent. A Commerce Department study found a high rate of accuracy, but misidentification still was common. How would you like to be wrongly tagged as a gang-banger?

According to the Assembly analysis, the ACLU used such software to compare photos of all federal legislators against mug shots, and it "incorrectly matched 28 members of Congress with people who had been arrested. The test disproportionately misidentified African-American and Latino members of Congress as the people in mug shots." The company that produced the software disputed the ACLU's approach, but this is disturbing, especially in terms of racial bias.

Republicans often complain about big government and creeping socialism, yet the only Republican to thus far support this bill is state Assemblyman Tyler Diep (R–Orange County), who was born in communist Vietnam. Good for him. "Robocop" provided dystopian warnings about the creation of a police state. It wasn't a model for a free society.

This column was first published in the Orange County Register.