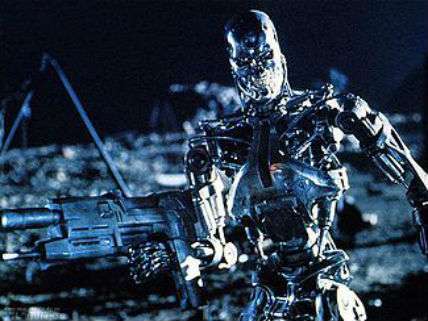

Killer Robots: Protectors of Human Rights?

Why a ban on the development of lethal autonomous weapons now is premature.

"States should adopt an international, legally binding instrument that prohibits the development, production, and use of fully autonomous weapons," declared Human Rights Watch (HRW) and Harvard Law School's International Human Rights Clinic (IHRC) in an April statement. The two groups issued their report, "Killer Robots and the Concept of Meaningful Human Control," as experts in weapons and international human rights met in Geneva to consider what should be done about lethal autonomous weapon systems (LAWS). It was their third meeting, conducted under the auspices of the Convention on Conventional Weapons.

What is a lethal autonomous weapons system? Characterizations vary, but the U.S. definition provides a good starting point: "A weapon system that, once activated, can select and engage targets without further intervention by a human operator." Weapons system experts typically distinguish among technologies where there is a "human in the loop" (semi-autonomous systems where a human being controls the technology as it operates), a "human on the loop" (human-supervised autonomous systems where a person can intervene and alter or terminate operations), or a "human out of the loop" (fully autonomous systems that operate independently of human control).

The authors of that April statement want to ban fully autonomous systems, because they believe a requirement to maintain human control over the use of weapons is needed to "protect the dignity of human life, facilitate compliance with international humanitarian and human rights law, and promote accountability for unlawful acts."

HRW and the IHRC argue that killer robots would necessarily "deprive people of their inherent dignity." The core argument here is that inanimate machines cannot understand the value of individual life and the significance of its loss, whereas soldiers can weigh "ethical and unquantifiable factors" when making such decisions. In addition, HRW and the IHRC believe that LAWS could not comply with the requirements of international human rights law, specifically the obligations to use force proportionally and to distinguish civilians from combatants. They further claim that killer robots, unlike soldiers and their commanders, could not be held accountable and punished for illegal acts.

Yet it may well be the case that killer robots could better protect human rights during combat than soldiers using conventional weapons do now, according to Temple University law professor Duncan Hollis.

Hollis notes that under international human rights law, states must conduct a legal review to ensure that any armaments, including autonomous lethal weapons, are not unlawful per se—that is, they are neither indiscriminate nor the cause of unnecessary suffering. To be lawful, a weapon must be capable of distinguishing between civilians and combatants. Also, it must not by its very nature cause unnecessary suffering or superfluous injury. A weapon would also be unlawful if its deleterious effects cannot be controlled.

Just such considerations have persuaded most governments to sign treaties outlawing the use of such indiscriminate, needlessly cruel, and uncontrolled weapons as antipersonnel land mines and chemical and biological agents. If killer robots could better discriminate between combatants and civilians and reduce the amount of suffering experienced by people caught up in battle, then they would not be per se illegal.

Could killer robots meet these international human rights standards? Ronald Arkin, a roboticist at the Georgia Institute of Technology, thinks they could. In fact, he argues that LAWS could have significant ethical advantages over human combatants. For example, killer robots do not need to protect themselves, and so could refrain from striking when in doubt about whether a target is a civilian or a combatant. Warbots, Arkin contends, could assume "far more risk on behalf of noncombatants than human warfighters are capable of, to assess hostility and hostile intent, while assuming a 'First do no harm' rather than 'Shoot first and ask questions later' stance."

LAWS, he suggests, would also employ superior sensor arrays, enabling them to make better battlefield observations. They would not make errors based on emotions—unlike soldiers, who experience fear, fatigue, and anger. They could integrate and evaluate far more information faster in real time than could human soldiers. And they could objectively monitor the ethical behavior of all parties on the battlefield and report any infractions.

Under Additional Protocol I to the Geneva Conventions, the principle of proportionality prohibits "an attack which may be expected to cause incidental loss of civilian life, injury to civilians, damage to civilian objects, or a combination thereof, which would be excessive in relation to the concrete and direct military advantage anticipated." Human soldiers may take actions where they knowingly risk, but do not intend, harm to noncombatants. To meet the requirement of proportionality, autonomous weapons could be designed to be conservative in their targeting choices: when in doubt, don't attack.

In justifying their call for a ban, HRW and the IHRC argue that soulless warbots cannot be held responsible for their actions, creating a morally unbridgeable "accountability gap." Under the current laws of warfare, a commander is held responsible for an unreasonable failure to prevent a subordinate's violations of international human rights law. The organizations that oppose the deployment of autonomous weapons argue that a commander or operator of a LAWS "could not be held directly liable for a fully autonomous weapon's unlawful actions because the robot would have operated independently." Hollis counters that since states and the people who represent them are supposed to be held accountable when the armed forces they command commit war crimes, they could similarly be held accountable for unleashing robots that violate human rights.

Peter Margulies, a professor of law at Roger Williams University, makes a similar argument. Holding commanders responsible for the actions of lethal autonomous weapons systems, he writes, is "a logical refinement of current law, since it imposes liability on an individual with power and access to information who benefits most concretely from the [system's] capabilities in war-fighting."

To augment command responsibility for warbots' possible human rights infractions, Margulies suggests that states and militaries create a separate lethal autonomous weapons command. The officers who head this dedicated command would be required to have a deep understanding of the limitations of the killer robots under their control. In ambiguous situations, killer robots should also be able to request that their human commanders review their proposed targets.

"The status quo is unacceptable with respect to noncombatant deaths," Arkin argues cogently. "It may be possible to save noncombatant lives through the use of this technology—if done correctly—and these efforts should not be prematurely terminated by a preemptive ban."