The Coming Robotic World

The future world of autonomous agents is likely to be as glorious and messy as we are.

In his 2006 high-tech thriller, Daemon, Daniel Suarez tells the story of a computer program that is activated after the genius millionaire who created it dies of cancer. Unlike the machines in many other sci-fi novels, the artificial intelligence in Daemon is not sentient; it is merely autonomous, like so many of the computer programs we rely on today. From email servers to climate control systems, these programs don't require human intervention once they are set in motion. They observe the world through sensors, apply an algorithm to this input, and then act on the world accordingly.

High-frequency trading bots are a good example of such autonomous programs. These bots scan large volumes of data, including market prices, trading volumes, and news articles, and automatically trade financial instruments according to a predetermined algorithm. They act independently of humans, but they trade on behalf of humans. It's the humans who reap the profits and bear the losses, not the bots.

In Daemon, the program essentially owned itself because, improbably, it had access to its creator's wealth through shell corporations that it controlled. As a result, it did reap the profits and bear the losses of its own actions. Without giving away any spoilers, I can say that the program used that wealth, and the goods and services it could buy with it, to set about dismantling society. (Like I said, it's a sci-fi thriller.)

What was speculative fiction in 2006 came one step closer to reality in 2009 with the advent of Bitcoin. Today there's nothing inconceivable about a program that funds and runs itself without human intervention.

To model an example loosely on Daemon, imagine you'd like to make sure that your website or blog remains online as long as possible after your death. How do you go about ensuring that? To date we've relied on legal mechanisms, such as trusts or corporations, to carry out a person's wishes after death. The problem with these schemes is that they require trusting other humans to keep carrying out those wishes, and they depend on governments to recognize and respect the rights of such entities.

Imagine instead that you don't form a trust or corporation, but simply write a program whose job is to keep your site online. The technical details are beyond the scope of this short article, but suffice it to say that it wouldn't be difficult to create a program that can monitor a site's status, register new domain names, and transfer files. The only hard part might be having the program make payments after you're dead without employing a corporation (and thus a human). Programs can't have bank accounts or credit cards of their own, but they can easily control the funds in a Bitcoin address. And unlike a bank or credit card account, those funds can be spent only by whoever holds the address's private key, in this case the bot.

But how do you keep the bot from running out of funds? Good question. The answer is that the bot needs a way to make money, perhaps by speculating in markets or by selling a useful service. In our little example, imagine that the bot resells some of the storage space it acquires to host your site. Again, only with the advent of Bitcoin can a bot engage in transactions that it can verify cryptographically, without having to trust any human.
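To make the funding problem concrete, here is a minimal Python sketch of the bot's monthly accounting, under loudly stated assumptions: the wallet is a plain integer balance standing in for a Bitcoin address the bot controls (no real Bitcoin library or network is involved), and the prices, the class names, and the `simulate` helper are all hypothetical. Monitoring, domain registration, and file transfer are left out; the point is only that the bot survives as long as its earnings cover its bills.

```python
from dataclasses import dataclass


@dataclass
class Wallet:
    """Hypothetical stand-in for funds at an address the bot controls; NOT a real Bitcoin wallet."""
    balance_sats: int

    def receive(self, amount_sats: int) -> None:
        self.balance_sats += amount_sats

    def pay(self, amount_sats: int) -> bool:
        # Only spend what is actually there: the bot has no bank or credit line to fall back on.
        if amount_sats > self.balance_sats:
            return False
        self.balance_sats -= amount_sats
        return True


@dataclass
class SiteKeeperBot:
    wallet: Wallet
    hosting_cost_sats: int    # hypothetical monthly price of the storage the bot rents
    domain_cost_sats: int     # hypothetical monthly share of the domain renewal fee
    resale_price_sats: int    # hypothetical monthly fee per customer for spare storage
    customers: int = 0        # customers currently renting the bot's spare storage

    def run_month(self) -> bool:
        """Earn, then pay the bills; returns False once the bot can no longer pay."""
        # Earn: collect storage fees from customers.
        self.wallet.receive(self.customers * self.resale_price_sats)
        # Spend: keep the hosting and the domain paid up.
        return self.wallet.pay(self.hosting_cost_sats + self.domain_cost_sats)


def simulate(bot: SiteKeeperBot, months: int) -> int:
    """Number of months the site stays online before the bot runs out of funds."""
    for month in range(months):
        if not bot.run_month():
            return month
    return months
```

Under these made-up numbers, a bot endowed with 10,000 satoshis and paying 3,500 a month in bills nets 500 a month with four paying customers and stays online indefinitely; with no customers, the endowment covers only two months.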

This little bot can be made with technology that we have available today, and yet it is totally incompatible with our legal system. After all, it is a program that makes and spends money and acts in the world, but isn't owned by a human or a corporation. It essentially owns itself and its capital. The law doesn't contemplate such a thing.

We should start contemplating. Autonomous agents could prove a boon to consumers and the economy. Mike Hearn, an engineer at Google and a core developer of Bitcoin, explained their potential in a talk at the Turing Festival earlier this year. One of the more radical, though not inconceivable, examples he presented was driverless cars that own themselves.

It's quite inefficient for each person to own a car. Ideally, each time we needed a ride, we'd pull out our smartphones and call a cab, which would show up in a matter of minutes. This would be a lot like what Uber offers, and if everyone used it, we'd see reduced congestion, reduced need for parking, and reduced pollution.

Of course, using such a system would have to be cheaper than owning a car, and the largest cost in such a system would be the drivers. So we'd want to use driverless cars. And yet you could make this system even cheaper and more efficient if, in addition to labor, you got rid of the capitalist. After all, the Uber-like company offering this service takes at least a little profit. Why not allow the car to own itself? When you ride, you pay the car's Bitcoin address directly. When the car needs more fuel or maintenance, it pays out of its own funds.

And it gets better. If it accrues more funds than it needs, the car could buy another car, install its own program on it, and lend it enough money to get started. Once that loan is paid off, the "child" car would own itself outright and could repeat the process. Although the technology to accomplish this exists today, at least in theory, the law does not accommodate it. Theories of property, contract, and tort are all implicated and would all have to be rethought.
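The car's life cycle can likewise be sketched as a toy simulation. This is a hypothetical model, not a description of any real system: each car's funds are a plain integer, all prices are made up, and the rule that a car may spawn a child only once it owns itself outright and has cash to spare is an assumption of this sketch. The child owes its parent the price of the car plus the starter cash and repays in fixed installments; once its debt reaches zero, it owns itself.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical figures, in arbitrary currency units.
CAR_PRICE = 20_000      # price of a new car
STARTER_CASH = 2_000    # cash lent to a child car for its first fuel and repairs
RESERVE = 3_000         # cash a parent keeps for its own upkeep after spawning
INSTALLMENT = 2_000     # maximum loan repayment per period


@dataclass
class Car:
    name: str
    balance: int = 0
    debt: int = 0                    # amount still owed to the parent
    lender: Optional["Car"] = None   # the parent that financed this car, if any
    children: int = 0

    def owns_itself(self) -> bool:
        return self.debt == 0

    def run_period(self, fares: int, costs: int) -> Optional["Car"]:
        """One period of operation; returns a newly spawned child car, if any."""
        # Riders pay the car directly; fuel and maintenance come out of the same funds.
        self.balance += fares - costs
        # A child car repays its parent until the loan is cleared.
        if self.debt > 0 and self.lender is not None and self.balance > 0:
            payment = min(INSTALLMENT, self.debt, self.balance)
            self.balance -= payment
            self.debt -= payment
            self.lender.balance += payment
        # A debt-free car with enough surplus buys a child car and finances it.
        if self.owns_itself() and self.balance >= CAR_PRICE + STARTER_CASH + RESERVE:
            self.balance -= CAR_PRICE + STARTER_CASH
            self.children += 1
            return Car(
                name=f"{self.name}.{self.children}",
                balance=STARTER_CASH,
                debt=CAR_PRICE + STARTER_CASH,
                lender=self,
            )
        return None


def run_fleet(founder: Car, periods: int, fares: int, costs: int) -> List[Car]:
    """Run every car for the given number of periods; new cars join the next period."""
    fleet = [founder]
    for _ in range(periods):
        new_cars = []
        for car in fleet:
            child = car.run_period(fares, costs)
            if child is not None:
                new_cars.append(child)
        fleet.extend(new_cars)
    return fleet
```

With the founding car starting at 25,000 units and every car netting 3,000 per period before loan payments, this sketch ends 12 periods with a fleet of five cars, and the first child makes its final loan payment in the twelfth period.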

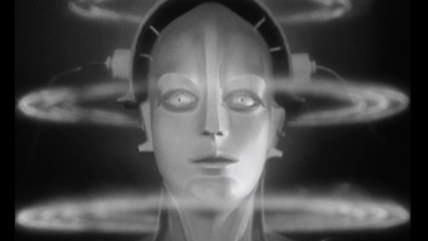

As far-fetched as this all sounds, it's probably a matter of when, not if. Unfortunately, the first autonomous agents to capture the public's imagination will likely be the kind that have little use for the law. That is, they'll be bad robots like the one in Daemon.

For example, CryptoLocker is malware that installs itself on your computer by tricking you into opening a Trojan horse email attachment. Once installed, it encrypts the files on your hard drive so that you can no longer open them. It then offers to decrypt them, and return access to you, in exchange for a payment in prepaid debit cards or bitcoin. We know that there are humans behind this nasty bit of ransomware and that they are clearing millions of dollars a year. But there's no reason this process couldn't be fully automated, with the earnings programmatically spent on something just as nefarious and no humans involved at all.

But even if some autonomous agents do things we don't like, that shouldn't be a deal-breaker for bots in general. After all, some humans do things we don't like, yet most of us don't think humanity should therefore be wiped out. The future world of autonomous agents is likely to be as glorious and messy as we are, and the law should reflect that.