When Cancer Was Conquerable

To win the war on cancer, we must recapture the bold spirit of the early days of discovery.

The first attempt to treat cancer in humans with chemotherapy happened within days of doctors realizing that it reduced the size of tumors in mice.

The year was 1942, and we were at war. Yale pharmacologist Alfred Gilman was serving as chief of the pharmacology section in the Army Medical Division at Edgewood Arsenal, Maryland, working on developing antidotes to nerve gases and other chemical weapons the Army feared would be used against American troops.

After a few months of researching mustard gas in mice, Gilman and his collaborator, Louis S. Goodman, noticed that the poison also caused a regression of cancer in the rodents. Just a few days later, they persuaded a professor of surgery at Yale to run a clinical trial on a patient with terminal cancer; within 48 hours, the patient's tumors had receded.

In 1971, three decades after Gilman's discovery, the U.S. government declared a "war on cancer." Since then, we have spent nearly $200 billion in federal money on research to defeat the disease. But we haven't gotten much bang for our buck: Cancer deaths have fallen by a total of just 5 percent since 1950. (In comparison, heart disease deaths are a third of what they were then, thanks to innovations like statins, stents, and bypass surgery.) The American Cancer Society estimates that more than 600,000 Americans die of cancer annually; 33 percent of those diagnosed will be dead within five years.

Chemotherapy drugs remain the most common treatments for cancer, and most of them were developed before the federal effort ramped up. Out of 44 such drugs used in the U.S. today, more than half were approved prior to 1980. It currently takes 10–15 years and hundreds of millions of dollars for a drug to go from basic research to human clinical trials, according to a 2009 report funded by the National Institutes of Health (NIH). It is now nearly impossible to conceive of going from a eureka moment to human testing in a few years, much less a few days.

Beating cancer is not a lost cause. But if we're going to break new ground, we need to recapture the urgency that characterized the work of pioneers like Gilman and Goodman. And in order to do that, we need to understand how we managed to turn the fight against humanity's most pernicious pathology into a lethargic slog.

Fast, Efficient, and Effective

Look at the history of chemotherapy research and you'll find a very different world than the one that characterizes cancer research today: fast bench-to-bedside drug development; courageous, even reckless researchers willing to experiment with deadly drugs on amenable patients; and centralized, interdisciplinary research efforts. Cancer research was much more like a war effort before the feds officially declared war on it.

One reason that's true is that research on chemotherapy started as a top-secret military project. Medical records never mentioned nitrogen mustard by name, for example—it was referred to only by its Army code name, "Substance X." By 1948, close to 150 patients with terminal blood cancers had been treated with a substance most Americans knew of only as a battlefield killer. After World War II, Sloan Kettering Institute Director Cornelius "Dusty" Rhoads recruited "nearly the entire program and staff of the Chemical Warfare Service" into the hospital's cancer drug development program, former National Cancer Institute (NCI) Director Vincent DeVita recalled in 2008 in the pages of the journal Cancer Research.

Researchers turned swords into ploughshares, and they did it quickly. In February 1948, Sidney Farber, a pathologist at Harvard Medical School, began experiments with the antifolate drug aminopterin. This early chemotherapy drug, and its successor methotrexate, had been synthesized by Yellapragada Subbarow, an Indian chemist who led the research program at Lederle Labs, along with his colleague Harriet Kiltie. Using their compounds, Farber and his team produced the first leukemia remissions in children in June 1948.

In a July 1951 paper, Jane C. Wright, an African-American surgeon, reported she had extended the successes of methotrexate from blood to solid cancers, achieving regressions in breast and prostate tumors by using the substance.

Chemist Gertrude Elion, who'd joined Wellcome Labs in 1944 despite being too poor to afford graduate school, quickly developed a new class of chemotherapy drugs—2,6-diaminopurine in 1948 and 6-mercaptopurine in 1951—for which she and George H. Hitchings would later win the Nobel Prize.

In 1952, Sloan Kettering's Rhoads was running clinical trials using Elion's drugs to treat leukemia. After popular columnist Walter Winchell reported on the near-miraculous results, public demand for 6-mercaptopurine forced the Food and Drug Administration (FDA) to expedite its approval. The treatment was on the market by 1953.

Notice how fast these researchers were moving: The whole cycle, from no chemotherapies at all to development, trial, and FDA approval for multiple chemotherapy drugs, took just six years. Modern developments, by contrast, can take decades to get to market. Adoptive cell transfer—the technique of using immune cells to fight cancer—was first found to produce tumor regressions in 1985, yet the first such treatments, marketed as Kymriah and Yescarta, were not approved by the FDA until 2017. That's a 32-year lag, more than five times slower than the early treatments.

Despite the pace of progress in the 1940s, researchers had only scratched the surface. As of the early 1950s, cancer remissions were generally short-lived and chemotherapy was still regarded with skepticism. As DeVita observed in his Cancer Research retrospective, chemotherapists in the 1960s were called the "lunatic fringe." Doctors scoffed at George Washington University cancer researcher Louis Alpert, referring to "Louis the Hawk and his poisons." Paul Calabresi, a distinguished professor at Yale, was fired for doing too much early testing of new anti-cancer drugs.

"It took plain old courage to be a chemotherapist in the 1960s," DeVita said in a 2008 public radio interview, "and certainly the courage of the conviction that cancer would eventually succumb to drugs."

The first real cure due to chemotherapy was of choriocarcinoma, the cancer of the placenta. And yet Min Chiu Li—the Chinese-born NCI oncologist who discovered in 1955 that methotrexate could produce permanent remissions in pregnant women—was fired in 1957. His superiors thought he was inappropriately experimenting on patients and unnecessarily poisoning them.

But early chemotherapists were willing to bet their careers and reputations on the success of the new drugs. They developed a culture that rewarded boldness over credentialism and pedigree—which may be why so many of the founders of the field were women, immigrants, and people of color at a time when the phrase affirmative action had yet to be coined. Gertrude Elion, who didn't even have a Ph.D., synthesized six drugs that would later find a place on the World Health Organization's list of essential medicines.

"Our educational system, as you know, is regimenting, not mind-expanding," said Emil Freireich, another chemotherapy pioneer, in a 2001 interview at the MD Anderson Cancer Center. "So I'd spent all my life being told what I should do next. And I came to the NIH and people said, 'Do what you want.'…What came out of that environment was attracting people who were adventurous, because you don't do what I did if you're not adventurous. I could have gone into the military and been a captain and gone into practice. But this looked like a challenge."

Chemotherapy became a truly viable treatment option in the late 1960s, with the introduction of combination chemotherapy and the discovery of alkaloid chemotherapeutic agents such as vincristine. While the idea of combination therapy was controversial at first (you're giving cancer patients more poisons over longer periods of time?), the research showed that prolonged protocols and cocktails of complementary medications countered cancer's ability to evolve and evade the treatment. The VAMP program (vincristine, amethopterin, 6-mercaptopurine, and prednisone) raised leukemia remission rates to 60 percent by the end of the decade, and at least half the time these remissions were measured in years. Oncologists were also just starting to mitigate the negative effects of chemotherapy with platelet transfusions. Within a few decades of its inception, chemotherapy was starting to live up to its promise.

The late '60s saw the development of two successful protocols for Hodgkin's disease. The complete remission rate went from nearly zero to 80 percent, and about 60 percent of the original patients never relapsed. Hodgkin's lymphoma is now regarded as a curable affliction.

In the 1970s, chemotherapists expanded beyond lymphomas and leukemias and began to treat operable solid tumors with chemotherapy in addition to surgery. Breast cancer could be treated with less invasive surgeries and a lower risk of relapse if the operation was followed by chemotherapy. The results proved spectacular: Five-year breast cancer survival rates increased by more than 70 percent. In his public radio interview, DeVita credited "at least 50 percent of the decline in mortality" in colorectal cancer and breast cancer to this combined approach.

Chemo was soon being used on a variety of solid tumors, vindicating the work of the "lunatic fringe" and proving that the most common cancers could be treated with drugs after all.

The '70s also saw the advent of the taxane drugs, originally extracted from the Pacific yew. That tree was first identified as cytotoxic, or cell-killing, in 1962 as part of an NCI investigation into medicinal plants. The taxanes were the first cytotoxic drugs to show efficacy against metastatic breast and ovarian cancer.

But something had changed between the development of the first chemotherapies and the creation of this next class of medicines. Taxol, the first taxane, wasn't approved for use in cancer until 1992. While 6-mercaptopurine journeyed from the lab to the doctor's office in just two years, taxol required three decades.

Something clearly has gone wrong.

A Stalemate in the War

In the early 1950s, Harvard's Farber, along with activist and philanthropist Mary Lasker, began to pressure Congress to start funding cancer research. In 1955, federal lawmakers appropriated $5 million for the Cancer Chemotherapy National Service Center (CCNSC), which was set up between May and October of that year.

At $46 million in 2018 dollars, the initial budget of the CCNSC wouldn't be enough to fund the clinical trials of even one average oncology drug today. The National Cancer Institute, meanwhile, now has an annual budget of more than $4 billion.

At Lasker's insistence, CCNSC research was originally funded by contracts, not grants. Importantly, funding was allocated in exchange for a specific deliverable on a specific schedule. (Today, on the other hand, money is generally allocated on the basis of grant applications that are peer-reviewed by other scientists and often don't promise specific results.) The original approach was controversial in the scientific community, because it made it hard to win funding for more open-ended "basic" research. The contracts allowed, however, for focused, goal-oriented, patient-relevant studies with a minimum of bureaucratic interference.

Farber pushed for directed research aimed at finding a cure rather than basic research to understand the "mechanism of action," or how and why a drug works. He also favored centralized oversight rather than open-ended grants.

"We cannot wait for full understanding," he testified in a 1970 congressional hearing. "The 325,000 patients with cancer who are going to die this year cannot wait; nor is it necessary, in order to make great progress in the cure of cancer, for us to have the full solution of all the problems of basic research.…The history of medicine is replete with examples of cures obtained years, decades, and even centuries before the mechanism of action was understood for these cures—from vaccination, to digitalis, to aspirin."

Over time, cancer research drifted from Farber's vision. The NCI and various other national agencies now largely fund research through grants to universities and institutes all over the country.

In 1971, Congress passed the National Cancer Act, which established 69 geographically dispersed, NCI-designated cancer research and treatment centers. James Watson—one of the discoverers of the structure of DNA and at the time a member of the National Cancer Advisory Board—objected strenuously to the move. In fact, DeVita in his memoir remembers the Nobel winner calling the cancer centers program "a pile of shit." Watson was fired that day.

Impolitic? Perhaps. Yet the proliferation of organizations receiving grants means cancer research is no longer primarily funded with specific treatments or cures (and accountability for those outcomes) as a goal.

With their funding streams guaranteed regardless of the pace of progress, researchers have become increasingly risk-averse. "The biggest obstacle today to moving forward effectively towards a true war against cancer may, in fact, come from the inherently conservative nature of today's cancer research establishments," Watson wrote in a 2013 article for the journal Open Biology.

As the complexity of the research ecosystem grew, so did the bureaucratic requirements: grant applications, drug approval applications, research board applications. The price tag on complying with regulations for clinical research ballooned as well. A 2010 paper in the Journal of Clinical Oncology reported that in 1975, R&D for the average drug cost $100 million. By 2005, the figure was $1.3 billion, according to the Manhattan Institute's Avik Roy. The rate at which costs are increasing is itself rising, from an annual (inflation-adjusted) increase of 7.3 percent in 1970–1980 to 12.2 percent in 1980–1990.

Running a clinical trial now requires getting "protocols" approved. These plans for how the research will be conducted, which run 200 pages on average, must go through the FDA, grant-making agencies such as the NCI or NIH, and various institutional review boards (IRBs), which are overseen in turn by the Department of Health and Human Services' Office for Human Research Protections. On average, "16.8 percent of the total costs of an observational protocol are devoted to IRB interactions, with exchanges of more than 15,000 pages of material, but with minimal or no impact on human subject protection or on study procedures," wrote David J. Stewart, head of the oncology division at the University of Ottawa, in that Journal of Clinical Oncology article.

While protocols used to be guidelines for investigators to follow, they're now considered legally binding documents. If a patient changes the dates of her chemotherapy sessions to accommodate family or work responsibilities, it may be considered a violation of protocol that can void the whole trial.

Adverse events during trials require a time-consuming reporting and re-consent process. Whenever a subject experiences a side effect, a report must be submitted to the IRB, and all the other subjects must be informed and asked if they want to continue participation.

A sizable fraction of the growth in the cost of trials is due to such increasing requirements. But reporting—which often involves making patients fill out questionnaires ranking dozens of subjective symptoms, taking numerous blood draws, and minutely tracking adherence to the protocol—is largely irrelevant to the purpose of the study. "It is just not all that important if it was day 5 versus day 6 that the patient's grade 1 fatigue improved, particularly when the patient then dies on day 40 of uncontrolled cancer," Stewart noted drily.

As R&D gets more expensive and compliance more onerous, only very large organizations—well-funded universities and giant pharmaceutical companies, say—can afford to field clinical trials. Even these are pressured to favor tried-and-true approaches that already have FDA approval and drugs where researchers can massage the data to just barely show an improvement over the placebo. (Since clinical trials are so expensive that organizations can only do a few, there's an incentive to choose drugs that are almost certain to pass with modest results—and not to select for drugs that could result in spectacular success or failure.) Of course, minimal improvement means effectively no lives saved. Oligopoly is bad for patients.

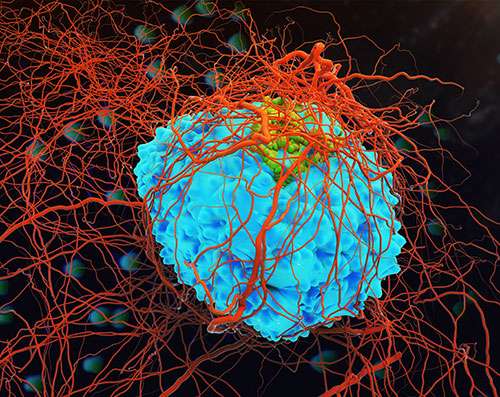

To be sure, cancer research is making progress, even within these constraints. New immunotherapies that enlist white blood cells to attack tumors have shown excellent results. Early screening and the decline in smoking have had a huge impact as well: Cancer mortality rates are finally dropping, after increasing for most of the second half of the 20th century. It's possible that progress slowed in part because we collected most of the low-hanging fruit from chemotherapy early on. Yet we'll never know for sure how many more treatments could have been developed—how much higher up the proverbial tree we might be now—if policy makers hadn't made it so much harder to test drugs in patients and get them approved.

To find cures for cancer, we need novel approaches that produce dramatic results. The only way to get them is by lowering barriers to entry. The type of research that gave us chemotherapy could never receive funding—and would likely get its practitioners thrown in jail—if it were attempted today. Patient safety and research ethics matter, of course, and it's important to maintain high standards for clinical research. But at current margins it would be possible to open up cancer research quite a bit without compromising safety.

Our institutions have been resistant to that openness. As a result, scholars, doctors, and patients have less freedom to experiment than ever before.

Bringing Back the Urgency

The problem is clear: Despite tens of billions of dollars every year spent on research, progress in combating cancer has slowed to a snail's pace. So how can we start to reverse this frustrating trend?

One option is regulatory reform, and much can be done on that front. Streamline the process for getting grant funding and IRB approval. Cut down on reporting requirements for clinical trials, and start programs to accelerate drug authorizations for the deadliest illnesses.

One proposal, developed by American economist Bartley Madden, is "free-to-choose medicine." Once drugs have passed Phase I trials demonstrating safety, doctors would be able to prescribe them while documenting the results in an open-access database. Patients would get access to drugs far earlier, and researchers would get preliminary data about efficacy long before clinical trials are completed.

More radically, it might be possible to repeal the 1962 Kefauver-Harris amendment to the Federal Food, Drug, and Cosmetic Act, a provision that requires drug developers to prove a medication's efficacy (rather than just its safety) before it can receive FDA approval. Since this more stringent authorization process was enacted, the average number of new drugs greenlighted per year has dropped by more than half, while the death rate from drug toxicity stayed constant. The additional regulation has produced stagnation, in other words, with no upside in terms of improved safety.

Years ago, a Cato Institute study estimated the loss of life resulting from FDA-related drug delays from 1962 to 1985 in the hundreds of thousands. And this only included medications that were eventually approved, not the potentially beneficial drugs that were abandoned, rejected, or never developed, so it's probably a vast underestimate.

There have been some moves in the right direction. Between 1992 and 2002, the FDA launched three special programs to allow for faster approval of drugs for certain serious diseases, including cancer. And current FDA Commissioner Scott Gottlieb shows at least some appetite for further reform.

Another avenue worth exploring is private funding of cancer research. There's no shortage of wealthy donors who care about discovering cures and are willing to invest big money to that end. Bill and Melinda Gates are known around the world for their commitment to philanthropy and interest in public health. In 2016, Facebook founder Mark Zuckerberg and his wife Priscilla Chan promised to spend $3 billion on "curing all disease in our children's lifetime." Napster co-founder Sean Parker has donated $250 million for immunotherapy research.

We know from history that cancer research doesn't need to cost billions to be effective. Instead of open-ended grants, donors could pay for results via contracts or prizes. Instead of relying solely on clinical tests, doctors could do more case series in which they use experimental treatments on willing patients to get valuable human data before progressing to the expensive "gold standard" of a randomized controlled trial. And instead of giving huge sums to a handful of insiders pursuing the same old research avenues, cancer funders could imitate tech investors and cast around for cheap, early stage, contrarian projects with the potential for fantastic results.

The original logo of the Memorial Sloan Kettering Cancer Center, designed in 1960, is an arrow pointing upward along with the words Toward the Conquest of Cancer. We used to think cancer was conquerable. Today, that idea is often laughed off as utopian. But there are countless reasons to believe that progress has slowed because of organizational and governmental problems, not because the disease is inherently incurable. If we approach some of the promising new avenues for cancer research with the same zeal and relentlessness that Sidney Farber had, we might beat cancer after all.

This article originally appeared in print under the headline "When Cancer Was Conquerable."