Fake News Freakout

Are internet conspiracy theories ruining America?

Anyone with a Facebook account this year likely witnessed a barrage of false, conspiracy-laden headlines. My news feed informed me that Hillary Clinton was gravely ill, was already dead, had a body double, and murdered dozens of people. (It's amazing what you can learn when you have the right friends.) I also found out that President Barack Obama had worked his way through college as a gay prostitute. (Who could blame him? Columbia is very expensive!)

Reeling after November's unexpected loss to Donald Trump, Democrats have taken to blaming such "fake news" for that outcome. Trump won, the argument goes, because Americans were exposed to inaccurate information; if only they'd had the right info from the right people, voters would have made better choices. A Washington Post piece took the idea further, claiming that fake news stems from a "sophisticated Russian propaganda campaign that created and spread misleading articles online with the goal of punishing Democrat Hillary Clinton, helping Republican Donald Trump and undermining faith in American democracy." In response to such heated calls, Facebook has started looking for ways to rid itself of the fakeries.

Whether or not it's to blame for Trump's victory, fake news can be a problem. People who absorb inaccuracies will sometimes believe them and, worse, act on them. And once an inaccuracy gets lodged in a person's head, it can be difficult to dislodge. The political scientists Brendan Nyhan and Jason Reifler have shown that even when presented with authoritative facts, people will not merely refuse to change their incorrect beliefs; in some instances they'll double down on them. This is called the "backfire" effect.

But it is far from clear that fake news has the sweeping effects its critics claim. People have always put stock in dubious ideas, and the latest deluge of suspect headlines traversing the internet smells more of continuity than it does of change.

I have been studying political communication for more than a decade. Much of that time has been spent looking at conspiracy theories, why people believe them, and how they spread. What we know about how people interact with information—and misinformation—suggests that fake political news doesn't affect people's opinions nearly as much as is being insinuated.

Where Political Beliefs Come From

In the 1940s, the sociologist Paul Lazarsfeld and his colleagues explored how the media affects political views by comparing people's opinions (as measured by surveys) to the news and advertisements they were exposed to. The investigators expected to find evidence that media messages had immediate, powerful, and intuitive effects on people's political views. Instead, they found that opinions were largely stable and invariant to media messages. You could face a barrage of Madison Avenue pitches proclaiming the virtues of either President Franklin Roosevelt or his Republican challenger, but if six months in advance you were inclined to vote for one of those men, in November that was who you'd probably vote for. Very few people changed their preferences over the course of the campaign.

The same finding held throughout the broadcast era: There was very little relationship between people's intended choices and the messaging they encountered. Whatever change did occur usually took the form of people aligning their candidate preferences with their underlying party affiliation. External events and economic conditions mattered, of course, but they tended to make their impact regardless of messaging. This is not to say that news, advertisements, and campaigns have no effects. But those effects tend to be less direct and of lower magnitude than people assume.

Over the last few decades, as media markets segmented, the ratings for the three traditional broadcast news programs have declined and people have sought out other entertainment options. For those who enjoy and seek out political news, market segmentation allowed them to gravitate toward messages that gratified their beliefs. Republicans tuned in to Fox News; Democrats preferred MSNBC. People tended to avoid news they disagreed with—and when they nonetheless did encounter a message that they rejected a priori, they found ways to discount it or to interpret it in a manner that made it congruent with their pre-existing opinions. Political scientists Katherine Einstein and David Glick recently published the results of an experiment showing that Republicans were far more likely than Democrats to believe the Bureau of Labor Statistics' unemployment numbers were faked or misleading. The experiment took place during the Obama administration, so it was no surprise that Democrats resisted the idea that the numbers were false while Republicans could not accept that unemployment was going down. People hear what they want to hear.

This is consistent with broader findings on partisanship. The first major statement of these findings was The American Voter, a 1960 book by a team of scholars at the University of Michigan. During their formative years, the authors concluded, people are socialized into their ideologies and partisan loyalties by their parents, family, schools, friends, religion, media, and many other sources. Their views may evolve as they mature, but by the time a person hits her 30s, her political identity has usually solidified. Candidates, elections, and issues will come and go, but people's political identities will probably not change.

These underlying attitudes determine how people vote. Thus, despite the remarkably low favorability numbers that both major-party candidates enjoyed in 2016, about 90 percent of Democrats voted for Hillary Clinton and about 90 percent of Republicans voted for Donald Trump. There were few defectors.

Partisanship and ideology color how people interpret information. Democrats look at the current unemployment rate of 4.9 percent and conclude that Barack Obama has done a great job on the economy. Republicans see that same number and conclude it is either improperly calculated or, worse, a hoax. Same information, different interpretations.

Perhaps my favorite example of this occurred when Herman Cain ran for the Republican presidential nomination. His successful tenure as CEO of Godfather's Pizza was a primary talking point on the campaign trail. Coincidentally, YouGov's BrandIndex already happened to be surveying the public about the restaurant's brand favorability. When Cain's campaign began, Republicans and Democrats viewed the pizza chain similarly, but as the country learned about Cain, opinions of Godfather's polarized: Democrats began to view the chain more negatively while Republicans did the opposite. By the height of Cain's popularity in late 2011, Republicans and Democrats differed by 25 points (on a scale ranging from -100 to 100) in their view of the company. It was the same pizza, but suddenly people's political loyalties played a major role in determining how much they liked it.

What does this mean for fake news? When my father-in-law read on Facebook that Barack Obama once worked as a gay prostitute, it did not change his view of the president. He was already convinced that Obama had a shrouded past and that the president did not share his values. The prostitution story reinforced my father-in-law's views, but it did not create them. Fake political news tends to preach to the choir.

Has the Internet Really Made the Problem Worse?

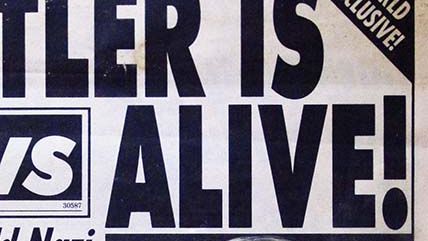

Aha, you might object, but the internet has changed all that. The old gatekeepers have been wiped away, and we are flooded like never before by misinformation, disinformation, and dubious claims of all kinds. Surely that poses an unprecedented threat. As the New York Daily News breathlessly declared a few years ago, "It's official: America is becoming a conspiratocracy. The tendency for a small slice of the population to believe in devious plots has always been with us. But conspiracies have never spread this swiftly across the country. They have never lodged this deeply in the American psyche. And they have never found as receptive an audience."

My research into conspiracy theories suggests we should be suspicious of such claims. "Fake news" and "conspiracy theories" are not precisely the same thing—not all fake news stories involve conspiracies, and for that matter not all conspiracy claims are fake—but they overlap enough that what we've learned about one can inform how we react to the other. Much fake news is sold and consumed on the premise that mainstream outlets cover up important information that is only available through alternative sources.

Contrary to many people's expectations, the internet does not appear to have made conspiracy thinking more common. In a study of letters to the editor of The New York Times, my coauthor Joseph Parent and I found that over time, conspiracy theorizing has in fact declined. There have been spikes in the past—in the 1890s, when fear was focused on corporate trusts, and in the 1950s, during the Red Scare—but there does not seem to be such a surge now. Yes, thanks to Trump (and to some extent Bernie Sanders) our political and media elites are discussing conspiracy theories much more frequently. But that doesn't mean people are believing them more than they did in, say, 2012. If anything, Trump's conspiracy theories follow trends that already existed: His conspiracy theories about foreigners and foreign governments play to fears that people already had. (Our data show that Americans have always possessed a fondness for scapegoating foreigners.)

Furthermore, conspiracy theories may feature more prominently in societies that are less connected to the internet. While survey data is hard to collect in closed societies, political scientist Scott Radnitz's research into post-communist countries suggests that conspiracy theorizing is very common there and used as a political tool for coalition formation, particularly in times of uncertainty. Political scientists Stephanie Ortmann and John Heathershaw have also noted "that anyone recently doing social science or humanities research on the [post-Soviet space] will have come across conspiracy theories," and that in Russia and other post-communist countries conspiracy theories function as the "official discourses of state power." In Africa, where levels of internet connectivity are much lower than in the U.S., conspiracy theories about disease, western medicine, and genetically modified crops abound. Economist Nicoli Nattrass' research shows both the prominence and terrible consequences of AIDS conspiracy theories: With government policy reflecting popular conspiracy beliefs, hundreds of thousands of South Africans either were needlessly infected or died prematurely from the virus.

The idea that fake news from social media is radically transforming Americans' political attitudes relies on several dubious assumptions about people, the internet, and people's behavior on the internet.

Dubious assumption No. 1: In the past, false ideas did not travel far or fast, but social media allow such ideas to be adopted by many more people today.

The internet does allow news, real or fake, to travel faster than ever before. But false rumors traveled widely long before networked personal computers were a significant part of our media landscape. What Gen Xer didn't hear about Richard Gere and the gerbil, or how Walt Disney is cryogenically frozen in EPCOT? Conspiracy theories about the assassination of John F. Kennedy circulated long before social media, hitting their apex just as the internet was starting to reach a mass audience in the 1990s. Since social media emerged, belief in JFK conspiracy theories has gone down about 20 percentage points.

Furthermore, search patterns suggest that the most popular conspiracy theories of the internet era owe their popularity not to the net itself but to more familiar influences. Instead of the herding patterns you'd expect if people were just indiscriminately transmitting ideas from one person to the next, we see patterns that suggest people are following the ideas of elites they trust. Searches for "Obama birth certificate," for example, show three spikes in interest: in 2008, 2011, and 2016. The jump in 2008 was largely due to the mainstream attention given to the theory during and after the election, and those in 2011 and 2016 were due to discussions by Donald Trump. Compare that to nonpolitical but equally dubious ideas, such as the New Age concept of "mindfulness." Searches for such ideas show an almost linear pattern: A few people like the idea, then other people think the idea is worthwhile, then more people, and so forth.

Dubious assumption No. 2: People's views are easily pliable, and can be altered by nothing more than reading a webpage or receiving a communication on social media.

People are not easily convinced by new information. Casey Klofstad, Matthew Atkinson, and I learned this when we attempted, in a study published in 2016, to convince experimental subjects of a conspiracy theory involving the media. We found that people who already believed that the media was conspiring against them were not affected by the new information: They already believed it, and new info would not do much to convince them more. The people not inclined to buy into the conspiracy were not affected either. In fact, the only people we could get to believe in our media conspiracy were those who had no inclination to believe or not believe it in the first place, and those were very few people.

This helps explain why most conspiracy theories run into a ceiling. In the U.S., most partisan conspiracies can't seem to convince more than 25 percent of the population, because in order to buy into such a theory, you need to be inclined to believe in conspiracy theories and you need to have partisan inclinations that match this particular theory's logic. (Birther theories could not convince the fans of Barack Obama, while 9/11 truther theories had a tough time with the fans of George W. Bush.) Even if the internet allows fake news to reach larger audiences than ever before, that doesn't mean most people will be inclined to accept it.

Dubious assumption No. 3: Most people actively access conspiracy theories and fake news on the web.

It is often assumed that because dubious web pages are available in the dark corners of the net, people will automatically seek them out. In fact, most people don't. The web is largely a reflection of the real world, and just because something is posted somewhere doesn't mean anyone cares. There are almost half a million recipes for duck confit on the internet; hardly anyone is racing home to cook duck confit tonight.

People don't seek out conspiratorial or other dubious information on the web nearly as much as they do more mainstream news sources. The New York Times is currently ranked 21st in the U.S. for website traffic, according to the analytics company Alexa. InfoWars, the most popular conspiracy website in the rankings, is at No. 318. People go to the internet to do all sorts of things; getting fake news is at the bottom of the list.

It's true, of course, that people don't necessarily need to go looking for fake news to find it. All sorts of headlines can be dumped into your Facebook or Twitter feed, whether you read the story or not, and these headlines may impact your views. At the same time, people have much more control over their information environment than ever before. They choose who they friend or follow—in some cases because of their politics—and this affects which advertisers try to reach them. So even the fake news you passively receive is at least somewhat shaped by what messages you are already likely to agree with.

Dubious assumption No. 4: The internet largely serves to propagate misinformation.

At the same time that the internet has given voice to peddlers of misinformation, it has also given larger voices to authoritative sources of information. Fact-checking outlets have multiplied in recent years, and there are many websites, such as Snopes.com, that seek out and debunk popular rumors. Rather than relying on village wisdom, you can get expert information directly from doctors, lawyers, government officials, and others on thousands of sites. Google "sunburn," and you'll find out quickly that rubbing butter on it, like my grandmother used to do to me, is not a good idea.

Steve Clarke of Charles Sturt University in Australia has even made the case that the "hyper-critical atmosphere of the internet" has actually "slowed down the development of conspiracy theories, discouraging conspiracy theorists from articulating explicit versions of their favoured theories." Clarke used the "controlled demolition" theory that explosives were used to help bring down the twin towers on 9/11 to demonstrate that while the internet has "aided in the dissemination" of that story, it also appears "to have retarded its development." Since the internet emerged, the empirical claims that conspiracy theorists make can be disputed right away. The internet can quickly put claims to the test and see if there's any truth to them, and it allows experts to quickly weigh in on debates. If conspiracy theorists' claims are debunked, that news also travels fast. And that wouldn't have been the case in the past. The internet acts as an incubator for fake news, but it also acts as the antidote.

First Do No Harm

So while fake news is a problem, it is neither new nor bigger than ever before. And some of the solutions being suggested to combat it may be worse than the disease. Cracking down on fake news runs the risk of restricting true news as well.

Some sites' contents are fully fake: They traffic in outright hoaxes and nothing else. But there are also outlets that have some tether to truth but serve as little more than ideological propaganda. Still other places are widely considered legitimate news sources but occasionally report biased, distorted, or flatly inaccurate information. In the time since Election Day, all of the above have been lumped together as "fake news." How can Facebook or any other platform decide which outlets, headlines, and stories are true and which ones are not?

There is no guarantee that anyone (or any algorithm) could effectively differentiate true from false, and attempts to do so would likely remove some real news, even if only unintentionally. If we ban new and controversial ideas without allowing them to be poked and prodded in the open marketplace, how can society fully test the veracity of what we currently hold to be true?

Take an example from the recent campaign. In the lead-up to November 8, several right-wing websites reported that Donald Trump was winning the electoral map. Since those claims did not match the predictions made by the professional pollsters, those sites' claims were deemed fake. But after the vote, those "fake" predictions turned out to be no more wrong than the predictions made by the pros, and at least they called the winner correctly.

The point isn't that we should put more stock in fringe websites than in, say, Nate Silver. It's just that deciding what is true or false can be trickier than it first appears. If someone tells you otherwise, watch out: He just might be a fake.

This article originally appeared in print under the headline "Fake News Freakout."
