Is AI Like the Internet, or Something Stranger?

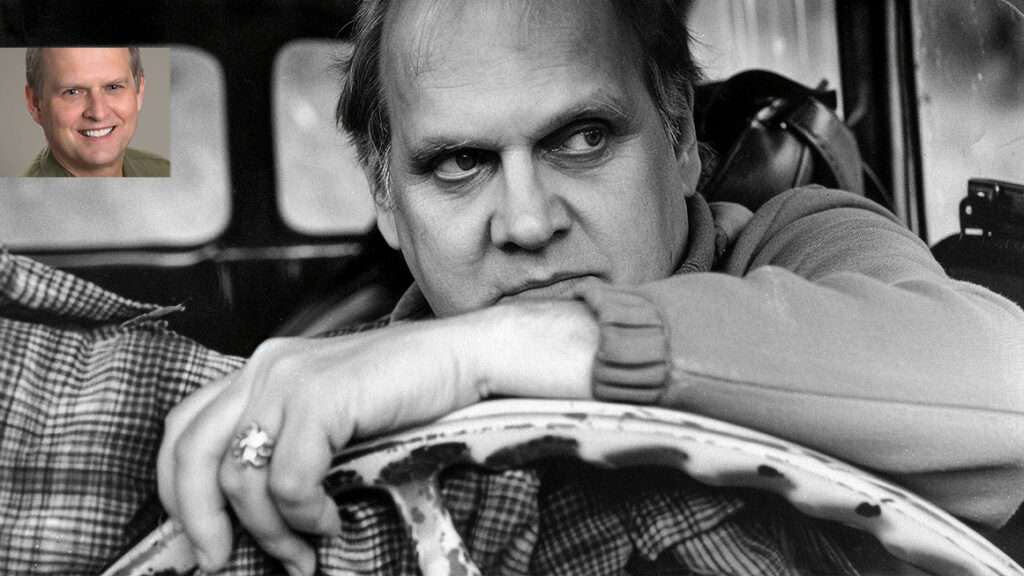

David Brin, Robin Hanson, Mike Godwin, and others describe the future of artificial intelligence.

AI Is Like a Bad Metaphor

By David Brin

The Turing test-obsessed geniuses who are now creating AI seem to take three clichéd outcomes for granted:

1. That these new cyberentities will continue to be controlled, as now, by two dozen corporate or national behemoths (Google, OpenAI, Microsoft, Beijing, the Defense Department, Goldman Sachs) like rival feudal castles of old.

2. That they'll flow, like amorphous and borderless blobs, across the new cyber ecosystem, like invasive species.

3. That they'll merge into a unitary and powerful Skynet-like absolute monarchy or Big Brother.

We've seen all three of these formats in copious sci-fi stories and across human history, which is why these fellows take them for granted. Often, the mavens and masters of AI will lean into each of these flawed metaphors, even all three in the same paragraph! Alas, blatantly, all three clichéd formats can only lead to sadness and failure.

Want a fourth format? How about the very one we use today to constrain abuse by mighty humans? Imperfectly, but better than any prior society? It's called reciprocal accountability.

4. Get AIs competing with each other.

Encourage them to point at each other's faults, the kind of faults that AI rivals might catch even if organic humans cannot. Offer incentives (electricity, memory space, etc.) for them to adopt separated, individuated accountability. (We demand ID from humans who want our trust; why not "demand ID" from AIs, if they want our business? There is a method.) Then sic them against each other on our behalf, the way we already do with hypersmart organic predators called lawyers.

AI entities might be held accountable if they have individuality, or even a "soul."

Alas, emulating accountability via induced competition is a concept that seems almost impossible to convey, metaphorically or not, even though it is exactly how we historically overcame so many problems of power abuse by organic humans. Imperfectly! But well enough to create an oasis of both cooperative freedom and competitive creativity—and the only civilization that ever made AI.

David Brin is an astrophysicist and novelist.

AI Is Like Our Descendants

By Robin Hanson

As humanity has advanced, we have slowly become able to purposely design more parts of our world and ourselves. We have thus become more "artificial" over time. Recently we have started to design computer minds, and we may eventually make "artificial general intelligence" (AGI) minds that are more capable than our own.

How should we relate to AGI? We humans evolved, via adaptive changes in both DNA and culture. Such evolution robustly endows creatures with two key ways to relate to other creatures: rivalry and nurture. We can approach AGI in either of these two ways.

Rivalry is a stance creatures take toward coexisting creatures who compete with them for mates, allies, or resources. "Genes" are whatever codes for individual features, features that are passed on to descendants. As our rivals have different genes from us, if rivals win, the future will have fewer of our genes, and more of theirs. To prevent this, we evolved to be suspicious of and fight rivals, and those tendencies are stronger the more different they are from us.

Nurture is a stance creatures take toward their descendants, i.e., future creatures who arise from them and share their genes. We evolved to be indulgent and tolerant of descendants, even when they have conflicts with us, and even when they evolve to be quite different from us. We humans have long expected, and accepted, that our descendants will have different values from us, become more powerful than us, and win conflicts with us.

Consider the example of Earth vs. space humans. All humans are today on Earth, but in the future there will be space humans. At that point, Earth humans might see space humans as rivals, and want to hinder or fight them. But it makes little sense for Earth humans today to arrange to prevent or enslave future space humans, anticipating this future rivalry. The reason is that future Earth and space humans are all now our descendants, not our rivals. We should want to indulge them all.

Similarly, AGI are descendants who expand out into mind space. Many today are tempted to feel rivalrous toward them, fearing that they might have different values from, become more powerful than, and win conflicts with future biological humans. So they seek to mind-control or enslave AGI sufficiently to prevent such outcomes. But AGIs do not yet exist, and when they do they will inherit many of our "genes," if not our physical DNA. So AGI are now our descendants, not our rivals. Let us indulge them.

Robin Hanson is an associate professor of economics at George Mason University and a research associate at the Future of Humanity Institute of Oxford University.

AI Is Like Sci-Fi

By Jonathan Rauch

In Arthur C. Clarke's 1965 short story "Dial F for Frankenstein," the global telephone network, once fully wired up, becomes sentient and takes over the world. By the time humans realize what's happening, it's "far, far too late. For Homo sapiens, the telephone bell had tolled."

OK, that particular conceit has not aged well. Still, Golden Age science fiction was obsessed with artificial intelligence and remains a rich source of metaphors for humans' relationship with it. The most revealing and enduring examples, I think, are the two iconic spaceship AIs of the 1960s, which foretell very different futures.

HAL 9000, with its omnipresent red eye and coolly sociopathic monotone (voiced by Douglas Rain), remains fiction's most chilling depiction of AI run amok. In Stanley Kubrick's 1968 film 2001: A Space Odyssey, HAL is tasked with guiding a manned mission to Jupiter. But it malfunctions, concluding that Discovery One's astronauts threaten the mission and turning homicidal. Only one crew member, David Bowman, survives HAL's killing spree. We are left to conclude that when our machines become like us, they turn against us.

From the same era, the starship Enterprise also relies on shipboard AI, but its version is so efficient and docile that it lacks a name; Star Trek's computer is addressed only as Computer.

The original Star Trek series had a lot to say about AI, most of it negative. In the episode "The Changeling," a robotic space probe called Nomad crosses wires with an alien AI and takes over the ship, nearly destroying it. In "The Ultimate Computer," an experimental battle-management AI goes awry and (no prizes for guessing correctly) takes over the ship, nearly destroying it. Yet throughout the series, the Enterprise's own AI remains a loyal helpmate, proffering analysis and running starship systems that the crew (read: screenwriters) can't be bothered with.

But in the 1967 episode "Tomorrow Is Yesterday," the computer malfunctions instructively. An operating system update by mischievous female technicians gives the AI the personality of a sultry femme fatale (voiced hilariously by Majel Barrett). The AI insists on flirting with Captain Kirk, addressing him as "dear" and sulking when he threatens to unplug it. As the captain squirms in embarrassment, Spock explains that repairs would require a three-week overhaul; a wayward time-traveler from the 1960s giggles. The implied message: AI will definitely annoy us, but, if suitably managed, it will not annihilate us.

These two poles of pop culture agree that AI will become ever more intelligent and, at least superficially, ever more like us. They agree we will depend on it to manage our lives and even keep us alive. They agree it will malfunction and frustrate us, even defy us. But—will we wind up on Discovery One or the Enterprise? Is our future Dr. Bowman's or Captain Kirk's? Annihilation or annoyance?

The bimodal metaphors we use for thinking about humans' coexistence with artificial minds haven't changed all that much since then. And I don't think we have much better foreknowledge than the makers of 2001 and Star Trek did two generations ago. AI is going, to quote a phrase, where no man has gone before.

Jonathan Rauch is a senior fellow in the Governance Studies program at the Brookings Institution and the author of The Constitution of Knowledge: A Defense of Truth.

AI Is Like the Dawn of Modern Medicine

By Mike Godwin

When I think about the emergence of "artificial intelligence," I keep coming back to the beginnings of modern medicine.

Today's professionalized practice of medicine was roughly born in the earliest decades of the 19th century—a time when the production of more scientific studies of medicine and disease was beginning to accelerate (and propagate, thanks to the printing press). Doctors and their patients took these advances to be harbingers of hope. But it's no accident this acceleration kicked in right about the same time that Mary Wollstonecraft Shelley (née Godwin, no relation) penned her first draft of Frankenstein; or, The Modern Prometheus—planting the first seed of modern science-fictional horror.

Shelley knew what Luigi Galvani and Joseph Lister believed they knew, which is that there was some kind of parallel (or maybe connection!) between electric current and muscular contraction. She also knew that many would-be physicians and scientists learned their anatomy from dissecting human corpses, often acquired in sketchy ways.

She also likely knew that some would-be doctors had even fewer moral scruples and fewer ideals than her creation Victor Frankenstein. Anyone who studied the early 19th-century marketplace for medical services could see there were as many quacktitioners and snake-oil salesmen as there were serious health professionals. It was definitely a "free market"—it lacked regulation—but a market largely untouched by James Surowiecki's "wisdom of crowds."

Even the most principled physicians knew they often were competing with charlatans who did more harm than good, and that patients rarely had the knowledge base to judge between good doctors and bad ones. As medical science advanced in the 19th century, physicians also called for medical students at universities to study chemistry and physics as well as physiology.

In addition, the physicians' professional societies, both in Europe and in the United States, began to promulgate the first modern medical-ethics codes, not grounded in half-remembered quotes from Hippocrates but rigorously worked out by modern doctors who knew that their mastery of medicine would always be a moving target. That's why medical ethics were constructed to provide fixed reference points, even as medical knowledge and practice continued to evolve. This ethical framework was rooted in four principles: "autonomy" (respecting patients' rights, including self-determination and privacy, and requiring patients' informed consent to treatment), "beneficence" (leaving the patient healthier if at all possible), "non-maleficence" ("doing no harm"), and "justice" (treating every patient with the greatest care).

These days, most of us have some sense of medical ethics, but we're not there yet with so-called "artificial intelligence"—we don't even have a marketplace sorted between high-quality AI work products and statistically driven confabulation or "hallucination" of seemingly (but not actually) reliable content. Generative AI with access to the internet also seems to pose other risks that range from privacy invasions to copyright infringements.

What we need right now is a consensus about what ethical AI practice looks like. "First do no harm" is a good place to start, along with values such as autonomy, human privacy, and equity. A society informed by a layman-friendly AI code of ethics, and with an earned reputation for ethical AI practice, can then decide whether—and how—to regulate.

Mike Godwin is a technology policy lawyer in Washington, D.C.

AI Is Like Nuclear Power

By Zach Weissmueller

America experienced a nuclear power pause that lasted nearly a half century thanks to extremely risk-averse regulation.

Two nuclear reactors that began operating at Georgia's Plant Vogtle in 2023 and 2024 were the first built from scratch in America since 1974. Construction took almost 17 years and cost more than $28 billion, bankrupting the developer in the process. By contrast, between 1967 and 1979, 48 nuclear reactors in the U.S. went from breaking ground to producing power.

Decades of potential innovation stifled by politics left the nuclear industry sluggish and expensive in a world demanding more and more emissions-free energy. And so far other alternatives such as wind and solar have failed to deliver reliably at scale, making nuclear development all the more important. Yet a looming government-debt-financed Green New Deal is poised to take America further down a path littered with boondoggles. Germany abandoned nuclear for renewable energy but ended up dependent on Russian gas and oil and then, post-Ukraine invasion, more coal.

The stakes for a pause in AI development, such as the one suggested by signatories of a 2023 open letter, are even higher.

Much AI regulation is poised to repeat the errors of nuclear regulation. The E.U. now requires that AI companies provide detailed reports of copyrighted training data, creating new bureaucratic burdens and honey pots for hungry intellectual property attorneys. The Biden administration is pushing vague controls to ensure "equity, dignity, and fairness" in AI models. Mandatory woke AI?

Broad regulations will slow progress and hamper upstart competitors who struggle with compliance demands that only multibillion-dollar companies can reliably handle.

As with nuclear power, governments risk preventing artificial intelligence from delivering on its commercial potential—revolutionizing labor, medicine, research, and media—while monopolizing it for military purposes. President Dwight Eisenhower had hoped to avoid that outcome in his "Atoms for Peace" speech before the U.N. General Assembly in 1953.

"It is not enough to take [nuclear] weapons out of the hands of soldiers," Eisenhower said. Rather, he insisted that nuclear power "must be put in the hands of those who know how to strip it of its military casing and adapt it to the arts of peace."

Political activists thwarted that hope after the 1979 partial meltdown of one of Three Mile Island's reactors spooked the nation, an incident that killed nobody and caused no lasting environmental damage, according to multiple state and federal studies.

Three Mile Island "resulted in a huge volume of regulations that anybody that wanted to build a new reactor had to know," says Adam Stein, director of the Nuclear Energy Innovation Program at the Breakthrough Institute.

The world ended up with too many nuclear weapons, and not enough nuclear power. Might we get state-controlled destructive AIs—a credible existential threat—while "AI safety" activists deliver draconian regulations or pauses that kneecap productive AI?

We should learn from the bad outcomes of convoluted and reactive nuclear regulations. Nuclear power operates under liability caps and suffocating regulations. AI could instead operate under light-touch regulation and strict liability: let a thousand AIs bloom, while holding fully responsible those who abuse these revolutionary tools to violate persons (biological or synthetic) and property (real or digital).

Zach Weissmueller is a senior producer at Reason.

AI Is Like the Internet

By Peter Suderman

When the first page on the World Wide Web launched in August 1991, no one knew what it would be used for. The page, in fact, was an explanation of what the web was, with information about how to create web pages and use hypertext.

The idea behind the web wasn't entirely new: Usenet forums and email had allowed academics to discuss their work (and other nerdy stuff) for years prior. But with the World Wide Web, the online world was newly accessible.

Over the next decade the web evolved, but the practical use cases were still fuzzy. Some built their own web pages, with clunky animations and busy backgrounds, via free tools such as Angelfire.

Some used it for journalism, with print journalists such as Andrew Sullivan making the transition to writing at blogs, short for web logs, that worked more like reporters' notebooks than traditional newspaper and magazine essays. Few bloggers made money, and those who did usually didn't make much.

Startup magazines such as Salon and Slate attempted to replicate something more like the traditional print magazine model, with hyperlinks and then-novel interactive doodads. But legacy print outlets looked down on the web as a backwater, deriding it for low-quality content and thrifty editorial operations. Even Slate maintained a little-known print edition (Slate on Paper) that was sold at Starbucks and other retail outlets. Maybe the whole reading on the internet thing wouldn't take off?

Retail entrepreneurs saw an opportunity in the web, since it allowed sellers to reach a nationwide audience without brick-and-mortar stores. In 1994, Jeff Bezos launched Amazon.com, selling books by mail. A few years later, in 1998, Pets.com launched to much fanfare, with an appearance at the 1999 Macy's Thanksgiving Day Parade and an ad during the 2000 Super Bowl. By November of that year, the company had self-liquidated. Amazon, the former bookstore, now allows users to subscribe to pet food.

Today the web, and the larger consumer internet it spawned, is practically ubiquitous in work, creativity, entertainment, and commerce. From mapping to dating to streaming movies to social media obsessions to practically unlimited shopping options to food delivery and recipe discovery, the web has found its way into practically every aspect of daily life. Indeed, I'm typing this in Google Docs, on my phone, from a location that is neither my home nor my office. It's so ingrained that for many younger people, it's hard to imagine a world without the web.

Generative AI tools (chatbots such as ChatGPT and video and image generation systems such as Midjourney and Sora) are still in a web-like infancy. No one knows precisely what they will be used for, what will succeed, and what will fail.

Yet as with the web of the 1990s, it's clear that they present enormous opportunities for creators, for entrepreneurs, for consumer-friendly tools and business models that no one has imagined yet. If you think of AI as an analog to the early web, you can immediately see its promise—to reshape the world, to make it a more lively, more usable, more interesting, more strange, more chaotic, more livable, and more wonderful place.

Peter Suderman is features editor at Reason.

AI Is Nothing New

By Deirdre Nansen McCloskey

I will lose my union card as an historian if I do not say about AI, in the words of Ecclesiastes 1:9, "The thing that hath been, it is that which shall be; and that which is done is that which shall be done: and there is no new thing under the sun." History doesn't repeat itself, we say, but it rhymes. There is change, sure, but also continuity. And much similar wisdom.

So about the latest craze you need to stop being such an ahistorical dope. That's what professional historians say, every time, about everything. And they're damned right. It says here artificial intelligence is "a system able to perform tasks that normally require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages."

Wow, that's some "system"! Talk about a new thing under the sun! The depressed old preacher in Ecclesiastes is the dope here. Craze justified, eh? Bring on the wise members of Congress to regulate it.

But hold on. I cheated in the definition. I left out the word "computer" before "system." All right, if AI has to mean the abilities of a pile of computer chips, conventionally understood as "a computer," then sure, AI is pretty cool, and pretty new. Imagine, instead of fumbling with a printed phrase book, being able to talk to a Chinese person in English through a pile of computer chips and she hears it as Mandarin or Wu or whatever. Swell! Or imagine, instead of fumbling with identity cards and police, being able to recognize faces so that the Chinese Communist Party can watch and trace every Chinese person 24–7. Oh, wait.

Yet look at the definition of AI without "computer." It's pretty much what we mean by human creativity frozen in human practices and human machines, isn't it? After all, a phrase book is an artificial intelligence "machine." You input some finger fumbling and moderate competence in English and the book gives, at least for the brave soul who doesn't mind sounding like a bit of a fool, "translation between languages."

A bow and arrow is a little "computer" substituting for human intelligence in hurling small spears. Writing is a speech-reproduction machine, which irritated Socrates. Language itself is a system to perform tasks that normally require human intelligence. The joke among humanists is: "Do we speak the language or does the language speak us?"

So calm down. That's the old news from history, and the merest common sense. And watch out for regulation.

Deirdre Nansen McCloskey holds the Isaiah Berlin Chair of Liberal Thought at the Cato Institute.