Social Media Executives Echo Politicians' Hysteria About 'Russian Disinformation'

If our democracy cannot survive another 43 hours of political videos on YouTube, it is already doomed.

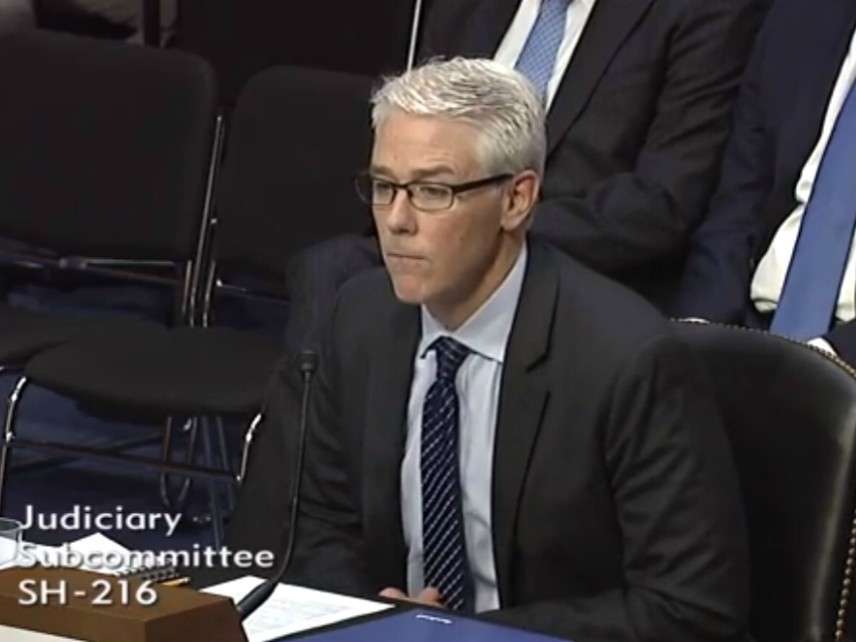

Yesterday representatives of Facebook, Google, and Twitter testified before a Senate subcommittee about online "Russian disinformation," sounding a note of alarm that echoed legislators' concerns and therefore grossly exaggerated the threat. "When it comes to the 2016 election," said Facebook General Counsel Colin Stretch, "the foreign interference we saw is reprehensible and outrageous and opened a new battleground for our company, our industry, and our society. That foreign actors, hiding behind fake accounts, abused our platform and other internet services to try to sow division and discord—and to try to undermine our election process—is an assault on democracy, and it violates all of our values."

The idea that Russian ads on Facebook, Russian tweets on Twitter, and Russian videos on YouTube "undermine our election process" and constitute "an assault on democracy" (let alone that such propaganda "violates all of our values") is hard to take seriously given what we know about the nature and scale of this operation. Social media platforms have every right to insist that users follow their terms of service, which in Facebook's case ban phony source descriptions (falsely identifying a Russian's posts as an American's, for example). But the expectation that Facebook, Twitter, and Google will police political discourse to minimize "Russian influence" is not just impractical but, if backed by the threat of legislation, contrary to the First Amendment.

It is important to keep in mind that we are not talking about direct interference with the election process (by hacking computers that tally votes, say). We are talking about efforts to persuade people—or, as seems to have been more common, reinforce their pre-existing opinions—through words and images. Although some of these messages can fairly be described as "disinformation" (such as a fake letter posted on Twitter supposedly documenting a $150 million contribution to Hillary Clinton's campaign by the conservative Bradley Foundation), some (such as reports about police shootings) were entirely accurate, while others were expressions of opinion on subjects such as racism, LGBT issues, immigration, and gun rights.

Except for the fact that the messages appear to have been sponsored by the Russian government, there was nothing especially sinister or insidious about them. Cases of broken English and awkward phrasing aside, they were indistinguishable from the mixture of facts, lies, and blather that constitutes good, old-fashioned, American-produced political discourse. So when The New York Times reports that Russian-sponsored "political ads and other content" (including videos from the government-sponsored news outlet RT) "were meant to sow discord or chaos," it is either being hysterical or ascribing absurdly unrealistic ambitions to the Russian government. Our social and political order is not one viral video or inflammatory tweet away from catastrophic collapse.

Russian participation in the online U.S. political debate looks even less scary when you consider how tiny its footprint seems to be. On Monday a Times headline proclaimed that "Russian Influence Reached 126 Million Through Facebook Alone," which sounds impressive unless you realize that an ad can "reach" people without being noticed or read, let alone persuading anyone. What the headline really meant, as the Times explained in its report on yesterday's hearing, is that "more than 126 million users potentially saw inflammatory political ads bought by a Kremlin-linked company, the Internet Research Agency." Yes, and since the post you are reading is available on the internet, it could potentially be seen by 3.6 billion people.

No doubt Vladimir Putin would love to determine the outcome of presidential elections by spending $100,000 on Facebook ads. It is far less clear that he (or anyone else) has the power to do that, no matter how big the ad buy. As Brian Doherty noted here on Monday, the evidence suggests that politicians and campaign "reformers" greatly overestimate the effectiveness of political advertising.

According to Facebook, the ads bought by the Internet Research Agency represented "four-thousandths of one percent (0.004%) of content in News Feed, or approximately 1 out of 23,000 pieces of content." The Times concedes that "Russia-linked posts represented a minuscule amount of content compared with the billions of posts that flow through users' News Feeds every day." Between 2015 and 2017, the paper notes, "people in the United States saw more than 11 trillion posts from pages on Facebook."

The Russian contribution on other platforms looks similarly unimpressive. Twitter Acting General Counsel Sean Edgett testified that "the 1.4 million election-related Tweets that we identified through our retrospective review as generated by Russian-linked, automated accounts constituted less than three-quarters of a percent (0.74%) of the overall election-related Tweets on Twitter at the time." The Times admits that tweets by Russian operatives posing as Americans "may have added only modestly to the din of genuine American voices in the pre-election melee," and "many of the suspect posts were not widely shared."

Still, the paper insists, the tweets "helped fuel a fire of anger and suspicion in a polarized country." As Ed Krayewski noted a few weeks ago, the headline over another Times story breathlessly claimed that "Russia Harvested American Rage to Reshape U.S. Politics." Talk about disinformation.

Richard Salgado, Google's senior counsel on law enforcement and information security, testified that the company found 18 YouTube channels offering about 1,100 videos with political content that were "uploaded by individuals who we suspect are associated with this [Russian] effort." The videos, which totaled 43 hours on a platform where 400 hours of content are uploaded every minute and more than 1 billion hours are watched every day, "mostly had low view counts," with less than 3 percent attracting more than 5,000 views.

"While this is a relatively small amount of content," Salgado hastened to add, "we understand that any misuse of our platforms for this purpose is a serious challenge to the integrity of our democracy." I assume Salgado was trying to placate senators outraged (or pretending to be outraged) by "Russian interference in our electoral process." But if our democracy cannot survive another 43 hours of political videos on YouTube, it is already doomed.

I'm certain US Intelligence has NEVER attempted to influence an election in a foreign country...

It's been mostly a right-wing agenda directing those American influence campaigns.

Sure, if opposing communists is considered to be a 'right-wing agenda'. Say, remember when the Left in this country used to say that they weren't socialists or supportive of socialists? The good old days.

We have certainly pretended to approve of Pussy Riot's inappropriate agenda, sneeringly telling our Russian comrades how to run their criminal justice system. Far worse than the foreign "propaganda" certain individuals are complaining of is the domestic disinformation we've been confronted with in the so-called "First Amendment dissent" of a single, isolated judge in our nation's leading criminal "satire" case, and in outrageous "opinions" from the academic left such as this:

http://forward.com/opinion/385.....s-scholar/

P.s. and it is truly absurd that our senators should complain about online political advertising, when our nation is confronted, day after day, by outrageous, indiscreet documentation of true crimes on websites that would, one can still hope, never be allowed to exist in Russia, such as this:

http://raphaelgolbtrial.wordpress.com/

You want to explain Obama and US tax dollars in the Israeli election?

"You want to explain Obama and US tax dollars in the Israeli election?"

If Hole can't find it on Vox or HuffPo, Hole is not capable of responding to anything.

.... because they are too busy doing that here.

Yeah but see the problem is you can throw a rock in any direction and hit someone who is busily reciting Russian-originated horseshit mostly about evil Hillary Clinton. It takes up a good 60% of these very comments boards sometimes.

I see y'all have finally gotten down the list of talking points to "All of the heinous shit I said and did never actually happened, you've just been fed a bunch of lies from the evil Russians."

I guess that's better than "Vast Right-Wing Conspiracy".

So horseshit is Russian for true story since Hillary is evil

Fuck Tony at this point the Russians may be regurgitating Sean Hannity. It's that crazy in Republican land.

What's funny is that true party people like John Boehner and scores of formerly ultra-partisan Republican pundits are losing their shit about what a freakshow it has become, yet all these independent-minded nonpartisan free-thinking libertarians just continue regurgitating the nonsense.

"What's funny is that true party people like John Boehner and scores of formerly ultra-partisan Republican pundits are losing their shit about what a freakshow it has become, yet all these independent-minded nonpartisan free-thinking libertarians just continue regurgitating the nonsense."

I'll bet you imagined there was a credible point buried in that pile of bullshit.

The point is you're dumber than Bill Kristol, who's never been right about anything.

You don't say?

Tony|11.1.17 @ 4:39PM|#

"The point is you're dumber than Bill Kristol, who's never been right about anything."

Gee, Tony, how clever!

You stupid piece of shit, you LOST. And you lost because you're too stupid to realize how pathetic that walking baggage cart is.

Fuck off.

"people like John Boehner "

don't care

Memory Hole|11.1.17 @ 2:37PM|#

"Fuck Tony at this point the Russians may be regurgitating Sean Hannity. It's that crazy in Republican land."

You, OTOH, post nothing other than bullshit.

But what's the difference between Russian horseshit and American horseshit? You get pissed at anything bad said about Queen Hillary regardless of its point of origin.

Only when it's a lie, especially one whose debunking you can find with one Google search. Stop being lazy partisan shitheads.

"especially one whose debunking you can find with one Google search"

You said this the other day, and when someone proved you wrong, you shut up and hid.

Nobody did any such thing. They dismissed the pages and pages of credible reporting as innuendo.

People believe what they want to believe, I guess. How nice it must be to live like that.

People believe what they want to believe, I guess. How nice it must be to live like that.

Yes, Captain Above-it-all. You certainly don't do that.

"Nobody did any such thing. "

You should learn to use Google.

Tony|11.1.17 @ 3:21PM|#

"They dismissed the pages and pages of credible reporting as innuendo."

So they called you on your bullshit? How.........

boring.

"They dismissed the pages and pages of credible reporting as innuendo."

I saw no evidence of credible reporting in anything I read via the Google search.

I still challenge you to show me some credible reporting, assuming you know the difference between real reporting and opinion editorials.

You only found opinion pieces discussing the targeted Russian hacking during the 2016 election? So they just pulled it from their asses?

That doesn't address the point though: if it's a lie I don't see how its point of origin matters all that much. Getting in a lather over a lie that comes from Russia when there are gobs of American lies strikes me as pointless.

That's because it IS pointless. I guarantee you that a fraction of a percent of people were influenced enough by Facebook, Twitter, etc. that they actually changed their vote. There are probably more transgender people as a percentage of our society.

What this all boils down to is that it was Herself's turn, Trump stole that from her, and Libertarians are big meanie poopie heads for not acknowledging that.

Well, I can understand that. I've been a big meanie poopie head for decades. I'm rather proud of it.

"What this all boils down to is that it was Herself's turn, Trump stole that from her, and Libertarians are big meanie poopie heads for not acknowledging that."

Yep.

If all the votes affected by TEH RUSSSSKIESS!!!!!!!!!!!! were tabulated, you could do so without taking off your shoes.

Propaganda works, otherwise there wouldn't be such a thing as propaganda.

You sit there and deny that the most frequent reason people give for voting Trump is because he wasn't that cankle bitch Hillary with her emails and murders.

So you think the truth is propaganda. How typical.

"Homeopathy works, otherwise there wouldn't be such a thing as homeopathy"

Tony logic.

So you are claiming that propaganda doesn't work?

Are you claiming Homeopathy does?

No. Are you often a fallacious shithead or just when you lose an argument?

Analogies don't work, otherwise there would be such a thing as sevo not completely misunderstanding them.

And you don't think Russia is deliberately trying to foment "The Resistance" right now?

The Steele Dossier probably has some truth in it, but it is also obviously filled with blatant lies and falsehoods intentionally added by Russians aimed specifically at fomenting the "Resistance".

What has been proven to be a falsehood?

The pee story, for starters.

When?

Tony|11.1.17 @ 2:21PM|#

"Yeah but see the problem is you can throw a rock in any direction and hit someone who is busily reciting Russian-originated horseshit mostly about evil Hillary Clinton."

"Russian-originated" since the US press was too pathetic to publish that hag's baggage?

Or are you just flat-out lying?

What baggage would that be? Be specific, comrade.

Just the first one to show up, from that hot-bed of Trump support, WaPO:

"Hillary Clinton has a baggage problem"

https://www.washingtonpost.com/news/the-fix/wp/2015/04/23/hillary-clintons-baggage-problem/?utm_term=.bbba7bf3cf11

And this one is 'way too early to include the criminal treatment of classified data, her illegal server network, her getting coached on questions for the debate, etc, etc, etc.

It's fun! You can find almost as much baggage as you can Obo lies! Well, maybe not THAT many...

Oh, the horror. Also stop lying and exaggerating.

Do you not see the current president? Is it too horrible to bear?

Basically this. There is a cyber Cold War going on and this election was score 1 for Putin.

YC|11.1.17 @ 3:57PM|#

"Basically this. There is a cyber Cold War going on and this election was score 1 for Putin."

Basically, you bullshit also.

I'll agree with YC for this reason.

Regardless of the truthfulness of Russian meddling, some Americans are convinced that Russia affected the outcome. Since it's being played that way in leftist media, the mere perception of it is a win for Putin because it makes it sound like he was successful.

Score 1 for Putin, and he couldn't have done it without the help of liberals.

So here's an instance of Russians organizing both sides of a protest (in Texas, mind you), and then watching, presumably gleefully, as the sheep on both sides fought it out.

I literally laughed out loud at "the rally organizers were nowhere to be found."

http://www.businessinsider.com/russia-trolls-senate-intelligence-committee-hearing-2017-11

BI is not a source, in case you're confused

I would say "let me google that for you," as it is all over now, including the MSM you are probably faux-terrified of, but judging by your post history and syntax I'm pretty sure I am talking to an idiot who wants to keep his head in the sand.

Eagerly awaiting the next news from Mueller 🙂

The US has about 700 overseas bases. Russia has about two.

The Cold War is over. You can come out of your bomb shelter now.

They used what resources they had and stuck us with Trump, which is more damage than they ever managed during the real Cold War.

You're an idiot.

"They used what resources they had and stuck us with Trump, which is more damage than they ever managed during the real Cold War."

They seem to have caused brain-damage among lefty idiots, making them even dumber yet.

So it's a win for the US.

Are the Social Media Executives also going to condemn VOICE OF AMERICA?

In 2013 the US repealed the law which prevented US propaganda being used in the US

http://foreignpolicy.com/2013/.....americans/

"...while others were expressions of opinion on subjects such as racism, LGBT issues, immigration, and gun rights."

So, basically, they used the divisions sown by American identity politics to their advantage. No wonder our politicians and their financiers have their pantaloons in a bunch: the dastardly Russkies stole their idea. Maybe they should sue them for copyright infringement.

Social Media Executives Echo Politicians' Hysteria About 'Russian Disinformation'

neither of those are real jobs, so the people who have them need to find something to fill their days

Yesterday representatives of Facebook, Google, and Twitter testified before a Senate subcommittee about online "Russian disinformation," sounding a note of alarm that echoed legislators' concerns and therefore grossly exaggerated the threat

None wanted to be the guy who didn't give the Government a harrumph.

It's funny that people who think the ad that was running in Virginia portraying conservative rural voters as monsters running down immigrant kids in their pickup trucks is okay would have the brass to complain about propaganda.

Which ad was that?

I think the Russians did affect the elections. And I believe they did it by getting Hillary and Obama to a) do the great deal for Iran, Putin's close ally, for nothing but promises, b) sell the US uranium to Russians, and c) let Putin invade Crimea without consequence. After all, Obama did promise Medvedev he'd "have more flexibility after the [2012] elections" on a hot mic unbeknownst to Obama.

Why? Because Putin's (as Obama and Hillary have told us) great hackers got her emails, including the ones from Obama suspiciously using an alias (about which he lied, claiming he learned of her server via news reports) from her email server she had set up in 2008, and protected by an email guy. They of course had to cover it up. Then they tried to be good puppets by offering up money to Russians to give the dirt they had on Trump in the hopes Putin would prefer them (which he did prefer, but they assumed he had dirt on Trump as well).

Those compromises you're suggesting Obama and Hillary made are pretty libertarian. If that's all that's needed to promote peace and free trade in this country, I hope Putin has explicit pictures of every US politician with Lindsey Lohan and a donkey. Maybe then we'll make some headway in this country.

So what?

The communist party is a registered US political party. What is up with all the russo-phobia?

The Russian BS on social media is no different than the other BS on social media.

All this amounts to is the camels nose under the tent of regulating who can say what on line.

I am still waiting for a reasonable explanation of why Russia would want 'Mr. I-am-hardass' as president over someone they already had in their pocket. I mean, after the uranium deal, the Iranian deal, and all the million-dollar speeches, what the hell does Russia have to fear from Hillary or the Democratic Party?

That she might spell "reset" in Russian correctly this time?

Smokescreen. Diversion. Sowing chaos. Pick one.

There isn't one. The Dems run the MSM, is all. They're spinning whatever tales they think make them look good.

There were two facebook groups, one was like... friends of Texas which was linked to Russia. It had like 200,000 subscribers. Then there was a pro-Muslim group that had 350,000 subscribers, also linked to Russia. Both groups scheduled a rally in the same place at the same time.

Someone else tweeted like... a MILLION TIMES! No word on how many subscribers the twitter account had.

The news on this is so laughable it should be scary, because you know what the reaction is going to be. But it's still funny as hell.

Well, in those last couple of weeks there HRC was campaigning on sending ground troops into Syria to overthrow Assad and, by implication, shut down one of Russia's two last remaining bases outside of its borders, which also happens to be the only warm water port they fully control.

The Obama administration was also actively arming rebels in Syria, including ISIS and al-Qaeda, in order to further destabilize the Assad regime.

Trump campaigned on obliterating ISIS and "Assad is none of our business."

Per Wikipedia, "On 19 July 2017, it was reported that the Donald Trump administration had decided to halt the CIA program to equip and train anti-government rebel groups, a move sought by Russia."

"What is up with all the russo-phobia?"

Losers like Tony, Hole, turd and the rest still cannot believe that the rest of the world was not willing to turn a blind eye to the immense "awfulness" of that miserable hag.

THEY bought the story, and they're 'smart', so it can't be that they were shown to be pathetic, partisan sheep! No sir!

It must be "COLLUDING!!!!", or "TALKING TO THEM!!!", or finally, "WELL, THEY RAN SOME ADS!!!! DOESN'T THAT COUNT?"

Huh? There's a pretty obvious anti-Russian sentiment coming from the American left.

Ultimately, the Russians fell hook line and sinker for Trump's claims that he was going to be less interventionist abroad. Putin should have spent some time on a libertarian web site and he would have gotten some compelling arguments that Trump was bullshitting all along.

I found myself laughing out loud this morning while listening to NPR on this issue. I've been in a quasi-news blackout recently, and definitely haven't had the NPR stations on, but for some reason, I flipped over this morning.

They went on and on about how many millions of people saw those Fake News Ads from Russia. Eyeballs eyeballs eyeballs. No one could quantify what it meant, but yet they were absolutely breathless in their reporting. And it seems that everyone agrees that We Need to Crack Down on This. One person actually suggested every ad contain a "Stamp of Origin".

No one mentioned the first amendment.

What's funny to me about this kind of thing is that the talking heads on NPR supposedly saw the ads too. But they're immune from the ads' magical powers...how? Oh right, they're just so much smarter than the commoners in flyover country.

I heard the dumb asses on NPR this morning as well. This is what struck me. They asked one of them if it was possible to trace the origin of the content, given that tech companies say it's difficult to impossible. He hemmed and hawed about how tech companies lie and then said they could have something in place by the 2018 election.

So this is the state of the left. A technologically ignorant hack listens to people who know what they are talking about tell him something can't be done and his response is to say not only can it be done but it can be ready in 12 months or so. Experts tell him there is no solution, he doesn't know of any solution yet he can proclaim the exact timeline to get a fantasy solution implemented.

These people really are that retarded.

I almost can't even listen to NPR anymore. Their complete lack of skepticism regarding the DNC's narratives and their refusal to acknowledge the existence of any story whatsoever except OMG RUSSIAN MEDDLING!! COLLUSION! COLLUSION! has gotten completely unbearable.

At least our local Bay Area commie station, KPFA, had a Democratic congressman on who was explaining that bringing up Manafort on 7-year-old tax evasion charges when they were supposed to be looking for collusion with Russian agents is just like when the Republicans brought perjury charges against Clinton for lying about a sexual relationship when they were supposed to be investigating real estate fraud.

To their credit, they pointed out to the moron that this seriously backfired on the Republicans and asked him why the current investigations won't backfire on the Democrats. He didn't really have a very coherent response.

NPR's coverage was a baffling three-ring circus that was painfully light on facts and overloaded with innuendo. The Russians placed Fake Ads. *cue dramatic music*

People saw the fake ads. *cue dramatic music*

What was the measurable effect of the ads? Oh wait, they didn't ask that question. Let me skip to what they did ask next:

How do we stop this from happening?

The stupidity of the reporting has so many secondary implications that would come back to bite... well, pretty much everyone in journalism, that it makes my head hurt.

Ask anyone why they voted for Trump. More often than not the reason is "At least he isn't Hillary," and they may give a list of Russia-supplied horseshit lies about her. Propaganda works. And Americans are dumb.

You understand, right, that the anti-Hillary people have been saying the same things about her (whether true or not) since the 90s?

How long do you think this Russian conspiracy to keep her out of the White House has been going on?

Tony|11.1.17 @ 4:44PM|#

"Ask anyone why they voted for Trump. More often than not the reason is "At least he isn't Hillary," and they may give a list of Russia-supplied horseshit lies about her."

You're a laugh-riot.

By horseshit lies he means in their own words via stolen Emails.

If it was all horseshit, DWS would not have resigned from the DNC.

What's funny is that the Hillary-can-do-no-wrong crowd will claim "it's not Hillary" when one of Hillary's associates gets caught red-handed, but when a low-level worker for the Trump campaign is caught, it's evidence that Trump is bad.

The Hillary can do no wrong crowd just can't be taken seriously. They are hypocrites at every turn.

Cue response: But she IS a cankle email murder bitch! I came to that conclusion independently with much empirical observation!

She and her husband have been sleazing around national politics for over 24 years. The voters already damn well knew what she is. The Democrats had to nominate a known unpleasant quantity instead of finding another cipher with racial appeal like Obama. Hillary lost because black voters were not as motivated to come out in numbers for an old white woman as they were for the youngish black man.

The Russians planted fake stories about Hillary staying with Oval Office Mouth Fuck Billy Bob, and Benghazi, and the double flush bathroom email server, and her multiple drilling faints on the campaign trail! None of that happened! It's a lie from the Russian deplorables!

And she didn't really say that, either!

Propaganda works!

They also planted that cough on her.

"Republicans brought perjury charges against Clinton for lying about a sexual relationship when they were supposed to be investigating real estate fraud."

Wasn't it the Paula Jones sexual harassment case where the question was asked?

One person actually suggested every ad contain a "Stamp of Origin".

Did they get the idea from looking at a bag of fair-trade coffee? Good job, NPR, way to defy stereotypes

"the foreign interference we saw is reprehensible and outrageous and opened a new battleground for our company, our industry, and our society. That foreign actors, hiding behind fake accounts, abused our platform and other internet services to try to sow division and discord—and to try to undermine our election process—is an assault on democracy, and it violates all of our values."

Bull

.

.

.

shit.

If that's an assault on democracy, democracy needs a 'safe space'.

The federal government has an unprecedented opportunity here. It can now enlist cargo-shorts-wearing CEOs of social media companies to outsource censorship. And these social media execs are all too eager to do it.

I'm amazed at how much power $100,000 of ads on Facebook had. Amazed.

It's a shame the D candidate didn't have a couple of bucks to spend!

Yanks to the Rescue!

I nominate this to be the most unintentionally funny news story of the year. NPR heads were babbling incoherently and even when they talked amongst themselves, they couldn't figure out what any of the Russian ads meant.

NPR heads were babbling incoherently and even when they talked amongst themselves, they couldn't figure out what any of the Russian ads meant.

My schadenfreude moment is that NPR did a piece about how House Of Cards was revealed as a source/training material for some dens of Russian hackers and, not a week later, Kevin Spacey gets outed in the same vein as Harvey Weinstein for allegedly sexually assaulting a 14-yr.-old boy.

The only way it could get more aptly bizarro is if Hillary Clinton (spoiler alert) shoved Kate Mara off a subway platform or claimed "I am not a crook!" while allegedly doing an impression of Pres. Underwood. NPR, of course, would continue to be shocked that wealthy people who aren't Republicans commit crimes and/or murder people.

If any of you are wondering... I'm sorry, I'm laughing while I'm typing this... if any of you want to see the sinister ads that caused Hillary to lose, here are some examples.

If there are people who seriously believe you can vote via Twitter, I think those people absolutely should be encouraged to do so.

If asking for ID when you vote is racist, why wouldn't voting via Twitter be valid?

So the hag lost because hag-voters were stupid enough to try to vote via twitter?

I'd say the Russkis did us a real favor if voters of that intelligence level were removed from the results.

Hey, Tony! We got your number!

Please go easy on Tony. He's only just now learning that his vote didn't count.

It's been a YEAR, for Pete's sake!

You'd think even lefty idiots like Tony could have grown up a bit by now and realize THEY LOST!

(I love pointing it out to them)

Even if you acknowledge this as an actual problem, what is the solution that will not put a prior restraint on Americans exercising their rights to comment on and support or campaign against a particular candidate?

Russians lurking under every rock! These paranoid nutters are so desperate to save face knowing Trump trounced not just their candidate but their entire bubble-reality. They need to use Russians as a scapegoat for their own political shortcomings. Very sad people under the spell of collective narcissism and can't get over it.

The only people who deny the existence of a Russian plot are Putin, Assange, Trump, and you idiots.

The Russians probably haven't not had "a plot" since the late 1940s. The question is, to what effect?

WHY AREN'T YOU AFRAID OF RUSSIA YOU UN-AMERICAN ASSHOLE!!

What was that line that Fred Thompson had in "The Hunt For Red October":

"The Russians don't take a dump without a plan"?

I have it on good authority that Hillary lost because Pizzagate. That and propaganda works, so, of course it was Pizzagate.

Fucking first amendment!

Alls I know is, someone better get a handle on this totally gay first amendment thing, 'cause it's harshing my mellow.

It was those damn Ruuushians and the Macedonian content farmers runnin' round here with their Pokémon ads. That's right, kids, the Charizard was really Putin convincing you to vote for Trump. Hillary and the DNC commissioned the Dossier to counter the Ruuuushian influence in the election, but hookers peeing on Obama's bed wasn't enough to stem the tide of the Ruuushian Pikachu ad blitz on Facebook.

"You said Russia. And the 1980s are now calling to ask for their foreign policy back. Because the Cold War has been over for 20 years."

-Some Fucking Guy

"Except for the fact that the messages appear to have been sponsored by the Russian government"

Appear? How is this equivocal shit any better than fake news? If true it matters (I guess), if not it destroys the whole fucking story. You can't weasel word your way past that.

Great reporting.

Just hours before the 2016 election, liberal sites were gleefully showcasing Hillary Clinton's HUGE electoral lead over Trump right up until the time I went to bed. Because of the Hillary's-got-it-in-the-bag frenzy, I figured she was a shoo-in.

Liberals were soon in vermilion-faced shock. They'd been misled.

Liberals again are misled by their media, creating a Trump-Russia-collusion frenzy. It could worsen this:

"The whole Democratic Party is now a smoking pile of rubble: In state government things are worse, if anything. The GOP now controls historical record number of governors' mansions, including a majority of New England governorships. Tuesday's election swapped around a few state legislative houses but left Democrats controlling a distinct minority. The same story applies further down ballot, where most elected attorneys general, insurance commissioners, secretaries of state, and so forth are Republicans." (Search Vox.com)

And this:

"The Democratic Party is viewed as more out of touch than either Trump or the party's political opponents. Two-thirds of Americans think the Democrats are out of touch—including nearly half of Democrats themselves. ...a large chunk of Democrats feel that their party is united in a vision—that's at odds with the concerns of the American public." -Washington Post

archive.is/SAj6w

"The videos, which totaled 43 hours on a platform where 400 hours of content are uploaded every minute and more than 1 billion hours are watched every day, "mostly had low view counts," with less than 3 percent attracting more than 5,000 views."

Oh, the hand-wringing: these pearl clutchers need to be raked over the coals a bit more. Their self-righteous indignation seems to be at odds with their lack of interest in maintaining any semblance of objective truth flowing through their platforms. They wouldn't have been in that room had Hillary won (a cool breeze comes over me, I shudder).

Do any of these Tech Morons have experience in the "real world", or have they been cosseted away their entire lives with their little 1's and 0's?