Government Is the Cause of—Not the Solution to—the Latest Hacking Outbreak

A failure of transparency and responsibility by multiple nations.

Privacy and cybersecurity experts and activists have been warning for ages that governments have their priorities all wrong. National security agencies (not just in America but in other countries as well) spend much more time and money trying to breach the security systems of other countries and potential enemies than they do bolstering their own defenses. Reuters, drawing on information from intelligence officials, determined that the United States spends $9 on cybersurveillance and government hacking for every $1 it spends on defending its network systems.

The "WannaCry" malware attack that spooled out over the end of last week and into the weekend implicates both sides of this problem. First of all, the ransomware allegedly originated from vulnerabilities and infiltration tools developed by the National Security Agency (NSA), which the agency had been hoarding and keeping secret from the technology companies whose defenses those tools breached. All of this secrecy was meant to preserve the NSA's ability to engage in cyberespionage and to keep technology companies from building defenses that would have inhibited government surveillance. The NSA lost control of these infiltration tools, and last month the hacker group known as the "Shadow Brokers" exposed them publicly.

So this WannaCry attack or something like it (and probably many more) was on its way, and attentive information technology specialists knew it and were, hopefully, prepared. Microsoft had already released a patch to address the vulnerabilities. Except not everybody downloaded it.

The non-downloaders included parts of the United Kingdom's National Health Service (NHS), the socialized, taxpayer-funded healthcare system that covers the entire population there. The NHS had been warned that computers running old Microsoft operating systems were vulnerable, but several hundred thousand of its computers had not been upgraded, according to the BBC.

So on the one hand, we have a government agency refusing to disclose cybersecurity vulnerabilities it had discovered so that it could exploit them, potentially leaving everybody's computers open to attack. And on the other hand, we have a government agency failing to properly prioritize cybersecurity to protect the data and privacy of its citizens (it blamed a lack of money, of course).

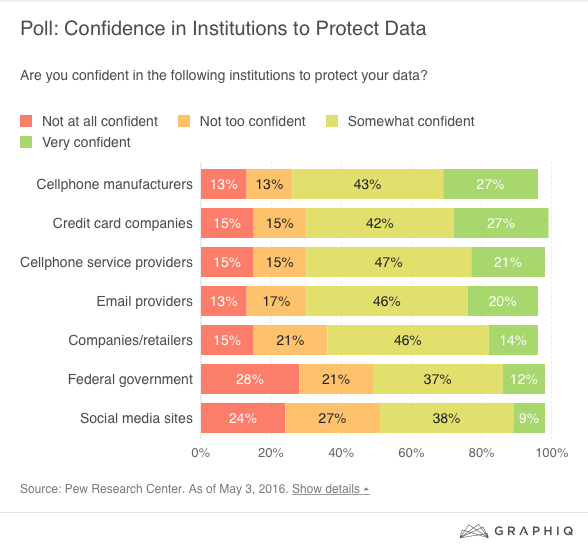

This poll from Pew from last year shouldn't be a surprise, then. Consumers have less confidence in the federal government to protect their data than cellphone companies, email service providers, and credit card companies:

That the government has been terrible on both ends of this problem makes this op-ed response at The New York Times by Zeynep Tufekci all the more confusing: She blames Microsoft and other tech companies for apparently wanting to be paid to keep fixing and updating outdated operating systems. While she acknowledges that doing so involves costs, she seems to think that Microsoft should just suck it up and shell out. This is a rather remarkable hot take (and she's most certainly not alone in it):

[C]ompanies like Microsoft should discard the idea that they can abandon people using older software. The money they made from these customers hasn't expired; neither has their responsibility to fix defects. Besides, Microsoft is sitting on a cash hoard estimated at more than $100 billion (the result of how little tax modern corporations pay and how profitable it is to sell a dominant operating system under monopolistic dynamics with no liability for defects).

Has anybody ever seen a demand for free goods and services couched in an argument as fundamentally dumb as "The money hasn't expired!"? Why does The New York Times continue to charge subscribers year after year? The money readers paid the first time hasn't expired!

Note that she also takes aim at those evil corporations and their money "hoards." Earlier in the column she described the NHS, a massive government juggernaut of a bureaucracy, as "cash-strapped." The NHS blows through the equivalent of Microsoft's "hoard" and then some every single year. Its most recent annual budget is around $122 billion, and it is projected to keep growing. It's disingenuous to portray Microsoft as Scrooge McDuck and the NHS as a beggar on a street corner with a sign and a hat.

If nothing else, perhaps the NHS's poor financial prioritization and lack of responsibility will warn Americans against socialized single-payer healthcare systems? No, probably not.

Tufekci's piece isn't all terrible; she, too, recognizes the NSA's culpability in this breach for prioritizing offense over defense. But she nevertheless thinks the problem is not enough government, even though this disaster is, all around, a direct result of poor government behavior:

It is time to consider whether the current regulatory setup, which allows all software vendors to externalize the costs of all defects and problems to their customers with zero liability, needs re-examination.

Whatever new regulations may be brought to bear against Microsoft will not stop these costs from being "externalized." That's how consumer markets work. If the government mandates that software vendors keep covering, updating, and protecting their customers, guess what's going to happen to the price of software? It's going to go up.

Hold the government accountable for all these screw-ups, not Microsoft. The government is the one responsible. And Microsoft is not happy about the NSA's behavior either. Brad Smith, Microsoft's president and chief legal officer, called out the feds for their responsibility for these threats to citizens:

The governments of the world should treat this attack as a wake-up call. They need to take a different approach and adhere in cyberspace to the same rules applied to weapons in the physical world. We need governments to consider the damage to civilians that comes from hoarding these vulnerabilities and the use of these exploits. This is one reason we called in February for a new "Digital Geneva Convention" to govern these issues, including a new requirement for governments to report vulnerabilities to vendors, rather than stockpile, sell, or exploit them.