The End of Doom

Good news! Dire predictions about cancer epidemics, mass extinction, overpopulation, and more turned out to be a bust.

"The human predicament is driven by overpopulation, overconsumption of natural resources, and the use of unnecessarily environmentally damaging technologies and socio-economic-political arrangements to service Homo sapiens' aggregate consumption," declared notorious doomster Paul Ehrlich and his biologist wife Anne Ehrlich in the March 2013 Proceedings of the Royal Society B. They additionally warned, "Another possible threat to the continuation of civilization is global toxification," which has "expos[ed] the human population to myriad subtle poisons."

In 1968, Ehrlich infamously prophesied in The Population Bomb, "The battle to feed all of humanity is over. In the 1970s the world will undergo famines—hundreds of millions of people are going to starve to death in spite of any crash programs embarked upon now." Ehrlich was wrong then, and he and his wife are wrong now. The world is not going to be overpopulated, run out of resources, or see the outbreak of massive cancer epidemics due to exposures to synthetic chemicals. Let's take a close look at five threats that failed to materialize, despite the warnings of 20th century doomsayers.

The Cancer Epidemic

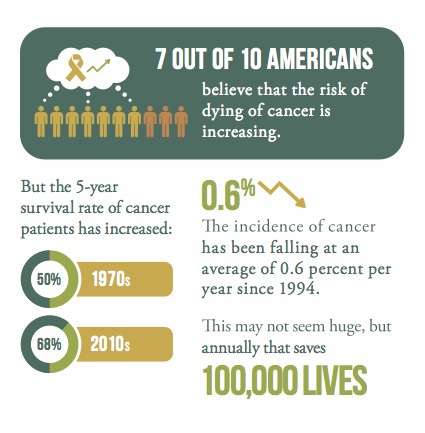

In 2007, an American Cancer Society poll found that 7 out of 10 Americans believed that the risk of dying of cancer is going up. And no wonder, with authorities such as the prestigious President's Cancer Panel ominously reporting that "with nearly 80,000 chemicals on the market in the United States, many of which are used by millions of Americans in their daily lives and are un- or understudied and largely unregulated, exposure to potential environmental carcinogens is widespread." One member of that panel, Howard University surgeon Dr. LaSalle D. Leffall Jr., went so far as to declare in 2010 that "the increasing number of known or suspected environmental carcinogens compels us to action, even though we may currently lack irrefutable proof of harm."

There's just one problem with the panic: There is no growing cancer epidemic. Even as the number of man-made chemicals has proliferated, your chances of dying of the disease have been dropping for more than four decades. And not only have cancer death rates been declining significantly, age-adjusted cancer incidence rates have been falling for nearly two decades. That is, in nearly every age group, a smaller share of Americans is actually coming down with cancer. What's more, modern medicine has increased the five-year survival rates of cancer patients from 50 percent in the 1970s to 68 percent today.

In fact, the overall incidence of cancer has been falling about 0.6 percent per year since 1994. That may not sound like much, but as Dr. John Seffrin, CEO of the American Cancer Society, explains, "Because the rate continues to drop, it means that in recent years, about 100,000 people each year who would have died had cancer rates not declined are living to celebrate another birthday."

What's going on? According to the Centers for Disease Control and Prevention, age-adjusted cancer incidence rates have been dropping largely because fewer Americans are smoking, more are having colonoscopies in which polyps that might become cancerous are removed, and in the early 2000s many women stopped hormone replacement therapy (which moderately increases the risk of breast cancer).

How did it come to be the conventional wisdom that man-made chemicals are especially toxic and the chief sources of a modern cancer epidemic? It all began with Rachel Carson, the author of the 1962 book Silent Spring.

In Silent Spring, Carson crafted an ardent denunciation of modern technology, hostility to which drives environmentalist ideology to this day. At its heart is this belief: Nature is beneficent, stable, and even a source of moral good; humanity is arrogant, heedless, and often the source of moral evil. Carson, more than any other person, is responsible for the politicization of science that afflicts our contemporary public policy debates.

Rachel Carson surely must have known that cancer is a disease in which the risk goes up as people age. And thanks to vaccines and new antibiotics, Americans in the 1950s were living much longer, long enough to get and die of cancer. In 1900 average life expectancy was 47, and the annual death rate was 1,700 out of 100,000 Americans. By 1960, life expectancy had risen to nearly 70 years, and the annual death rate had fallen to 950 per 100,000 people. Currently, life expectancy is more than 78 years, and the annual death rate is 790 per 100,000 people. Today, although only about 13 percent of Americans are over age 65, they account for 53 percent of new cancer diagnoses and 69 percent of cancer deaths.

Carson realized that even if people didn't worry much about their own health as they aged, they did really care about that of their kids. So to ratchet up the fear factor, she asserted that children were especially vulnerable to the carcinogenic effects of synthetic chemicals. "The situation with respect to children is even more deeply disturbing," she wrote. "A quarter century ago, cancer in children was considered a medical rarity. Today, more American school children die of cancer than from any other disease [her emphasis]." In support of this claim, she reported that "twelve per cent of all deaths in children between the ages of one and fourteen are caused by cancer."

Although it sounds alarming, Carson's statistic is essentially meaningless out of context, which she failed to supply. It turns out that the percentage of children dying of cancer was rising because other causes of death, such as infectious diseases, were drastically declining. The American Cancer Society reports that about 10,450 children in the United States will be diagnosed with cancer in 2014 and that childhood cancers make up less than 1 percent of all cancers diagnosed each year. Childhood cancer incidence has been rising slowly over the past couple of decades at a rate of 0.6 percent per year. Consequently, the incidence rate increased from 13 per 100,000 in the 1970s to 16 per 100,000 now. There is no known cause for this increase. The good news is that 80 percent of kids with cancer now survive five years or more, up from 50 percent in the 1970s.

So did the predicted cancer doom ever arrive? No. Remember: overall incidence rates are down.

With regard to cancer risks posed by synthetic chemicals, the American Cancer Society in its 2014 Cancer Facts and Figures report concludes: "Exposure to carcinogenic agents in occupational, community, and other settings is thought to account for a relatively small percentage of cancer deaths, about 4 percent from occupational exposures and 2 percent from environmental pollutants (man-made and naturally occurring)."

Similarly, the British organization Cancer Research UK observes that for most people "harmful chemicals and pollution pose a very minor risk." How minor? Cancer Research UK notes, "Large organizations like the World Health Organization and the International Agency for Research into Cancer have estimated that pollution and chemicals in our environment only account for about 3 percent of all cancers. Most of these cases are in people who work in certain industries and are exposed to high levels of chemicals in their jobs." Like the American Cancer Society, Cancer Research UK advises, "Lifestyle factors such as smoking, alcohol, obesity, unhealthy diets, inactivity, and heavy sun exposure account for a much larger proportion of cancers."

Overpopulation

"To ecologists who study animals, food and population often seem like sides of the same coin," wrote Paul and Anne Ehrlich in 1990. "If too many animals are devouring it, the food supply declines; too little food, the supply of animals declines." And then the kicker: "Homo sapiens is no exception to that rule."

The Ehrlichs predicted that if the global climate system remained stable "it might take three decades or more for the food-production system to come apart unless its repair became a top priority of all humanity." They added, "One thing seems safe to predict: starvation and epidemic disease will raise death rates over most of the planet." It's 25 years later and instead of going up, the global crude death rate has dropped from 9.7 per 1,000 people in 1990 to 7.9 now.

More than two decades later, despite a distinct lack of apocalypses in the intervening time, the couple was still singing the same tune. For example, during a May 2013 conference at the University of Vermont, Paul Ehrlich asked, "What are the chances a collapse of civilization can be avoided?" His answer: 10 percent.

Did the Ehrlichs just get the timing wrong? Is the threat of overpopulation still looming over us?

In September 2014, demographers working with the United Nations Population Division published an article in Science arguing that world population would grow to around 11 billion by 2100. Nearly all of that increase—4 billion people—was projected in sub-Saharan Africa.

But Wolfgang Lutz and his fellow demographers at the International Institute for Applied Systems Analysis beg to differ. In their November 2014 study, World Population and Human Capital in the Twenty-First Century, Lutz and his colleagues take into account the fact that the education levels of women are rising fast around the world, including in Africa. "In most societies, particularly during the process of demographic transition, women with more education have fewer children, both because they want fewer and because they find better ways to pursue their goals," they note. Given current age, sex, and education trends, they estimate that world population will most likely peak at 9.6 billion by 2070 and then begin falling. If the boosting of education levels is pursued more aggressively, world population could instead top out at 8.9 billion in 2060 before starting to drop.

Falling fertility rates are overdetermined—that is, there is a plethora of mutually reinforcing data and hypotheses that explain the global downward trend. These include the effects of increased economic opportunities, more education, longer lives, greater liberty, and expanding globalization and trade. The crucial point is that all of these explanations reinforce one another and accelerate the trend of falling global fertility. Even more interestingly, they all emphasize how the opportunities afforded women by modernity produce lower fertility.

Insight into how the life prospects of women shape reproductive outcomes is provided in a 2010 article in Human Nature, "Examining the Relationship Between Life Expectancy, Reproduction, and Educational Attainment." In that study, University of Connecticut anthropologists Nicola Bulled and Richard Sosis divvied up 193 countries into five groups by their average life expectancies. In countries where women can expect to live to between 40 and 50 years, they bear an average of 5.5 children. Those with life expectancies between 51 and 61 average 4.8 children. The big drop in fertility occurs at that point. Bulled and Sosis found that when women's life expectancy rises to between 61 and 71 years, their total fertility drops to 2.5 children; between 71 and 75 years, it's 2.2 children; and over 75 years, women average 1.7 children. The United Nations' 2012 Revision notes that global average life expectancy at birth rose from 47 years in 1955 to 70 years in 2010. These findings suggest that it is more than just coincidence that the average global fertility rate has fallen over that time period from 5 to 2.45 children today.

In any case, it is a mistake to decry people as just consumers of resources. With the advent of democratic capitalism, they are also creators of new technologies, services, and ideas that have over the past two centuries enabled billions to rise from humanity's natural state of abject poverty and pervasive ignorance.

Killer Tomatoes

Given the well-established advantages of modern biotech crops, including such benefits to the natural environment as lessening pesticide use, preventing soil erosion, tolerating drought, and boosting yields, why do many leading environmentalist groups so strenuously oppose them?

Much of that answer is historically contingent. Just as biotech crops were being commercialized in the 1990s, they ran into a perfect storm of food and safety scandals in Europe. In order to protect beef farmers and suppliers, British food safety authorities downplayed the dangers of "mad cow" disease, which was spread by feeding cattle infected sheep's brains. The agency in charge of French public health permitted human transfusions of HIV-contaminated blood, despite the existence of screening technology. And the asbestos industry was revealed to have exercised undue influence over its regulators in evaluating the risks posed by that toxic mineral.

These incidents of fecklessness "led to strong distrust and caused people to think that firms and public authorities sometimes disregard certain health risks in order to protect certain economic or political interests," argues French National Institute of Agricultural Research analyst Sylvie Bonny. Consequently, the events "increased the public's attention to critical voices, and so the principle of precaution became an omnipresent reference." So when biotech crops were being introduced, much of the European public was primed to take seriously any claims that this new technology might carry hidden risks.

Bonny points out that opposition to biotech crops arose first among "ecologist associations," including Greenpeace and Friends of the Earth. In fact, hyping opposition to biotech crops served as a lifeline to these organizations. Bonny notes that in the late 1990s, Greenpeace in France was experiencing a serious falloff in membership and donations, but the genetically modified organism (GMO) issue rescued the group. "Its anti-GMO action was instrumental in strengthening Greenpeace-France which had been in serious financial straits," she reports. It should always be borne in mind that environmentalist organizations raise money to support themselves by scaring people. More generally, Bonny observes, "For some people, especially many activists, biotechnology also symbolizes the negative aspects of globalization and economic liberalism." She adds, "Since the collapse of the communist ideal has made direct opposition to capitalism more difficult today, it seems to have found new forms of expression including, in particular, criticism of globalization, certain aspects of consumption, technical developments, etc."

These concerns obviously go well beyond any scientific considerations regarding the safety of biotech crops for health and the environment, and have had significant negative consequences for the acceptance of this useful technology around the world, especially in poor countries.

No one has ever gotten so much as a cough, sneeze, sniffle, or stomachache from eating foods made with ingredients from modern biotech crops. Every independent scientific body that has ever evaluated the safety of biotech crops has found them to be safe for humans to eat. "We have reviewed the scientific literature on [genetically engineered] crop safety for the last 10 years that catches the scientific consensus that has matured since GE plants became widely cultivated worldwide, and we can conclude that the scientific research conducted so far has not detected any significant hazard directly connected with the use of GM crops," asserted a team of Italian university researchers in September 2013. And they should know, since they conducted the largest ever survey of scientific studies—more than 1,700—that evaluated the safety of such crops.

A statement issued by the board of directors of the American Association for the Advancement of Science (AAAS), the largest scientific organization in the United States, on October 20, 2012, point-blank asserted that "contrary to popular misconceptions, GM crops are the most extensively tested crops ever added to our food supply. There are occasional claims that feeding GM foods to animals causes aberrations ranging from digestive disorders, to sterility, tumors and premature death. Although such claims are often sensationalized and receive a great deal of media attention, none have stood up to rigorous scientific scrutiny." The AAAS board concluded, "Indeed, the science is quite clear: crop improvement by the modern molecular techniques of biotechnology is safe."

In July 2012, the European Commission's chief scientific adviser, Anne Glover, declared, "There is no substantiated case of any adverse impact on human health, animal health, or environmental health, so that's pretty robust evidence, and I would be confident in saying that there is no more risk in eating GMO food than eating conventionally farmed food."

At its annual meeting in June 2012, the American Medical Association endorsed a report from its Council on Science and Public Health arguing against the labeling of bioengineered foods. The report concluded, "Bioengineered foods have been consumed for close to 20 years, and during that time, no overt consequences on human health have been reported and/or substantiated in the peer reviewed literature." In December 2010, a European Commission review of 130 E.U.-funded biotechnology research projects, covering a period of more than 25 years and involving more than 500 independent research groups, found "no scientific evidence associating GMOs with higher risks for the environment or for food and feed safety than conventional plants and organisms."

Catastrophic Climate Change

Let's start by accepting that global warming is real. There are two ways to address concerns about climate change: adaptation and mitigation. In 2014, the United Nations Intergovernmental Panel on Climate Change (IPCC) issued two reports addressing these options: Climate Change 2014: Impacts, Adaptation, and Vulnerability (hereafter the Adaptation report), which describes adaptation as the "process of adjustment to actual or expected climate and its effects"; and Climate Change 2014: Mitigation (hereafter the Mitigation report), which defines mitigation as "a human intervention to reduce the sources or enhance the sinks of greenhouse gases."

The IPCC reports offer cost estimates for both adaptation and mitigation. The Adaptation report reckons that, assuming that the world takes no steps to deal with climate change, "global annual economic losses for additional temperature increases of around 2°C are between 0.2 and 2.0 percent of income." The report adds, "Losses are more likely than not to be greater, rather than smaller, than this range."

In a 2010 Proceedings of the National Academy of Sciences article, Yale economist William Nordhaus assumed that humanity will do nothing to cut greenhouse gas emissions. Nordhaus uses an integrated assessment model that combines the scientific and socioeconomic aspects of climate change to assess policy options for climate change control. His RICE-2010 integrated assessment model found that "of the estimated damages in the uncontrolled (baseline) case, those damages in 2095 are $12 trillion, or 2.8% of global output, for a global temperature increase of 3.4°C above 1900 levels." Nordhaus' estimate evidently assumes that the world's economy will grow at about 2.5 percent annually, reaching a total GDP of roughly $450 trillion in 2095.

What might the world's economy look like by 2100 using various policies with the aim of mitigating or adapting to climate change? In 2012, the IPCC asked the economists in the Environment Directorate at the Organisation for Economic Co-operation and Development to peer into the future and devise a plausible set of shared socioeconomic pathways (SSPs) to the year 2100. The OECD economists came up with five baseline scenarios. Let's take a look at a couple of the scenarios to get some idea of how the world's economy might evolve over the remainder of this century. The OECD analysis begins in 2010 with a world population of 6.8 billion and a total world gross product of $67 trillion (in 2005 dollars). This yields a global per-capita income just shy of $10,000. For reference the OECD notes that U.S. 2010 per capita income averaged $42,000.
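The per-capita starting point is simple division, and a few lines of Python make it easy to check. This is only a sketch: the two inputs are the figures quoted above, and the function and variable names are invented for illustration.

```python
# Figures quoted in the text, in 2005 dollars. Names are illustrative only.
WORLD_PRODUCT_2010 = 67e12      # total world gross product, dollars
WORLD_POPULATION_2010 = 6.8e9   # people


def per_capita_income(total_product: float, population: float) -> float:
    """Average income per person: total output divided by head count."""
    return total_product / population


income_2010 = per_capita_income(WORLD_PRODUCT_2010, WORLD_POPULATION_2010)
print(f"2010 global per-capita income: ${income_2010:,.0f}")
```

The result lands a little under $10,000, in line with the "just shy" description.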

The SSP2 scenario is described as the "middle of the road" projection in which "trends typical of recent decades continue, with some progress towards achieving development goals, reductions in resource and energy intensity at historic rates, and slowly decreasing fossil fuel dependency." If economic and demographic history unfolds as that scenario suggests, world population will have peaked at around 9.6 billion in 2065 and fallen to just over 9 billion by 2100. The world's economy will have grown more than eightfold, from $67 trillion to $577 trillion (2005 dollars). Average income per person globally will have increased from around $10,000 today to $60,000 by 2100. U.S. annual incomes would average just over $100,000.

In the SSP5 "conventional development" scenario, the world economy grows flat out, which "leads to an energy system dominated by fossil fuels, resulting in high [greenhouse gas] emissions and challenges to mitigation." Because there is more urbanization and because there are higher levels of education, world population will peak at 8.6 billion in 2055 and will have fallen to 7.4 billion by 2100. The world's economy will grow 15-fold to just over $1 quadrillion, and the average person in 2100 will be earning about $138,000 per year. U.S. annual incomes would exceed $187,000 per capita. It is of more than passing interest that people living in the warmer world of SSP5 are much better off than people in the cooler SSP2 world.

The OECD analysis adds with regard to climate change in this scenario that the much richer and more highly educated people in 2100 will face "lower socio-environmental challenges to adaptation result[ing] from attainment of human development goals, robust economic growth, highly engineered infrastructure with redundancy to minimize disruptions from extreme events, and highly managed ecosystems." In other words, greater wealth and advanced technologies will significantly enhance people's capabilities to deal with whatever the deleterious consequences of climate change turn out to be.

As noted above, the IPCC estimates that failure to adapt to climate change will reduce future incomes by between 0.2 and 2 percent for temperatures exceeding 2°C. Yale's Nordhaus is one of the more accomplished researchers in the area of trying to calculate the costs and benefits of climate change. In his 2013 book The Climate Casino: Risk, Uncertainty, and Economics for a Warming World, Nordhaus notes that a survey of studies that try to estimate the aggregated damages that climate change might inflict at 2.5°C warming comes in at an average of about 1.5 percent of global output. The highest climate damage estimate Nordhaus cites is a 5 percent reduction in income, though the much-criticized 2006 Stern Review: The Economics of Climate Change suggested that the business-as-usual path of economic growth and greenhouse gas emissions could reduce future incomes by as much as 20 percent.

Future temperatures will perhaps exceed these, but transient climate response temperatures over the remainder of the century are likely to be close to the 2.5°C benchmark cited by the IPCC. In the scenarios sketched out above, a 2 percent loss of income would mean that the $60,000 and $138,000 per capita income averages would fall to $58,800 and $135,240, respectively. Stern's more apocalyptic estimate would cut 2100 per capita incomes to $48,000 and $110,400, respectively.
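For readers who want to rerun these numbers, here is a minimal sketch of the arithmetic. It assumes only the per-capita incomes and loss percentages quoted above; the function name and dictionary labels are made up for the example.

```python
def income_after_loss(income: float, loss_fraction: float) -> float:
    """Return income reduced by a fractional loss (0.02 means 2 percent)."""
    return income * (1.0 - loss_fraction)


# Projected 2100 per-capita incomes quoted in the text (2005 dollars).
SCENARIO_INCOMES = {"SSP2": 60_000, "SSP5": 138_000}

# Loss estimates quoted in the text: the top of the IPCC range and Stern's figure.
LOSSES = {"IPCC upper bound": 0.02, "Stern Review": 0.20}

for label, loss in LOSSES.items():
    for scenario, income in SCENARIO_INCOMES.items():
        reduced = income_after_loss(income, loss)
        print(f"{label}, {scenario}: ${reduced:,.0f}")
```

Swapping in a different loss fraction, such as the 1.5 percent survey average that Nordhaus reports, shows how little the projected incomes move.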

So how much should people living now on incomes averaging $10,000 per year spend to make sure that the incomes of future people, which will likely be six to 14 times higher, aren't reduced by a couple of percentage points? As Nordhaus observes, "Most philosophers and economists hold that rich generations have a lower ethical claim on resources than poorer generations."

Making the extreme set of assumptions that all countries of the world begin mitigation immediately, adopt a single global carbon price, and widely deploy current versions of low- and no-carbon technologies such as wind, solar, and nuclear power, the IPCC's 2014 Mitigation report estimates that keeping carbon dioxide concentrations below 450 parts per million (ppm) in 2100 would result in "an annualized reduction of consumption growth by 0.04 to 0.14 percentage points over the century relative to annualized consumption growth in the baseline that is between 1.6 percent and 3 percent per year." The median estimate of the reduction in annualized consumption growth is 0.06 percentage points.

The IPCC Mitigation report notes that the optimal scenario that it sketches out for keeping greenhouse gas concentrations below 450 ppm would cut incomes in 2100 by between 3 and 11 percent. How much would that be? As was done with regard to the losses from a lack of adaptation, let's look at how much the worst-case mitigation scenario might reduce future incomes. Without extra mitigation, the increase of global gross product to $577 trillion in the middle-of-the-road scenario implies an economic growth rate of 2.42 percent between 2010 and 2100. Cutting that growth rate by 0.14 percentage points to 2.28 percent yields an income of $510 trillion in 2100, reducing per capita incomes from $60,000 to $57,000 per capita. Growth in the conventional-development scenario is cut from an implied 3.07 percent to 2.93 percent, reducing overall income from over $1.015 quadrillion to $901 trillion, and cutting average incomes from $138,000 to $122,000.
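The growth-rate arithmetic behind those totals can be sketched in a few lines. The sketch assumes simple annual compounding over the 90 years from 2010 to 2100 and uses only the gross-product figures quoted above; the function names are invented for illustration. Because it carries the implied growth rates at full precision instead of rounding them to two decimals first, its totals can differ from the ones in the text by a few trillion dollars.

```python
START_PRODUCT = 67.0   # world gross product in 2010, trillions of 2005 dollars
YEARS = 90             # 2010 to 2100
GROWTH_CUT = 0.0014    # 0.14 percentage points, the top of the IPCC range

# Baseline 2100 gross product quoted in the text, in trillions of 2005 dollars.
BASELINE_2100 = {"SSP2": 577.0, "SSP5": 1015.0}


def implied_growth_rate(start: float, end: float, years: int) -> float:
    """Constant annual growth rate that turns start into end over the period."""
    return (end / start) ** (1.0 / years) - 1.0


def project(start: float, rate: float, years: int) -> float:
    """Compound start forward at a constant annual rate."""
    return start * (1.0 + rate) ** years


results = {}
for scenario, end_product in BASELINE_2100.items():
    baseline_rate = implied_growth_rate(START_PRODUCT, end_product, YEARS)
    mitigated = project(START_PRODUCT, baseline_rate - GROWTH_CUT, YEARS)
    results[scenario] = (baseline_rate, mitigated)
    print(
        f"{scenario}: baseline growth {baseline_rate:.2%}, "
        f"2100 product with mitigation about ${mitigated:,.0f} trillion"
    )
```

Dividing either total by a projected 2100 population gives the matching per-capita figure, which depends on which population estimate is plugged in.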

All of these figures must be taken with a vat of salt since they are projections for economic, demographic, and biophysical events nearly a century from now. That being acknowledged, projected IPCC income losses that would result from doing nothing to adapt to climate change appear to be roughly comparable to the losses in income that would occur following efforts to slow climate change. In other words, it appears that doing nothing about climate change now will cost future generations about the same as doing something now.

If the results of adaptation and mitigation are more or less the same for future generations, how to pick which path to follow? Given what we know about the competence and efficiency of government, it seems likely that what governments try to do to mitigate climate change may well turn out to be worse than climate change. The better path is one that helps future generations deal with climate change by adopting policies that encourage rapid economic growth. This would endow future generations with the wealth and superior technologies necessary to handle whatever comes at them, including climate change.

Mass Extinction

Many biologists and conservationists are urgently warning that humanity is on the verge of wiping out hundreds of thousands of species in this century. "A large fraction of both terrestrial and freshwater species faces increased extinction risk under projected climate change during and beyond the 21st century," states the 2014 IPCC Adaptation report. "Current rates of extinction are about 1000 times the likely background rate of extinction," asserts a May 2014 review article in Science by Duke University biologist Stuart Pimm and his colleagues. "Scientists estimate we're now losing species at 1,000 to 10,000 times the background rate, with literally dozens going extinct every day," warns the Center for Biological Diversity. That group adds, "It could be a scary future indeed, with as many as 30 to 50 percent of all species possibly heading toward extinction by mid-century."

Eminent Harvard University biologist E.O. Wilson agrees. "We're destroying the rest of life in one century. We'll be down to half the species of plants and animals by the end of the century if we keep at this rate." University of California at Berkeley biologist Anthony Barnosky similarly notes, "It looks like modern extinction rates resemble mass extinction rates." Assuming that species loss continues unabated, Barnosky adds, "The sixth mass extinction could arrive within as little as three to 22 centuries."

Let's assume 5 million species. If Wilson is right that half could be gone by the middle of this century, that implies that species are disappearing at a rate of 71,000 per year, or just under 200 per day. Contrast this implied extinction rate with Pimm and his colleagues, who estimate that the background rate of extinction without human influence is about 0.1 species per million species-years. This means that if one followed the fates of one million species, one would expect to observe about one species going extinct every 10 years. Their estimate of the current rate is 100 species going extinct per million species-years. So if the world contains 5 million species, then that suggests that 500 are going extinct every year. Obviously, there is a huge gap between Wilson's off-the-cuff estimate and Pimm's more cautious calculations, though both assessments are troubling.
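The gap between the two estimates is easy to reproduce. The sketch below uses the 5 million species assumed above and the rates attributed to Pimm and his colleagues. The 35-year horizon (roughly 2015 to 2050) is an assumption made for this example, not a figure stated in the text, and the function names are hypothetical.

```python
TOTAL_SPECIES = 5_000_000
YEARS_TO_MIDCENTURY = 35   # assumption: roughly 2015 to 2050, not stated in the text


def species_lost_per_year(total: int, fraction_lost: float, years: int) -> float:
    """Average annual losses if a given fraction disappears over the period."""
    return total * fraction_lost / years


def extinctions_per_year(rate_per_million_species_years: float, total: int) -> float:
    """Convert a rate per million species-years into extinctions per year."""
    return rate_per_million_species_years * total / 1_000_000


half_gone = species_lost_per_year(TOTAL_SPECIES, 0.5, YEARS_TO_MIDCENTURY)
background = extinctions_per_year(0.1, TOTAL_SPECIES)
current = extinctions_per_year(100, TOTAL_SPECIES)

print(f"Half gone by mid-century: {half_gone:,.0f} per year, {half_gone / 365:,.0f} per day")
print(f"Background rate: {background:.1f} per year")
print(f"Current-rate estimate: {current:,.0f} per year")
print(f"Ratio of the two modern estimates: about {half_gone / current:,.0f} to 1")
```

On these assumptions the half-gone scenario implies losses more than a hundred times higher than the rate-based estimate.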

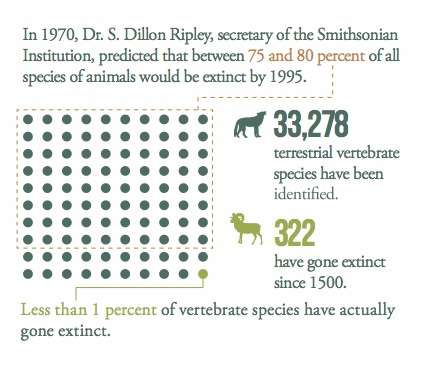

But this is not the first time biologists have sounded the alarm over purportedly accelerated species extinctions. In 1970, Dr. S. Dillon Ripley, secretary of the Smithsonian Institution, predicted that in 25 years, somewhere between 75 and 80 percent of all the species of living animals would be extinct. That is, as many as four out of every five species of animals would be extinct by 1995. Happily, that did not happen. In 1994, biologist Peter Raven predicted that "since more than nine-tenths of the original tropical rainforests will be removed in most areas within the next thirty years or so, it is expected that half of the organisms in these areas will vanish with it." It's now more than 20 years later and nowhere near 90 percent of the rainforests have been cut down, and no one thinks that half of the species inhabiting tropical forests have vanished.

In 1979, Oxford University biologist Norman Myers suggested in his book The Sinking Ark that 40,000 species per year were going extinct and that the world could lose a million species, or "one-quarter of all species by the year 2000." At a 1979 symposium at Brigham Young University, Thomas Lovejoy, a former president of the H. John Heinz III Center for Science, Economics, and the Environment, announced that he had made "an estimate of extinctions that will take place between now and the end of the century. Attempting to be conservative wherever possible, I still came up with a reduction of global diversity between one-seventh and one-fifth." Lovejoy drew up the first projections of global extinction rates for the Global 2000 Report to the President in 1980. If Lovejoy had been right, between 15 and 20 percent of all species alive in 1980 would be extinct right now. No one believes that extinctions of this magnitude have occurred over the last three decades.

What did happen? As of 2013, the International Union for Conservation of Nature (IUCN) lists 709 known species as having gone extinct since 1500. A study published in Science in July 2014 reported that among terrestrial vertebrates, 322 species have become extinct since 1500. That's not nothing, but those assessments amount to just 1 percent of all vertebrate terrestrial species alive today.

Don't Panic

Why does it matter if the population at large believes these dire predictions about humanity's future? The primary danger is they may fuel a kind of pathological conservatism that could actually become a self-fulfilling prophecy.

The closest thing to a canonical version of the "precautionary principle" was devised by a group of 32 leading environmental activists meeting in 1998 at the Wingspread Center in Wisconsin. The Wingspread Consensus Statement on the Precautionary Principle reads: "When an activity raises threats of harm to human health or the environment, precautionary measures should be taken even if some cause and effect relationships are not fully established scientifically. In this context the proponent of an activity, rather than the public, should bear the burden of proof. The process of applying the Precautionary Principle must be open, informed and democratic and must include potentially affected parties. It must also involve an examination of the full range of alternatives, including no action."

Why was this new principle needed? Because, the Wingspread conferees asserted, the deployment of modern technologies was spawning "unintended consequences affecting human health and the environment," and "existing environmental regulations and other decisions, particularly those based on risk assessment, have failed to protect adequately human health and the environment."

As a result of these unintended side effects and the supposed regulatory inadequacy, the conferees insisted, "Corporations, government entities, organizations, communities, scientists and other individuals must adopt a precautionary approach to all human endeavors" (emphasis added). Contemplate for a moment this question: Are there any human endeavors at all that some timorous person could not assert raise a "threat" of harm to human health or the environment?

Promoters of the precautionary principle argue that its great advantage is that implementing it will help avoid deleterious unintended consequences of new technologies. Unfortunately, supporters most often focus on the seen while ignoring the unseen. In his brilliant essay "What Is Seen and What Is Unseen," 19th century French economist Frédéric Bastiat pointed out that the favorable, readily foreseen effects of a policy are often followed by disastrous later consequences that go unforeseen.

Banning nuclear power plants reduces the imagined seen risk of exposure to radiation while boosting the unseen risks associated with man-made global warming. Prohibiting a pesticide aims to diminish the seen risk of cancer, but elevates the unseen risk of malaria. Demanding more drug trials seeks to prevent the seen risks of toxic side effects, but increases the unseen risks of disability and death stemming from delays in getting effective drugs to patients. Mandating the production of biofuels attempts to address the seen risks of dependence on foreign oil, but heightens the unseen risks of starvation.

Electricity, automobiles, antibiotics, oil production, computers, plastics, vaccinations, chlorination, mining, pesticides, paper manufacture, and nearly everything that constitutes the vast enterprise of modern technology all have risks. On the other hand, it should be perfectly obvious that allowing inventors and entrepreneurs to take those risks has enormously lessened others. How do we know? People in modern societies are enjoying much longer and healthier lives than did our ancestors, with greatly reduced risks of disease, debility, and early death.

The precautionary principle is the opposite of the scientific process of trial and error that is the modern engine of knowledge and prosperity. The precautionary principle impossibly demands trials without errors, successes without failures.

"The direct implication of trial without error is obvious: If you can do nothing without knowing first how it will turn out, you cannot do anything at all," explained Aaron Wildavsky in his 1988 book Searching for Safety. "An indirect implication of trial without error is that if trying new things is made more costly, there will be fewer departures from past practice; this very lack of change may itself be dangerous in forgoing chances to reduce existing hazards." Wildavsky added, "Existing hazards will continue to cause harm if we fail to reduce them by taking advantage of the opportunity to benefit from repeated trials."

Should we look before we leap? Sure we should. But every utterance of proverbial wisdom has its counterpart, reflecting both the complexity and the variety of life's situations and the foolishness involved in applying a short list of hard rules to them. Given the manifold challenges of poverty and environmental renewal that technological progress can help us address in this century, the wiser maxim to heed is "He who hesitates is lost."

This article originally appeared in print under the headline "The End of Doom."