The Problem of Military Robot Ethics

I'm not entirely sure what it would mean for a robot to have morals, but the U.S. military is about to spend $7.5 million to try to find out. As J.D. Tuccille noted earlier today, the Office of Naval Research has awarded grants to artificial intelligence (A.I.) researchers at multiple universities to "explore how to build a sense of right and wrong and moral consequence into autonomous robotic systems," reports Defense One.

The science-fiction-friendly problems with creating moral robots get ponderous pretty fast, especially when the military is involved: What sorts of ethical judgments should a robot make? How should it prioritize between two competing moral claims when conflict inevitably arises? Do we define moral and ethical judgments as somehow outside the realm of logic, and if so, how does a machine built on logical operations make those sorts of considerations? I could go on.

You could perhaps head off a lot of potential problems by installing behavioral restrictions along the lines of Isaac Asimov's Three Laws of Robotics, which state that robots can't harm people or even allow harm through inaction, must obey people unless doing so could cause someone harm, and must protect themselves, except when that conflicts with the other two laws. But in a military context, where robots would at least be aiding a war effort, even if only in a secondary capacity, those sorts of no-harm-to-humans rules would probably prove unworkable.

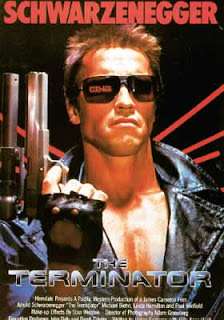

That's especially true if the military ends up pursuing autonomous fighting machines, what most people would probably refer to as killer robots. As the Defense One story notes, the military currently prohibits fully autonomous machines from using lethal force, and even semi-autonomous drones and other systems are not allowed to select and engage targets without prior authorization from a human. But one U.N. human rights official warned last year that governments will likely begin creating fully autonomous lethal machines eventually. Killer robots! Coming soon to a government near you.

Obviously Asimov's Three Laws wouldn't work on a machine designed to kill. Would any moral or ethical system? It seems plausible that you could build in rules that work basically like the safety functions of many machines today, in which specific conditions trigger safety behaviors or shutdown orders. But it's hard to imagine, say, an attack drone with an ethical system that allows it to make decisions about right and wrong in a battlefield context.

What would that even look like? Programming problems aside, the moral calculus involved in waging war is too murky and too widely disputed to install in a machine. You can't even get people to come to any sort of agreement on the morality of using drones for targeted killing today, when they are almost entirely human-controlled. An artificial intelligence designed to do the same thing would just muddy the moral waters even further.

Indeed, it's hard to imagine even a non-lethal military robot with a meaningful moral system of its own, especially if we're pushing into the realm of artificial intelligence. War always presents ethical problems, and no software system is likely to resolve them. If we end up building true A.I. robots, then, we'll probably just have to let them decide for themselves.