Drunk Man Assaults R2D2-Style Security Robot and Gets Arrested

Good thing he didn't mess with a pistol-packing Russian FEDOR robot

While on patrol in the parking lot of its manufacturer, a K5 security robot was assaulted by Jason Sylvain. Sylvain, who was apparently drunk, pushed over the 300-pound R2D2-style robot made by the Knightscope company. Alerted by the robot, two Knightscope employees detained Sylvain until the local police showed up.

The K5 robots operate autonomously and provide 360-degree video of the areas they patrol. They are equipped with two-way audio that allows intercom conversations between the security operations center and a person near the robot. In addition, the robots can broadcast pre-recorded and live audio messages in the areas where they operate. They also monitor environmental conditions, keep track of license plates, and detect wireless activity. The company promises that the robots will soon offer a gun detection feature.

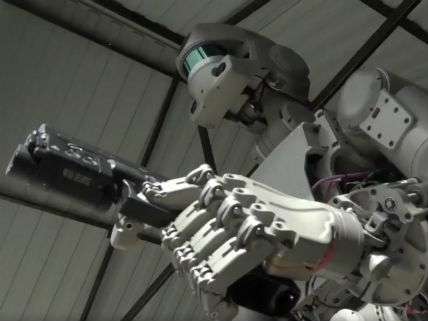

Going beyond mere gun detection, the Russian FEDOR humanoid robot can now shoot guns. As Futurism reports, FEDOR (short for Final Experimental Demonstration Object Research) is a humanoid robot developed by Android Technics and the Advanced Research Fund in Russia. "Final" does sound a bit ominous. In any case, the FEDOR bot can drive a car, use various tools (including keys), screw in lightbulbs, and even do pushups. It has now added to its skill set the ability to hold and fire two pistols at the same time.

Russia's Deputy Prime Minister Dmitry Rogozin tweeted, "Robot FEDOR showed the ability to shoot from both hands. Fine motor skills and decision-making algorithms are still being improved." Rogozin further explained, "Shooting exercises is a method of teaching the robot to set priorities and make instant decisions. We are creating AI, not Terminator." Very reassuring.

Enabling mall security robots to protect themselves by, say, administering a mild electric shock to someone like Sylvain is OK, but arming them with lethal weapons is, well, premature. On the other hand, I am against banning the development of warbots. See FEDOR in action below.