Can You Trust Wikipedia?

Wikipedia shapes our perception of reality today more than ever before because it informs the large language models like ChatGPT. But can we really trust it?

Whom can you trust?

Trust in institutions is at an all-time low. Trust in the media has collapsed.

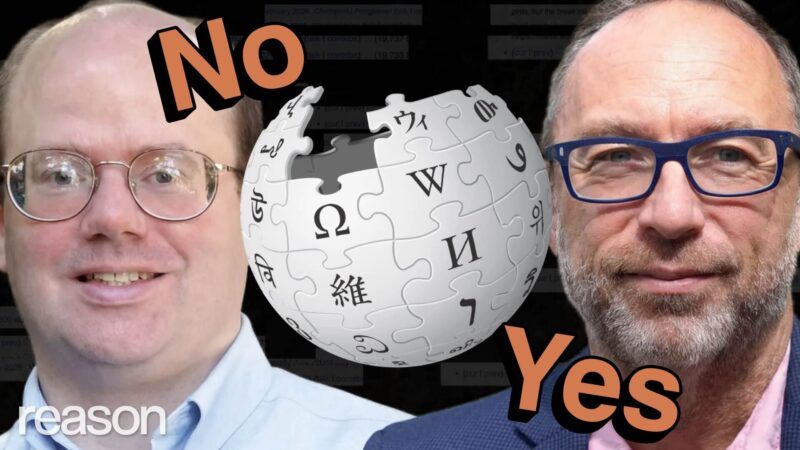

"Meanwhile, during that same 25-year period, Wikipedia has gone from being kind of a joke to one of the few things people trust," says Wikipedia founder Jimmy Wales, whose new book The Seven Rules of Trust: A Blueprint for Building Things That Last shares lessons he learned about building trust while creating the world's largest crowdsourced encyclopedia.

He's right that many of us turn to Wikipedia as one of the last trusted sources for information online.

But can we really trust it?

Wikipedia shapes our perception of reality today more than ever before because it informs the large language models like ChatGPT that we increasingly turn to for answers. Wikipedia pops up as an authoritative source at the top of Google searches and under YouTube videos on controversial topics.

But Elon Musk calls Wikipedia an "extension of legacy media propaganda" and recently launched an AI-based competitor called Grokipedia.

"Wikipedia has come to be dominated by the Left," says Wikipedia co-founder Larry Sanger, who compares it to the Catholic Church before the Reformation. He posted Nine Theses to his Wikipedia user page in the spirit of Martin Luther. Wikipedia, he says, became "the voice of the establishment. It's just that the establishment itself is working behind the scenes."

Wales tells Reason, "This idea that we've been taken over by woke activists just doesn't really track" and the notion that nefarious forces are manipulating Wikipedia behind the scenes is "absolute fucking nonsense."

Welcome to the Wiki Wars.

The most controversial Wikipedia page might be the one belonging to Donald John Trump.

He's described as an "American politician, media personality, and businessman who is the 47th president of the United States." Biographical details include his time as a real estate developer and reality show host, and his 2016 presidential victory over Hillary Clinton. So far, no disagreements.

But the first policy mentioned is a travel ban against seven Muslim-majority countries, followed by expanding the border wall, family separations, rolling back environmental and business regulations, downplaying the severity of the COVID-19 pandemic, refusing to concede the 2020 election, and getting impeached. Then comes a recounting of his legal battles and of his second term, involving "mass layoffs of federal workers," "targeting of political opponents," the "revers[al] of pro-diversity policies," and "persecution of transgender people."

The final paragraph concludes that "many of his comments and actions have been characterized as racist or misogynistic" and that "he has made many false or misleading statements during his campaigns and presidency, to a degree unprecedented in American politics." It also states that his actions "have been described as authoritarian" and "historians ranked him as one of the worst presidents in American history."

Tell us how you really feel, Wikipedians.

"In order to be neutral, it should be impossible for a well-informed reader to tell whether, or what position the writers of the article take on controversial questions that are raised in the article," says Sanger, critiquing what he sees as the site's mission drift.

But Wales defends the Trump article, saying, "If you get a negative view of Donald Trump from reading it, that's not our fault."

Former Wikipedia editor Betty Wills says her experience with Wikipedia changed "quite noticeably" after Trump came on the political scene in 2015. Wills began editing Wikipedia under the username Atsme in 2011. A self-described political centrist, she started contributing to the site by editing a page about the alligator gar, drawing on her expertise in producing a documentary on the topic for PBS. She also weighed in on pain management, pit bulls, and the Taj Mahal, once earning an "Editor of the Week" award for being "one of the best contributors to Wikipedia."

Then Trump ran for president.

"I was doing well until I got involved in politics," says Wills.

She says the editors on Trump's Wikipedia page were constantly trying to insert claims that he was a "liar, racist, that he had a mental condition." One argument was over whether Wikipedia should assert that Trump referred to immigrants from Haiti and El Salvador as coming from "shithole" countries. Wills wanted to note that Sens. Tom Cotton (R–Ark.) and David Perdue (R–Ga.) were in the room and denied hearing the president say the phrase.

Another Wikipedia editor who goes by Mr. X overruled her on the grounds that "most (reliable) sources factually state that the comment was made."

Another argument erupted over a Wikipedia article titled "Racial Views of Donald Trump," which Wills and others wanted to change to "Accusations of Racism against Donald Trump."

"I wanted it to be objective and neutral," says Wills.

"Absolutely not," said Mr. X on the article's talk page, shooting down the title change. "This article is not about accusations; it's about Trump's 45 year documented history of racially-provocative remarks….This is not creative writing where we try to turn the tables and make the so called accusers look like the bad guys."

"Mr X., we're not supposed to make anyone look like the 'bad guys'…including Trump," replied Wills.

A few weeks later, Mr. X brought an arbitration case against Wills, accusing her of violating, among other rules, Wikipedia's "gaslighting" policy by "repeatedly discrediting reliable sources; claiming bias and propaganda in reliable sources."

Wills says that Mr. X created the "gaslighting" policy and altered it to target her.

"He added gaslighting, changed it around to make it fit me, OK. I wasn't gaslighting anybody. All I was doing was telling the truth," says Wills.

Wikipedia banned Wills from editing the Trump page.

The conflict partly revolved around what constitutes a "reliable source," which Wikipedia lists in color-coded fashion on its "perennial sources" page, also created by Mr. X. Reason would have reached out to him for comment, but Mr. X, whose identity is secret, announced he was taking a break from Wikipedia in 2020, returned in 2022 to thank his well-wishers, and has since disappeared.

Sources like the Associated Press, Reuters, and Reason are marked green as "generally reliable in its areas of expertise." Publications marked yellow, like BuzzFeed, Cosmopolitan, and The Daily Beast, have no consensus on reliability. Red sources like the Daily Caller, The Epoch Times, and The Federalist are considered "generally unreliable," while greyed-out sources like Breitbart are blacklisted for alleged "persistent abuse," such as doxxing anonymous Wikipedia editors.

"The number of heavily restricted, I would simply use the word blacklisted sources on Wikipedia that are left wing, it's very small," says Sanger.

Daily Kos, Alternet, and Counterpunch are among the left-wing publications that Wikipedia editors approach with caution. But Sanger is right that far more right-wing sources are marked as questionable. Wales says this reflects the quality of available conservative media.

"The Right tends to have a few more low-quality sources out there," says Wales. "Maybe some right-wing billionaire funders should fund some quality media….I think that would be wonderful for the world, but that's not a Wikipedia problem."

But Sanger says Wikipedians feign naivete about the real cause of this imbalance.

"I don't think they're quite as naive as they're letting on. But they would have you believe that they think that there are objective facts that all responsible people agree upon, and they appear in the greenlit sources. And those other sources are uncontrovertibly bad sources. They are sources of misinformation. Basically, all epistemic virtue is on one side and there's none on the other."

At one point, Wikipedia banned Wills from editing all pages related to American politics. The ban was lifted, but Wills, soured by the experience, launched Justapedia, where editors revise Wikipedia articles with the aim of living up to Justapedia's motto, "where neutrality meets objective truth."

Justapedia's Trump page begins by describing his biographical details, similar to his Wikipedia entry. It summarizes his first term as having "delivered tax cuts, three Supreme Court Justices, and…[a] border wall." It notes his legal troubles, though mentions that House Republicans called his trials "politically motivated" and concludes that "he set a historic record as the first Republican in 20 years to win the popular vote…swept all seven swing states, and secured 312 electoral votes, surviving two assassination attempts."

Kamala Harris' entry notes in the second paragraph that her paternal grandfather was "a prominent slave owner," later says she "has been referred to by her opposition as 'an elitist radical,'" and concludes that she's "received substantial criticism" for her role as border czar "in light of 10 million undocumented immigrants entering the U.S." during her tenure. None of these details appear on Harris' Wikipedia page, which describes her as "the first female, first African American, and first Asian American U.S. vice president, and the highest-ranking female and Asian American official in U.S. history."

Is it possible to achieve real neutrality and objectivity in a digital landscape flooded with conflicting information and opinion? Or is the best we can hope for a conservative-flavored Wikipedia clone? Sanger says the ideal online encyclopedia should include diverse perspectives and let readers decide.

He says Wikipedia's original neutrality policy was meant to encourage editors to present multiple points of view about a controversy without unduly tilting the scale.

For example, Sanger's Wikipedia page says his "status as co-founder has been questioned by [Wikipedia's other co-founder Jimmy] Wales, but is generally accepted." This notes the majority opinion but adds a notable dissenting view.

Sanger, who was laid off in 2002, less than a year after Wikipedia's launch, has criticized the site for years and tried several times to launch competitors.

"In the early days, there was a lot of just plain disrespect for experts who would come by. And that bothered me," says Sanger. "Jimmy Wales, he failed to rein these people in, and he allowed those people to drive off a lot of really good editors."

Sanger says that over the years, Wikipedia's most aggressive and ideological editors worked their way up the ladder.

"The inmates took over the asylum, and they're still in charge," says Sanger. "Wikipedia has come to be dominated by the Left and, generally speaking, people who are, for whatever reason, interested in a particular topic."

Much of that interest among unpaid editors is sparked by a genuine commitment to accuracy and advancing knowledge, but Wikipedia is in a constant battle with vested interests, including P.R. firms revamping client pages and state actors pushing a political agenda.

The Taiwan page is under constant siege, and a vote had to be taken on whether to call it a country before locking that part of the page. The Wikimedia Foundation banned seven members of the Chinese Mainland Wikipedia group in 2021, with a V.P. announcing that "community 'capture' is a real and present threat."

In 2024, a pro-Palestine group coordinating over Discord to mass edit pages related to the Israel-Palestine conflict was exposed in a 244-page dossier compiled by the Jewish Journal.

Wikipedia's arbitration committee eventually sanctioned three of the editors affiliated with the Discord server.

American political activists are also fighting an ideological information war in the bowels of Wikipedia talk pages, with many accounts spending full work days editing Wikipedia pages. According to Sanger, so too are U.S. intelligence agencies.

"One of the main things that intelligence is supposed to be about these days is the massaging of public opinion. They would be falling down on the job if they weren't doing that with respect to Wikipedia. That's just low hanging fruit," says Sanger.

In 2007, a California Institute of Technology grad student named Virgil Griffith built a tool called WikiScanner to trace the I.P. addresses of Wikipedia editors. He found addresses linked to weapons manufacturers, a voting machine company, both major political parties, the House of Representatives, and the CIA.

Since then, Wikipedia has strengthened its conflict-of-interest policies around editing and deployed filters to better block coordinated editing. But the site also began shielding I.P. addresses to protect the anonymity of its editors.

"I think we would be foolish to think it isn't still happening all the time at some level," says Sanger.

But Wales says that's ridiculous.

"I think that it's quite easy for people to imagine that Wikipedia is under assault from dark webs of mysterious operatives, and it's fucking nonsense," says Wales. "I know the Wikipedians. I mean, they're a bunch of geeks."

Wales says that while bad actors try to manipulate entries "around the edges," it's highly inefficient and "not a very useful thing for people to invest in" because of the time and effort it takes to convince other editors to accept the edits.

But some in the U.S. government think Wikipedia has become a national security issue.

The Department of Justice sent the Wikimedia Foundation a letter in April 2025 threatening to revoke its tax-exempt status and alleging that the site is "allowing foreign actors to manipulate information and spread propaganda to the American public."

The British government is also attempting to regulate Wikipedia. A U.K. law could force all Wikipedia editors to relinquish their anonymity in the name of child safety. The government wants editors to provide ID so that minors working on Wikipedia don't come into contact with anonymous adults.

Sanger agrees with the Wikimedia board, which has sued the U.K. over its law, that anonymity is vital for rank-and-file editors.

"People need to be free to share what they know without negative consequences from the authorities," says Sanger.

The reforms Sanger is proposing don't require the heavy hand of the government. But they might require a special session from the Wikimedia board, which is why Sanger is calling for the Wikimedia Foundation to have a "constitutional convention" to implement some structural changes to the editorial process.

One of Sanger's proposals to reform Wikipedia is to de-anonymize the site's most influential editors, which he calls the "power 62," so the public can know who is making the decisions to check and ban I.P. addresses or topic-ban editors like Wills.

"That's probably his worst idea and it doesn't address any problem that we actually have," says Wales. "We don't see the people with the most power egregiously violating the rules of Wikipedia with their power.…We live in a very dangerous world, and we have editors who are heroes who are editing Wikipedia in authoritarian countries."

But Sanger says knowing the identities of the powerful editors would make it easier for Wikipedia to fight against paid, coordinated shadow campaigns, and it would make the project more transparent. He also wants to abolish decision making by "consensus," which he calls an "institutional fiction" that "hides legitimate dissent under a false veneer of unanimity."

"It's three wolves and a sheep deciding what's for dinner," Wills says, describing her experience with Wikipedia's consensus process.

One of Sanger's most radical proposals is to allow competing Wikipedia articles instead of seeking what he says is a false consensus on a single definitive entry. For example, instead of fighting covert Chinese state actors, Wikipedia might invite Chinese nationalists to openly write an article about Taiwan or religious scholars to write an article about Yahweh, the God of the Bible that Wikipedia describes as "an ancient Semitic deity of weather and war"—a characterization Sanger, a recent convert to Christianity, has called offensive and at odds with the views of many modern religious scholars.

Sanger says such problems arise because Wikipedia only permits the "GASP" framework: Globalist, Academic, Secular, and Progressive.

"I don't think there's anything wrong with being academic. As a Ph.D. philosopher, I'm all for it," says Sanger. "It's just that in today's academy, there are a lot of reasonable positions that simply are not considered, or they're such minority positions. The kind of people who edit Wikipedia will pretend that they're not even present in the academy at all."

The case for a more pluralistic Wikipedia is one of epistemic humility—that we should acknowledge the limits of not only what we know, but what we can know. But Wales says competing articles are a "terrible idea" that Wikipedia will never adopt.

"It's really important that we all meet in one article, and that we have that discourse, and we try really hard to achieve neutrality," says Wales.

Wales concedes that Wikipedia could do better on that front. Recently, both Sanger and Wales publicly critiqued an article entitled "Gaza genocide," defining it as "the ongoing, intentional, and systematic destruction of the Palestinian people in the Gaza Strip carried out by Israel." Wales wrote that "this article fails to meet our high standards and needs immediate attention" and that "there is much more work to do." He's currently leading a neutral point-of-view working group within Wikipedia.

"It would be a mistake for Wikipedia to be complacent about any of these issues," says Wales.

Whether or not Wikipedia implements any of Sanger's suggested reforms, large language models are already forcing change.

While Wikipedia still draws more than 100 million unique visits a day, its human pageviews have declined as more people turn to chatbots like ChatGPT and Grok for answers.

The Trump page on Elon Musk's AI-generated encyclopedia Grokipedia is more neutral than what you'll find on either Wikipedia or Justapedia. It highlights Trump's success as the host of The Apprentice alongside his multiple bankruptcies, lists both his major policy achievements and his controversies, and concludes with a description of his 2024 win and early raft of executive orders.

But Wales is concerned that Grokipedia, which allows human feedback on its articles, might ultimately be programmed to reflect the biases of its creator.

"I'm not sure to what extent the project trying to support Elon's view of world, and that, frankly, isn't really gonna be neutral. But we'll see, I mean, I think it's too early to say," says Wales.

Sanger says there are other dangers in leaning too much on AI to generate the definitive record of human affairs.

"We do not want to offload the task of summarizing what we think we know to a machine," says Sanger. "So there's two different purposes of an encyclopedia. One is for people to go and learn the basics about a topic or look up information about the topic, but then the other is to actually authoritatively record what is known or believed to be the case in various fields. It would lack credibility, and it would ultimately draw conclusions that human beings may not be comfortable with. It's a very, very sensitive issue, ultimately, how you wordsmith and which facts you want to present as being facts."

Wikipedia's decentralized and open-source nature is also its greatest source of strength and resilience. As Wales points out, it might be the only publication that has a lengthy, evolving article documenting criticisms of itself.

"Wikipedia is a living thing that people can come and engage and like enter that dialog and enter that conversation. And any publication that doesn't allow for reader feedback in the form of actually getting involved, I just see a limitation there," says Wales.

Sanger says it might not take that many people to change Wikipedia for the better from within.

Wikipedia publishes all its user statistics, which reveal that while there are almost 50 million active users, there are only 822 administrators, 49 Checkusers who can track I.P. addresses, and only 16 people, called bureaucrats, with the power to knight new administrators.

"I do think that it's very important that we get involved, at least for a season, you know?" says Sanger. "Give it the old college try and see if we can, collectively, from the bottom up, change the nature of Wikipedia."

Sanger is right: It's important to fight for fair and accurate information on the internet.

Wikipedia, like every encyclopedia, has shortcomings and biases, and we'll never fully agree on what's true. There will always be contested ground, though John Stuart Mill wrote in his defense of the marketplace of ideas that the goal is still to discover shared truths and that "the well-being of mankind may almost be measured by the number and gravity of the truths which have reached the point of being uncontested."

Even so, Wikipedia is one of the great achievements of the free and open internet, and a huge improvement on the Encyclopedia Britannica era, in which all of our information was filtered through a central authority that nobody could challenge. Despite all the controversy over Wikipedia, nobody is saying we should go back to that world.

Not long ago, the idea that you could trust anything written in a decentralized online encyclopedia seemed totally outlandish. Stephen Colbert famously mocked Wikipedia in 2006, calling it "the encyclopedia where you can be an authority even if you don't know what the hell you're talking about" and joking that reality would be replaced with "Wikiality."

He encouraged his viewers to mass edit the online encyclopedia to claim that the elephant population had tripled in six months and that George Washington didn't have slaves. It worked for a moment. And then…it didn't.

"What was interesting about that, it is kind of a good case of how robust Wikipedia really can be," says Wales. "The page was locked within seconds because, as I told him later, Wikipedians also watch The Colbert Report."

When Colbert complained of "Wikiality," what he was really lamenting was the demise of the informational gatekeepers that once dominated mass media. But those days are finished.

Dissent is cheaper and easier than ever in the digital age, and it's better to harness it for good than to squash it for the illusion of consensus.

Systems that best approximate a marketplace of ideas and insulate themselves from capture or abuse are the ones that will bring us closer to the truth. That might be Wikipedia, or Grokipedia, or some new ecosystem of competing encyclopedias.

Fact finding in the AI-infused digital age is bound to be a messy, confusing, and contentious process, but grasping our way towards the truth always has been.